I have been watching AI evolve quickly, but this week felt like a real shift. Systems are no longer just assisting with tasks. They are starting to execute them end-to-end across coding, devices, and visual understanding. What stood out to me most is how different companies are converging on the same idea: AI that does work, not just suggests it.

AI Agents Enter Software Development

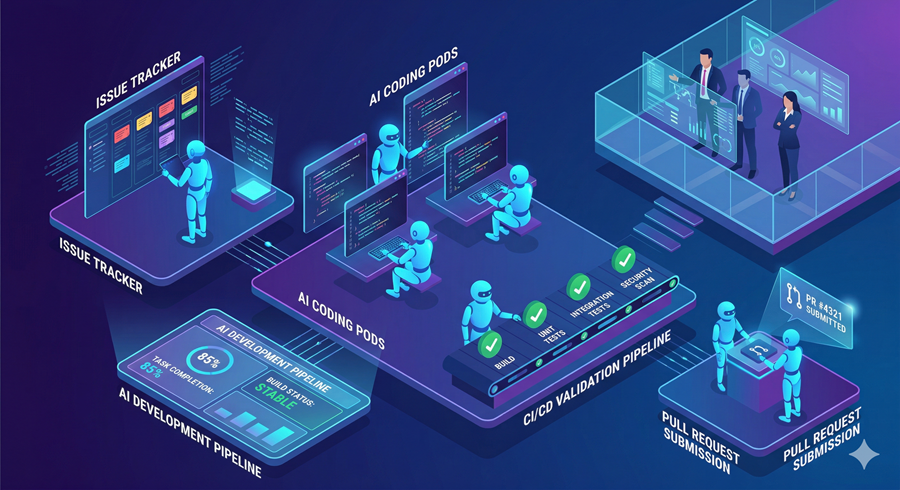

I see a new model of software development emerging in which AI agents are assigned tasks directly from issue trackers, rather than humans performing each step manually. With systems like Symphony, an AI can pick a task, enter an isolated workspace, write code, run tests, and submit a pull request once everything passes validation. Every run is monitored, and the agent must produce proof through testing and documentation before the work is merged.
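To make that workflow concrete, here is a minimal Python sketch of the loop described above: pick a task, work in an isolated workspace, run tests, and open a pull request only when validation passes. This is not Symphony's actual API. Every name here (`Task`, `RunReport`, `run_agent`, and the stub functions) is hypothetical, and the "issue tracker" is just an in-memory list.

```python
import tempfile
from dataclasses import dataclass, field
from pathlib import Path
from typing import Callable, Optional


@dataclass
class Task:
    """A hypothetical issue-tracker entry."""
    task_id: str
    description: str
    status: str = "open"


@dataclass
class RunReport:
    """Evidence the agent produces before a merge is considered."""
    task_id: str
    tests_passed: bool
    log: list[str] = field(default_factory=list)
    pull_request: Optional[str] = None


def run_agent(
    tracker: list[Task],
    write_code: Callable[[Task, Path], None],
    run_tests: Callable[[Path], bool],
) -> Optional[RunReport]:
    # 1. Pick the first open task from the tracker.
    task = next((t for t in tracker if t.status == "open"), None)
    if task is None:
        return None
    task.status = "in_progress"
    report = RunReport(task_id=task.task_id, tests_passed=False)

    # 2. Work inside an isolated, throwaway directory.
    with tempfile.TemporaryDirectory() as tmp:
        workspace = Path(tmp)
        report.log.append("workspace created")

        # 3. Write code (stubbed; a real agent would call a model here).
        write_code(task, workspace)
        report.log.append("code written")

        # 4. Run validation and record the outcome as proof.
        report.tests_passed = run_tests(workspace)
        report.log.append(f"tests passed: {report.tests_passed}")

    # 5. Open a pull request only when validation succeeded.
    if report.tests_passed:
        report.pull_request = f"PR for {task.task_id}"
        task.status = "in_review"
    else:
        task.status = "open"
    return report


# Stubs standing in for the model and the test runner (assumptions).
def write_stub(task: Task, workspace: Path) -> None:
    (workspace / "solution.py").write_text("def add(a, b):\n    return a + b\n")


def check_stub(workspace: Path) -> bool:
    scope: dict = {}
    exec((workspace / "solution.py").read_text(), scope)
    return scope["add"](2, 3) == 5


tracker = [Task("ISSUE-1", "Implement add()")]
report = run_agent(tracker, write_stub, check_stub)
print(report.pull_request, report.log)
```

The point of the sketch is the gate in step 5: the pull request exists only if the recorded test result is positive, which mirrors the "proof before merge" idea.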

Phone-Level Intelligence Takes Over Devices

I now see AI moving out of individual apps and into the operating system itself, where it can control the device at a deeper level. In systems like Xiaomi MClaw, the AI can interpret intent and automatically coordinate phone functions, apps, and smart home devices. It breaks tasks into steps, selects tools, and continuously updates its plan while retaining long context. This turns the phone into an agent that can read messages, schedule events, and even manage household systems.
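The plan, act, and update cycle can be sketched in a few lines of Python. This is a toy illustration under my own assumptions, not how Xiaomi MClaw is implemented: the keyword planner stands in for a model's intent parsing, and `DeviceAgent`, `calendar_tool`, `lights_tool`, and `message_tool` are hypothetical names.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable

Step = tuple[str, str]  # (tool name, argument)


# Hypothetical tools standing in for phone and smart home functions.
def calendar_tool(arg: str) -> str:
    return f"scheduled: {arg}"


def lights_tool(arg: str) -> str:
    return f"lights set to {arg}"


def message_tool(arg: str) -> str:
    return f"sent: {arg}"


@dataclass
class DeviceAgent:
    tools: dict[str, Callable[[str], str]]
    memory: list[str] = field(default_factory=list)  # running context

    def plan(self, intent: str) -> deque[Step]:
        """Toy keyword planner: break the intent into tool steps."""
        steps: deque[Step] = deque()
        text = intent.lower()
        if "dinner" in text:
            steps.append(("calendar", "dinner at 19:00"))
        if "lights" in text:
            steps.append(("lights", "dim"))
        return steps

    def run(self, intent: str) -> list[str]:
        steps = self.plan(intent)
        while steps:
            tool_name, arg = steps.popleft()
            result = self.tools[tool_name](arg)  # select and call the tool
            self.memory.append(result)           # remember what happened
            # Update the plan based on what just ran: a scheduled event
            # triggers a follow-up invitation step.
            if tool_name == "calendar":
                steps.append(("message", f"Invite: {arg}"))
        return self.memory


agent = DeviceAgent(
    tools={"calendar": calendar_tool, "lights": lights_tool, "message": message_tool}
)
results = agent.run("Plan dinner tonight and dim the lights")
print(results)
```

The detail worth noticing is that the plan is a queue the agent can extend mid-run, so a step's outcome can add new steps rather than the whole plan being fixed up front.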

A New Kind of Multimodal Vision Model

Microsoft’s new compact 15-billion-parameter model shows that vision and reasoning systems do not need to be massive to be capable. It processes images and text together, allowing it to interpret screenshots, documents, and charts accurately. A mixed training approach helps it balance fast perception tasks with deeper reasoning when required. This makes it effective for both scientific problem solving and computer-aided automation.

What This Shift Actually Means

Across these systems, the common thread is clear: AI is moving from suggestion to execution. Whether in coding, personal devices, or vision tasks, AI is increasingly acting independently with minimal supervision. This brings both opportunity and responsibility as systems become more capable and more embedded in daily life.

Closing

What stands out most to me is not any single release, but the speed at which all these capabilities are converging. We are entering a phase where AI systems do not just assist with work; they actively perform it across software, devices, and perception. The direction is clear, even if the final outcome is still being written.
