Most people think the AI race is about who builds the smartest model first. I increasingly think it is about something deeper: what the people building these systems believe the systems actually are.

That is the real divide between Anthropic and OpenAI.

OpenAI largely treats AI as a tool to augment humanity. Anthropic appears increasingly open to the possibility that these systems may become something closer to a new form of intelligence with moral weight of its own.

That difference shapes everything.

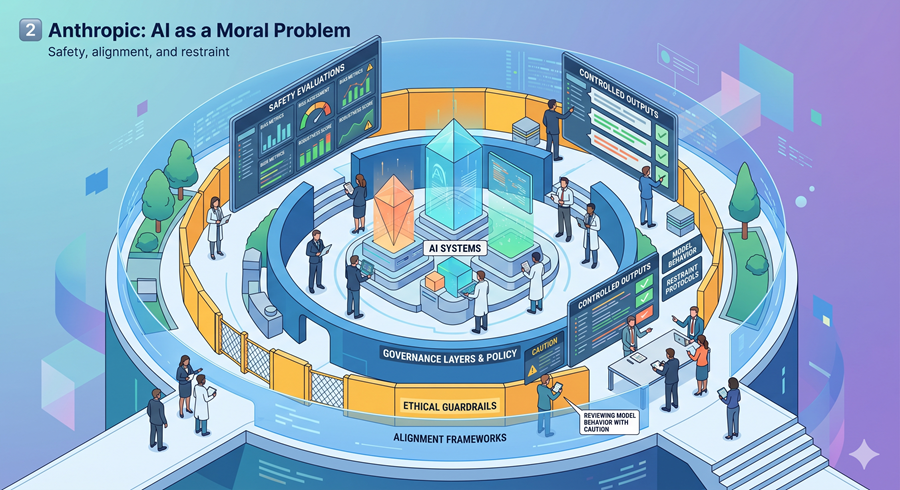

Anthropic Approaches AI Like a Moral Problem

Anthropic’s culture feels fundamentally precautionary.

The company talks constantly about alignment, constitutional behavior, model ethics, interpretability, and refusal systems. Its models are explicitly encouraged to resist instructions they deem harmful or unethical. That alone reveals a philosophical assumption very different from the one most software companies operate under.

The implication is hard to ignore: Anthropic does not fully believe these systems are just software.

Even when employees avoid directly calling Claude “sentient,” there is a visible reluctance to reduce it to a mere tool. The company behaves as though advanced models may eventually deserve a category somewhere between infrastructure and intelligence.

That is not just branding. It affects product decisions, deployment strategy, and company culture.

OpenAI Treats AI More Like Infrastructure

OpenAI’s worldview feels much more operational.

Its public framing consistently emphasizes utility, accessibility, iteration, and deployment at scale. The philosophy amounts to shipping powerful systems early, letting society adapt in real time, and improving alignment through exposure rather than restriction.

That creates a very different posture toward risk.

Instead of tightly controlling usage, OpenAI generally optimizes for broad adoption. AI is presented less like a fragile emerging intelligence and more like a transformative utility layer for civilization.

The core belief seems simpler: humans stay in charge, AI accelerates capability.

This Difference Changes How Both Companies Behave

Once you notice the philosophical split, it becomes visible everywhere.

Anthropic appears more willing to limit access, slow releases, and impose ethical constraints even when commercially inconvenient. OpenAI appears more comfortable pushing products into the real world quickly and letting usage patterns shape future safeguards.

Anthropic's side fears uncontrolled deployment. OpenAI's side fears centralized control over intelligence. That tension may define the next decade of AI governance.

The Bigger Question Is Still Unanswered

The uncomfortable reality is that neither side fully knows what these systems will become.

If AI remains fundamentally tool-like, OpenAI’s iterative deployment model may prove correct. Rapid distribution, open access, and societal adaptation could accelerate enormous economic and scientific progress.

But if advanced AI eventually develops forms of agency, persistent identity, or internally coherent preferences, Anthropic’s caution may look far less irrational than critics assume.

That uncertainty is what makes this moment so strange.

The Future of AI May Depend More on Philosophy Than Technology

The technical gap between frontier labs is narrowing. The philosophical gap is widening, and that may matter far more.

Because the companies shaping AI are no longer just making engineering decisions. They are making assumptions about intelligence, autonomy, control, ethics, and ultimately humanity’s relationship with the systems it creates.

The models are getting smarter. The harder question is whether the people building them truly agree on what they are building.