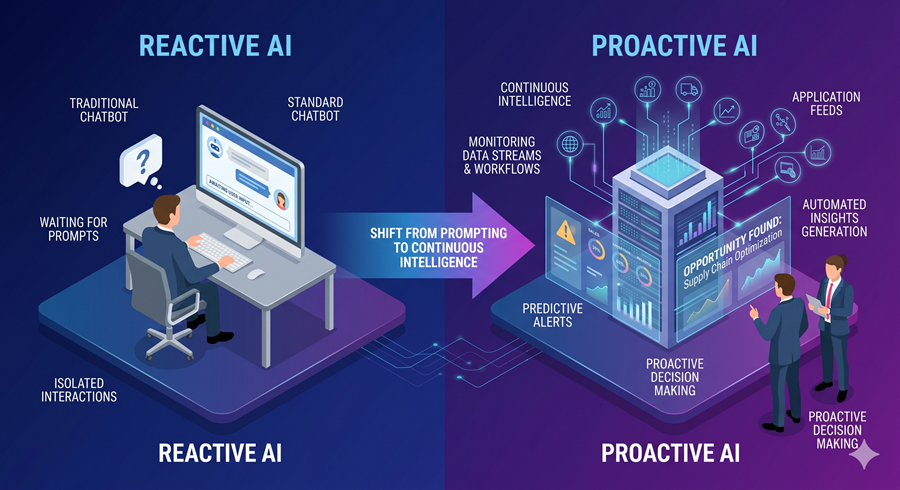

For the last two years, most AI products have behaved the same way: I ask, the model responds. The interaction has been fundamentally reactive. Even the most advanced AI systems still depend on human initiation.

That pattern is starting to change.

The latest wave of AI development is no longer focused only on faster responses or stronger reasoning. The bigger shift is toward proactive systems that monitor context, surface insights automatically, and increasingly act without being prompted every step of the way.

The Rise of Proactive AI

One of the clearest signs of this transition is the emergence of assistants designed to work across connected applications. Instead of functioning like isolated chatbots, these systems pull information from email, Slack, documents, design tools, and internal workflows to generate ongoing briefings and recommendations.

That matters because proactive AI changes the relationship between humans and software. A reactive model waits for instructions. A proactive one watches for patterns, identifies useful context, and decides when information becomes relevant enough to surface on its own.
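To make the distinction concrete, here is a minimal sketch of my own, not any vendor's implementation. The `Signal` type, the `relevance` score, and the `SURFACE_THRESHOLD` value are all hypothetical. I assume some upstream model has already scored each item from a connected app.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    source: str       # hypothetical connected app, e.g. "email" or "slack"
    text: str
    relevance: float  # assumed score in [0, 1] from some upstream model


# Assumed cutoff for "relevant enough to surface"; a real system would tune this.
SURFACE_THRESHOLD = 0.7


def reactive_answer(question: str, signals: list[Signal]) -> list[Signal]:
    """Reactive: nothing is returned until the user asks a question."""
    words = question.lower().split()
    return [s for s in signals if any(w in s.text.lower() for w in words)]


def proactive_briefing(
    signals: list[Signal], threshold: float = SURFACE_THRESHOLD
) -> list[Signal]:
    """Proactive: surface items unprompted, but only above the threshold.

    This only selects what to show; it takes no action on the user's behalf.
    """
    chosen = [s for s in signals if s.relevance >= threshold]
    return sorted(chosen, key=lambda s: s.relevance, reverse=True)


signals = [
    Signal("email", "Contract renewal due Friday", 0.9),
    Signal("slack", "Lunch order thread", 0.1),
    Signal("docs", "Design review notes updated", 0.75),
]

briefing = proactive_briefing(signals)
asked = reactive_answer("contract", signals)
```

In this toy version the only difference is who triggers the work: `reactive_answer` needs a question, while `proactive_briefing` runs on its own and lets the threshold decide what is worth interrupting the user for.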

At the moment, most of these systems are still limited to summaries, alerts, and contextual insights. But strategically, that restraint makes sense. Autonomous action requires trust, and trust is much harder to scale than model intelligence.

Why Speed Is Becoming a Competitive Advantage

At the same time, model providers are doubling down on lightweight systems optimized for daily use. Faster “flash” or “instant” models are becoming increasingly important because most users do not need maximum reasoning power for every task.

The real product challenge is no longer just intelligence. It is responsiveness, efficiency, and usability at scale.

For everyday workflows, fast models reduce friction. They make AI feel less like a special tool and more like infrastructure embedded into routine work. That subtle shift is what drives adoption.

Personalization Is Becoming the Real Product Layer

Another major shift is memory.

AI systems are beginning to retain context across conversations, files, and connected applications in ways that make interactions feel continuous rather than isolated. The important development is not just that models remember information, but that users are gaining visibility into how that memory influences outputs.

That transparency matters, especially in high-stakes fields like law, medicine, and finance, where hallucinations and inaccurate claims carry real consequences.

As models become more reliable, personalization stops feeling cosmetic and starts becoming operational.

AI Agents Are Coming for Structured Knowledge Work

The clearest example of agents moving into structured knowledge work is in financial services.

New AI agent frameworks are now targeting work traditionally handled by junior analysts: pitch preparation, financial modeling, reconciliations, compliance reviews, and market research. These are highly structured workflows built around repetitive information processing, which makes them ideal targets for automation.

I do not think this immediately replaces professionals. But it absolutely changes the value of entry-level work.

For years, tedious operational tasks were treated as training grounds. AI is now compressing that layer of experience. The firms that adopt these systems successfully will likely expect smaller teams to produce significantly more output.

That may become the defining workplace shift of the AI era.
