For a long time, the future of AI looked centralized.

The assumption was simple: larger models would require larger data centers, which meant ordinary users would become increasingly dependent on a handful of companies with enough capital to train and serve frontier systems at scale.

But while attention stayed fixed on cloud AI, a parallel movement quietly accelerated underneath it.

Researchers, open-source developers, and deeply technical hobbyists started building models that could run locally on consumer hardware. What began as an experiment has now become one of the most important structural shifts happening in AI.

The Leak That Changed Everything

The turning point came when an early large language model leaked onto the open internet. Within days, independent developers began adapting it to run outside enterprise infrastructure.

One engineer, originally working in medical physics and cancer-targeting software, moved faster than almost anyone else. Using a lightweight tensor library written in C, he built an inference engine that could run language models directly on CPUs. He paired it with aggressive quantization, which stores model weights at lower numeric precision and dramatically reduces memory requirements.

That single breakthrough changed accessibility overnight.

Suddenly, powerful AI models were no longer limited to massive GPU clusters. They could run on laptops, phones, and eventually even low-power devices.
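To make the memory savings concrete, here is a minimal sketch of block-wise symmetric quantization plus the arithmetic for weight storage. It is an illustration of the general idea, not the engine's actual format: the function names, the 4-bit default, and the 7-billion-parameter figure are all assumptions chosen for the example.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization of one block of floats to small signed integers.

    Illustrative only: real formats differ in block size, rounding, and layout.
    """
    qmax = 2 ** (bits - 1) - 1                    # 7 for 4-bit
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0      # one float scale per block
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers and the block scale."""
    return [q * scale for q in quants]


def weight_memory_gb(n_params, bits_per_weight):
    """Approximate memory needed for the weights alone, in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    scale, quants = quantize_block([0.7, -0.35, 0.0, 0.12])
    print(quants, dequantize_block(scale, quants))

    n_params = 7e9  # hypothetical model size
    for bits in (16, 8, 4):
        print(f"{bits}-bit: {weight_memory_gb(n_params, bits):.1f} GB")
```

Under these assumptions, a model that needs about 14 GB at 16 bits per weight needs about 3.5 GB at 4 bits, which is the difference between a server GPU and an ordinary laptop. Real quantized formats also store a scale for each block, so the effective bits per weight is somewhat higher than the nominal figure.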

Why Apple Accidentally Became Important to AI

One of the more interesting twists is that local AI benefited from technology that was never designed for machine learning in the first place.

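Unified memory architecture, originally optimized to improve video editing workflows, turned out to solve one of AI’s biggest hardware bottlenecks: moving large amounts of data between the CPU and the GPU efficiently. When both processors share a single pool of memory, model weights do not have to be copied back and forth.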

That is why Apple Silicon became unexpectedly popular among local AI developers. High-bandwidth shared memory made it possible to run surprisingly capable models without building expensive multi-GPU systems.
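One way to see why bandwidth matters: when a model generates text one token at a time, it typically has to read most of its weights from memory for every token, so memory bandwidth sets a rough ceiling on generation speed. The sketch below is back-of-the-envelope arithmetic only. The function names, the headroom factor, and every figure are illustrative assumptions, not measurements of any particular chip.

```python
def max_tokens_per_second(model_bytes, bandwidth_bytes_per_s):
    """Rough upper bound on decode speed when every token reads all weights once."""
    return bandwidth_bytes_per_s / model_bytes


def fits_in_memory(model_bytes, memory_bytes, headroom=0.75):
    """Check whether the weights fit, leaving some memory for the OS and caches.

    The 0.75 headroom factor is an assumed safety margin, not a vendor figure.
    """
    return model_bytes <= memory_bytes * headroom


if __name__ == "__main__":
    model_bytes = 4e9  # hypothetical 4 GB quantized model

    # Hypothetical bandwidth figures for two kinds of machine.
    for label, bandwidth in (("narrow memory bus", 50e9), ("wide shared memory", 400e9)):
        ceiling = max_tokens_per_second(model_bytes, bandwidth)
        print(f"{label}: at most ~{ceiling:.1f} tokens/s")

    print(fits_in_memory(40e9, 64e9))  # larger model on a 64 GB machine
```

Actual throughput will be lower than this ceiling because of compute limits, caching behavior, and software overhead, but the ratio explains why a single machine with a large, fast, shared memory pool can stand in for a multi-GPU setup on many workloads.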

For many users, a high-memory desktop now delivers enough performance for real daily AI workloads.

Local Models Are Finally Becoming Useful

What surprised me most is not that local models work. It is how close they now feel to frontier systems in practical tasks.

The newest generation can already handle coding assistance, summarization, reasoning, image understanding, and front-end design with surprising competence. They are still weaker on complex debugging and deep reasoning problems, but the gap is narrowing faster than many people expected.

What matters is not whether local models outperform frontier systems today. They do not.

What matters is that they crossed the threshold from “interesting demo” to “genuinely useful tool.”

That changes adoption completely.

The Hybrid AI Future Is Probably Inevitable

The economics of AI are also pushing the industry in this direction.

Right now, frontier models feel affordable because companies are aggressively subsidizing usage to gain market share. Over the long term, though, routing every workflow through expensive cloud infrastructure becomes difficult to sustain.

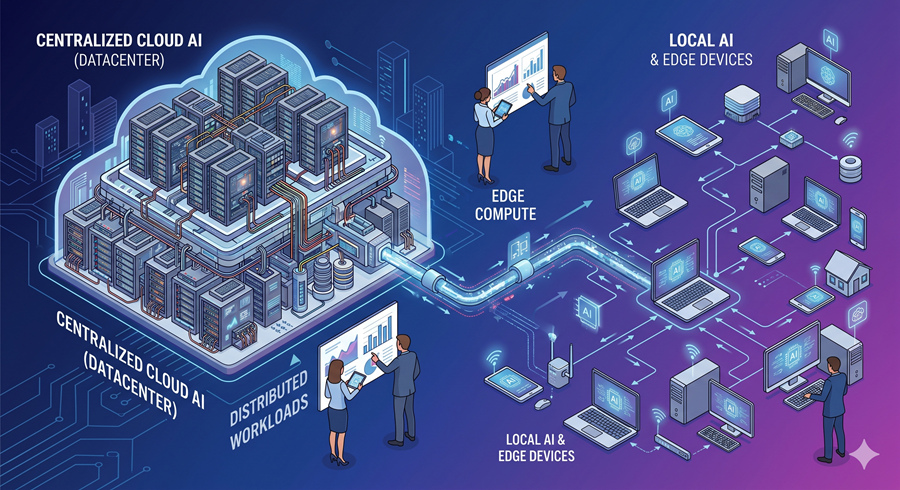

The more practical future is hybrid AI.

Local models will likely handle most repetitive, private, and lightweight tasks, while frontier systems are reserved for difficult reasoning or specialized workloads. That approach lowers costs, improves privacy, and reduces dependence on centralized platforms.
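The split described above can be sketched as a small routing function. This is a hypothetical illustration, not a description of any existing product: the `Task` fields, the difficulty score, and the 0.7 threshold are all assumptions, and a real system would need some way to estimate difficulty in the first place.

```python
from dataclasses import dataclass


@dataclass
class Task:
    prompt: str
    contains_private_data: bool = False
    difficulty: float = 0.0  # hypothetical estimate from 0.0 (trivial) to 1.0 (hard)


def route(task, difficulty_threshold=0.7):
    """Decide where a task should run: "local" or "frontier".

    Private data never leaves the machine; only hard, non-private tasks
    are sent to the more expensive frontier system.
    """
    if task.contains_private_data:
        return "local"
    if task.difficulty >= difficulty_threshold:
        return "frontier"
    return "local"


if __name__ == "__main__":
    tasks = [
        Task("Summarize this meeting transcript", contains_private_data=True, difficulty=0.3),
        Task("Rename variables in this file", difficulty=0.2),
        Task("Find the race condition in this scheduler", difficulty=0.9),
    ]
    for task in tasks:
        print(f"{route(task):8s} <- {task.prompt}")
```

Checking privacy before difficulty is a deliberate choice in this sketch: it means a sensitive task stays local even when a frontier model would likely do better, which trades some quality for the privacy benefit the hybrid approach is meant to provide.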

For the first time in years, AI development feels decentralized again, and that may end up being more important than the models themselves.
