For a while, it felt like frontier AI was becoming impossible to catch.

Every meaningful breakthrough seemed locked behind billion-dollar infrastructure, proprietary systems, and increasingly expensive subscriptions. The assumption was that only the largest labs could afford to push the boundaries of intelligence.

Then DeepSeek 4 arrived with a one-million-token context window, open weights, dramatically lower inference costs, and benchmark performance close to that of frontier systems released just months earlier.

That combination changes the conversation entirely.

The Real Breakthrough Is Memory Efficiency

Most people focus on model size or benchmark scores. I think the more important innovation here is memory architecture.

Large context windows are usually extremely expensive because models must continuously track and retrieve massive amounts of information while generating responses. DeepSeek tackles that problem through layered compression strategies that reduce KV-cache memory requirements by roughly 90%.

The idea is surprisingly intuitive.

First, the model compresses detailed information into lightweight summaries. Then it creates structural abstractions similar to a table of contents. Finally, it uses sparse indexing to identify the most relevant locations when retrieving information later.
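To make those three steps concrete, here is a minimal sketch in plain Python. It is a generic block-sparse retrieval toy, not DeepSeek's actual mechanism: the function names (`build_index`, `sparse_attention`), the use of a simple mean as the "summary", and the block size are all assumptions chosen for illustration.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mean_vector(vectors):
    # Average a list of equal-length vectors component by component.
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def build_index(keys, block_size):
    """Steps 1 and 2 (assumed form): group cached keys into blocks and
    keep one lightweight summary per block, like a table of contents."""
    blocks = [list(range(i, min(i + block_size, len(keys))))
              for i in range(0, len(keys), block_size)]
    summaries = [mean_vector([keys[i] for i in block]) for block in blocks]
    return blocks, summaries

def sparse_attention(query, keys, values, blocks, summaries, top_k):
    """Step 3 (assumed form): score the summaries, then run ordinary
    softmax attention over only the tokens in the top_k blocks."""
    ranked = sorted(range(len(blocks)),
                    key=lambda b: dot(query, summaries[b]), reverse=True)
    selected = sorted(i for b in ranked[:top_k] for i in blocks[b])
    scores = [dot(query, keys[i]) / math.sqrt(len(query)) for i in selected]
    peak = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    output = [sum(w * values[i][d] for w, i in zip(weights, selected)) / total
              for d in range(dim)]
    return output, selected

# Toy data: tokens 0-3 point along one axis, tokens 4-7 along the other.
keys = [[1.0, 0.0]] * 4 + [[0.0, 1.0]] * 4
values = [[float(i)] for i in range(8)]
blocks, summaries = build_index(keys, block_size=4)
output, selected = sparse_attention([0.0, 1.0], keys, values,
                                    blocks, summaries, top_k=1)
print(selected, output)
```

In this toy run the query only ever touches the four tokens in the matching block, which is the point: the cost of a lookup scales with the number of blocks plus the size of the selected blocks, not with the full context length.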

Instead of treating every token equally, the model learns how to prioritize attention hierarchically.

That is what makes million-token context windows economically viable.
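A rough back-of-the-envelope calculation shows why. The sketch below uses the standard sizing for an uncompressed KV cache (one key and one value vector per token, per layer, per KV head). The model shape is hypothetical and picked only to make the arithmetic readable; it is not DeepSeek's real configuration. The 90% figure is the reduction cited above.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Uncompressed KV-cache size: keys plus values (the factor of 2),
    stored for every token in every layer and KV head."""
    return 2 * tokens * layers * kv_heads * head_dim * bytes_per_value

# Hypothetical model shape, for illustration only.
baseline = kv_cache_bytes(tokens=1_000_000, layers=60, kv_heads=8, head_dim=128)

reduction_percent = 90  # the "roughly 90%" reduction cited in the article
compressed = baseline * (100 - reduction_percent) // 100

print(f"baseline:   {baseline / 1e9:.1f} GB")
print(f"compressed: {compressed / 1e9:.1f} GB")
```

Under these assumed numbers, a single million-token session drops from roughly 246 GB of cache to roughly 25 GB, which is the difference between needing several accelerators for one request and fitting it on one.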

Why This Feels Bigger Than Benchmarks

What makes this release important is not just technical performance. It is accessibility.

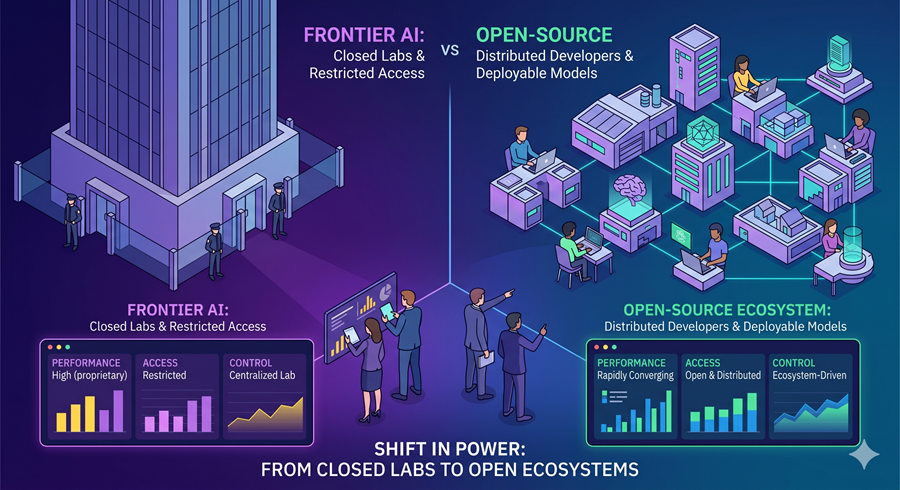

Capabilities that recently required premium frontier systems are now appearing in open models that developers can inspect, modify, and deploy themselves. Long-context reasoning, coding, retrieval, and large-document analysis are no longer exclusive features controlled by a handful of companies.

That matters because open models accelerate experimentation much faster than closed ecosystems. Once powerful capabilities become portable and affordable, innovation spreads outward rather than upward.

Cheap Intelligence Changes User Behavior

There is another shift happening underneath the technology itself: pricing. Inference costs are collapsing.

When models become dramatically cheaper to run, people stop rationing usage. AI moves from “specialized assistant” to a default computing layer. Instead of carefully deciding whether a task warrants AI assistance, users begin to integrate it into ordinary workflows automatically.

That transition is historically important.

The internet changed behavior once bandwidth became cheap enough to feel invisible. AI may follow a similar path once intelligence itself becomes inexpensive enough to disappear into the background.

The Limits Still Matter

At the same time, it is important not to oversimplify what these systems can actually do.

Large context windows still degrade near their limits. Compression introduces tradeoffs. The model remains text-only rather than multimodal. And even the researchers behind these systems admit that some stabilization techniques work better in practice than theory currently explains.

That honesty is refreshing.

AI research often gets presented as inevitable when much of it still depends on empirical discovery and partially understood behavior.

Open AI Is Entering a Different Phase

What excites me most is not any single benchmark or feature.

It is the broader direction.

Open models are no longer trailing years behind frontier labs. They are beginning to compete closely enough that cost, flexibility, privacy, and deployment control start mattering just as much as raw intelligence.

That changes who gets to build with AI, and once that happens, the center of innovation usually shifts faster than anyone expects.
