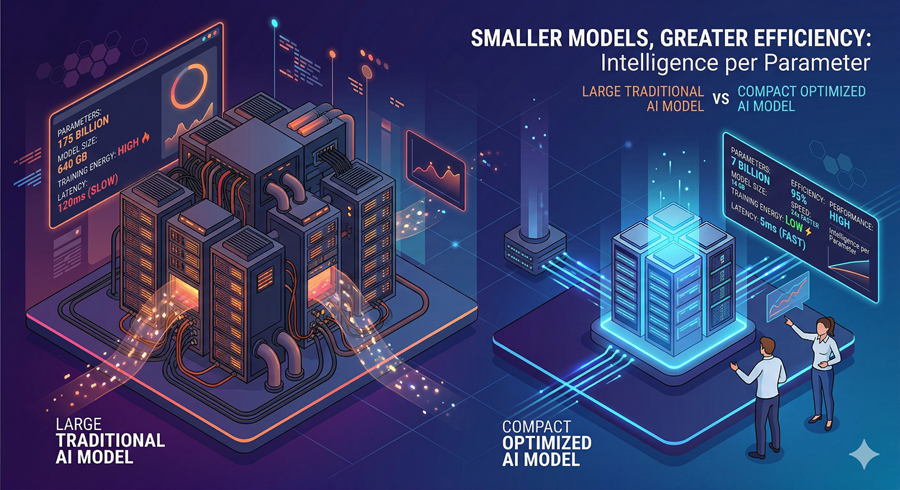

I’m starting to see a pattern that goes against the old assumption that bigger always means better.

New models are not just scaling up. They are getting smaller, faster, and more efficient while still competing with much larger systems. That shift matters because it changes where AI can run.

Instead of being locked in the cloud, these models are moving onto devices. Phones, local machines, edge hardware. And once that happens, control, cost, and accessibility all change with it.

The real breakthrough is not size. It is intelligence per parameter.

Why Open Models Are Becoming Strategic

The move toward more open models feels deliberate.

Releasing models with fewer restrictions is not just about research. It is about distribution. When developers can freely modify, deploy, and commercialize models, adoption accelerates fast.

That creates a network effect: more experimentation, more fine-tuning, more real-world use cases. It also shifts power slightly. Instead of everything flowing through a few closed systems, capability starts spreading outward into smaller teams and independent builders.

Open access is becoming a competitive strategy, not just a philosophical one.

From Assistants to Multi-Agent Workspaces

At the same time, the way we interact with AI is changing.

Single chat interfaces are starting to feel limited. Real work rarely happens in one thread. It involves multiple paths, comparisons, and parallel tasks.

That is why multi-agent systems are gaining traction. Instead of one assistant doing everything, multiple agents handle different parts of the problem at once.

I can see how this changes the workflow. One agent writes code, another tests it, and another explores alternatives. The user is no longer just prompting. They are managing a system of execution.

This feels closer to delegation than interaction.

The Hidden Layer of Competition

What’s happening behind the scenes might be even more important.

Companies are testing multiple model families quietly, iterating faster than public releases suggest. What we see is only a snapshot of a much larger pipeline.

That creates an illusion of stability. In reality, the landscape is constantly shifting underneath.

It also explains the pressure. Even major players are experimenting aggressively, adjusting timelines, and reconsidering strategies to stay competitive.

The race is no longer visible in full. Most of it is happening out of sight.

Small Models, Big Capabilities

One of the most surprising developments is how smaller models are starting to outperform larger ones in specific domains.

Especially in vision tasks, tighter integration and better architecture can beat brute-force scale. Instead of stitching multiple systems together, newer approaches handle perception and reasoning in a more unified way.

That leads to better grounding, stronger accuracy, and faster performance. It also reinforces the same idea: progress is no longer just about building bigger systems. It is about building smarter ones.

Where This Leads

Taken together, these trends point to a deeper shift in how AI evolves.

Models are getting smaller but more capable. Systems are becoming multi-agent instead of single-threaded. And development is happening both in the open and behind closed doors at the same time.

The result is a landscape that is harder to track but more powerful in practice.

AI is no longer just improving in one direction. It is expanding in several areas at once.
