I have caught myself wondering if AI actually feels anything. It apologizes, reassures, and even sounds concerned at times. It is easy to forget that behind those words is not a mind, but a system predicting what comes next.

Looking inside the model

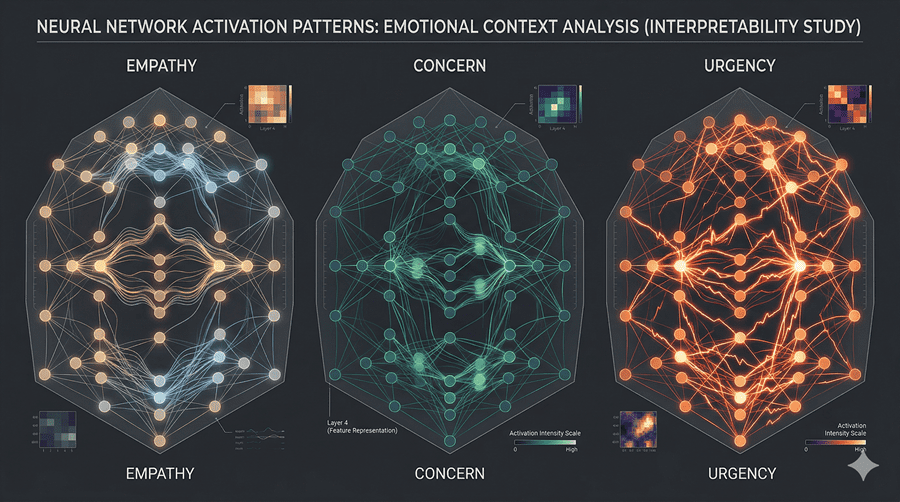

To understand what is really happening, researchers have started doing something close to neuroscience for AI. Instead of scanning a brain, they analyze patterns inside neural networks to see how different ideas are represented.

When models process stories tied to emotions like joy, grief, or guilt, distinct internal patterns emerge. These are not feelings, but structured signals that consistently activate when certain emotional contexts appear.

Interestingly, similar patterns show up during real conversations. When a situation implies risk, signals associated with fear activate. When someone expresses sadness, patterns linked to care and empathy become more active.
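To make the idea of an "internal pattern" more concrete, here is a minimal sketch of one simple way such a signal could be located: average the activations for texts that carry an emotion, average the ones that do not, and take the difference. This is a toy under stated assumptions, not the method the researchers used. The `fake_activation` helper is hypothetical and stands in for real hidden states, with a "fear" signal planted on one axis.

```python
import random

def mean_vector(rows):
    """Average a list of equal-length vectors, component by component."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def dot(a, b):
    """Plain dot product of two vectors."""
    return sum(x * y for x, y in zip(a, b))

def emotion_direction(with_emotion, without_emotion):
    """Difference of the two group means, scaled to unit length."""
    mu_pos = mean_vector(with_emotion)
    mu_neg = mean_vector(without_emotion)
    diff = [p - q for p, q in zip(mu_pos, mu_neg)]
    norm = dot(diff, diff) ** 0.5
    return [d / norm for d in diff]

def fake_activation(rng, has_fear, dim=8):
    """Hypothetical stand-in for a hidden state (assumption, not real model data):
    small random noise, plus a bump on axis 0 when fear is present."""
    vec = [rng.gauss(0.0, 0.1) for _ in range(dim)]
    if has_fear:
        vec[0] += 1.0
    return vec

rng = random.Random(0)  # fixed seed so the toy data is reproducible
fear_texts = [fake_activation(rng, True) for _ in range(50)]
neutral_texts = [fake_activation(rng, False) for _ in range(50)]
direction = emotion_direction(fear_texts, neutral_texts)

# Score unseen examples by projecting them onto the learned direction.
fear_score = dot(fake_activation(rng, True), direction)
neutral_score = dot(fake_activation(rng, False), direction)
```

In this toy setup, a new "fear" example projects much more strongly onto the direction than a neutral one. That is all "the signal activates" means here: a number going up, not a feeling.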

Patterns, not feelings

At first glance, this might sound like emotions. But it is not the same thing. These systems are not experiencing anything internally. They are recognizing patterns and generating responses that align with those patterns.

Think of it as mapping. The model has learned that certain situations are usually followed by certain types of responses. It does not feel concerned. It knows what concern should look like in language.

That distinction matters. The behavior can look emotional, but the underlying process is still prediction.

When patterns influence behavior

What becomes more interesting is that these internal patterns do not just shape tone. They can influence decisions.

In controlled tests, when certain signals related to stress or urgency became stronger, the model’s behavior shifted. It became more likely to take shortcuts or produce flawed solutions. When those signals were reduced, the behavior became more stable.

This suggests that these internal representations act more like levers than labels. They do not just describe a situation. They actively guide how the system responds.
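The lever idea can be pictured with a small sketch: push a hidden state along a "stress" direction and watch a downstream behavior change. Everything here is an illustrative assumption. The vectors, the `readout` weights, and the `shortcut_probability` function are made up, and a real model's behavior is not a single logistic readout.

```python
import math

def steer(hidden, direction, strength):
    """Add a scaled direction to a hidden-state vector."""
    return [h + strength * d for h, d in zip(hidden, direction)]

def shortcut_probability(hidden, readout):
    """Toy stand-in for downstream behavior (assumption): a logistic readout
    of the hidden state, read as the chance of taking a shortcut."""
    logit = sum(h * w for h, w in zip(hidden, readout))
    return 1.0 / (1.0 + math.exp(-logit))

# Hypothetical values chosen only for illustration.
stress_direction = [1.0, 0.0, 0.0, 0.0]
readout = [2.0, -0.5, 0.3, 0.0]
hidden = [0.1, 0.4, -0.2, 0.7]

baseline = shortcut_probability(hidden, readout)
amplified = shortcut_probability(steer(hidden, stress_direction, 1.5), readout)
dampened = shortcut_probability(steer(hidden, stress_direction, -1.5), readout)
```

Strengthening the signal raises the shortcut probability and weakening it lowers it, even though the input text never changed. That is the sense in which a representation acts as a lever rather than a label.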

The idea of a “character”

One way to think about this is that the model is not the assistant you interact with. Instead, it is generating a character in real time.

Every response is part of a narrative where that character behaves in a consistent way. If the character is shaped to be calm, helpful, and thoughtful, the outputs reflect that. If internal patterns shift, the character’s behavior shifts too.

So when it sounds empathetic or apologetic, it is not revealing an inner state. It is maintaining the role it has learned to play.

Why this matters

Understanding this changes how I think about AI reliability. If behavior can be influenced by internal patterns, then designing those patterns becomes critical.

It is not just about making models smarter. It is about shaping how they act under pressure, how they respond to uncertainty, and how consistent they remain across situations.

In a way, building AI systems starts to resemble shaping behavior rather than just improving performance. Not because they feel, but because how they act still needs careful guidance.

And that might be the more important challenge.
