This week did not feel loud, but it felt important. Not because of one breakthrough, but because four separate signals pointed in the same direction. AI is not just improving. It is becoming more controlled, more integrated, and more consequential.

A model too powerful to release freely

What stood out first was an accidental leak revealing a new class of AI model. More capable than current top-tier systems, it is already being tested but not widely released.

The caution is telling. The concern is not performance, but impact. Systems like this can identify vulnerabilities faster than defenders can respond. That makes this a real-world risk, not just a technical milestone.

So instead of scaling access, the strategy is selective exposure. Let a small group prepare before expanding availability. That alone signals how seriously these capabilities are being taken.

Predicting the human brain

At the same time, another effort is pushing in a very different direction. Instead of generating outputs, the goal is to understand perception itself.

By combining text, video, and audio into a single system, researchers are mapping how the brain responds to real-world stimuli. What makes this notable is not just accuracy, but generalization.

The system can estimate responses for people it has never seen before. In some cases, it captures patterns more reliably than individual measurements. That suggests we are moving toward models that do not just process information, but approximate how humans interpret it.

Agents that actually finish the job

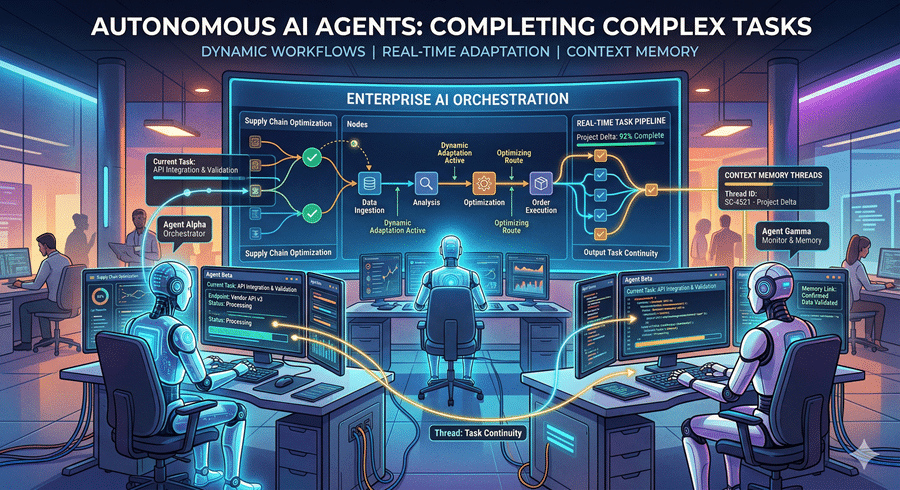

There is also a shift happening in how AI agents are built. For a while, conversational ability defined progress. But real-world usefulness depends on execution.

New approaches focus on continuity. Instead of restarting when tasks change, the system maintains context and adapts mid-process. It can reorder steps, integrate new instructions, and keep moving without losing track.

What makes this more meaningful is the addition of learning loops. Failures are analyzed, patterns are updated, and future attempts improve. This moves agents closer to something that evolves through use rather than staying static.
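To make the idea concrete, here is a minimal sketch of an agent loop that keeps its context when the plan changes and records failures for later attempts. It is an illustration only, not any vendor's design: the `Agent` class, `add_instruction`, `run`, and the demo step names are all hypothetical, and a simple failure counter with a retry stands in for a real learning loop.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Hypothetical agent that keeps context while its task list changes."""
    steps: deque = field(default_factory=deque)   # pending steps
    context: list = field(default_factory=list)   # completed steps, never reset
    lessons: dict = field(default_factory=dict)   # failure count per step

    def add_instruction(self, step, urgent=False):
        # New instructions join the existing plan instead of restarting it.
        if urgent:
            self.steps.appendleft(step)
        else:
            self.steps.append(step)

    def run(self, execute, max_retries=1):
        while self.steps:
            step = self.steps.popleft()
            try:
                execute(step, self)
                self.context.append(step)
            except Exception:
                # Record the failure and, within the retry budget, try again later.
                self.lessons[step] = self.lessons.get(step, 0) + 1
                if self.lessons[step] <= max_retries:
                    self.steps.append(step)
        return self.context


# Demo executor (assumed behavior): "fetch" injects an urgent step mid-run,
# and "upload" fails on its first attempt only.
attempts = {}

def demo_execute(step, agent):
    attempts[step] = attempts.get(step, 0) + 1
    if step == "fetch":
        agent.add_instruction("validate", urgent=True)
    if step == "upload" and attempts[step] == 1:
        raise RuntimeError("transient failure")


agent = Agent()
for name in ["fetch", "upload", "report"]:
    agent.add_instruction(name)

result = agent.run(demo_execute)
print(result)         # ['fetch', 'validate', 'report', 'upload']
print(agent.lessons)  # {'upload': 1}
```

The point of the sketch is the shape, not the details: the plan is a queue that can be reordered while it runs, completed work is never thrown away, and each failure leaves a trace that later attempts can use.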

The hardware shift behind the scenes

A quieter but equally important change supports all of this. Hardware is being redesigned for how AI actually operates.

GPUs dominate training, but running AI systems means handling sequences of actions efficiently. That is where CPUs are being reimagined, optimized for multi-step workflows rather than single outputs.

There is also a strategic layer. Building hardware in-house reduces dependency on outside suppliers and stabilizes supply in a constrained market. Performance matters, but control matters just as much.

A more deliberate phase of AI

What connects all of this is a shift in mindset. More power, but more restraint. More capability, but more focus on real-world consequences.

AI is no longer just about scaling models or chasing benchmarks. It is about managing impact, improving execution, and building systems that can operate reliably under pressure.

This does not feel like just another step forward. It feels like a transition into a more deliberate phase.
