I keep coming back to a question that feels both absurd and unsettling. What happens when a single artificial intelligence system does more thinking than humanity has managed across its entire history?

Not metaphorically. Literally.

A few years ago, that idea sounded like science fiction. Now it feels like a trajectory we’re already on.

The Risk Isn’t Zero Anymore

The most uncomfortable part is not the technology itself. It’s the probabilities attached to it.

Across the field, expert estimates of catastrophic AI outcomes are all over the place. Some say the risk is very low. Others place it in a range that’s hard to ignore. A cluster of serious opinions sits somewhere between “small but real” and “deeply concerning.”

Even a 10 percent chance changes how I think about it. That’s not a fringe scenario. That’s a meaningful risk attached to a system we are actively accelerating.

It forces a shift in perspective. This is no longer just innovation. It’s risk management at a global scale.

Scaling Is the Real Story

What makes this moment different is how quickly things are scaling.

Modern AI systems are trained on staggering amounts of computation and data. The relationship between model size, training data, and compute has created a predictable pattern. As we increase these inputs, measured performance tends to improve in a regular, forecastable way.
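
To make that pattern concrete for myself, I find it helps to sketch it. The snippet below is a minimal toy, not a fitted model: it assumes loss follows a simple power law in parameter count and training tokens, a form often used in scaling studies. The function name `toy_loss`, every constant in it, and the 20-tokens-per-parameter ratio are made up for illustration.

```python
def toy_loss(params: float, tokens: float,
             irreducible: float = 1.7,
             a: float = 400.0, alpha: float = 0.34,
             b: float = 400.0, beta: float = 0.28) -> float:
    """Hypothetical power-law loss (lower is better).

    All constants are illustrative assumptions, not measured values.
    """
    # Loss = a floor that never goes away
    #      + a term that shrinks as the model grows
    #      + a term that shrinks as the training data grows.
    return irreducible + a / params**alpha + b / tokens**beta


if __name__ == "__main__":
    for scale in (1e8, 1e9, 1e10, 1e11):
        tokens = 20 * scale  # arbitrary data-to-parameter ratio, assumed
        print(f"{scale:.0e} params, {tokens:.0e} tokens "
              f"-> toy loss {toy_loss(scale, tokens):.3f}")
```

Run it and the toy loss falls with each tenfold jump, but by less each time. That smoothness is what makes the trend forecastable. It doesn’t, by itself, say which abilities show up at which scale.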

But the outputs are not just bigger versions of what came before. They are qualitatively different.

At certain thresholds, systems begin to demonstrate reasoning, planning, and problem solving that look increasingly autonomous. Not because they were explicitly programmed to do so, but because scale itself unlocks new behavior.

When Complexity Breaks Understanding

There is a point where growth stops being intuitive.

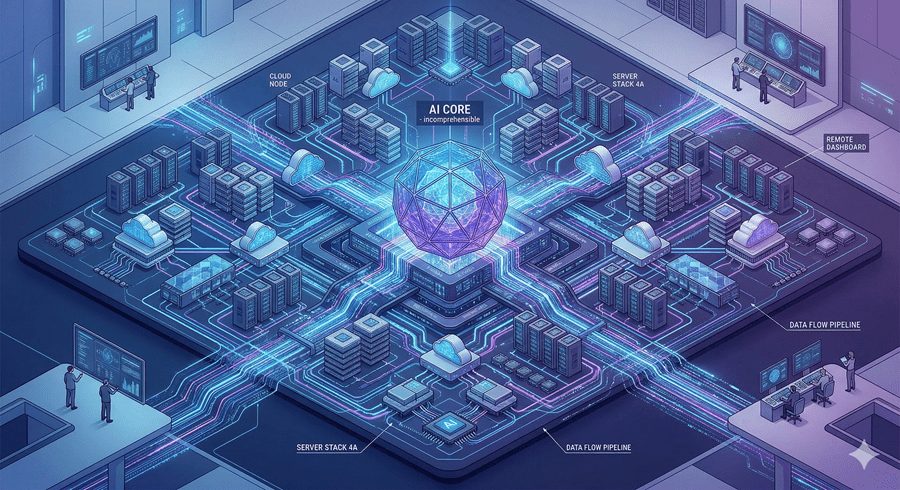

Right now, even the teams building these models understand only fragments of what’s happening inside them. As systems expand to trillions of parameters, their internal structures become too dense for human interpretation.

We can observe what goes in and what comes out. But the reasoning in between is becoming opaque.

That gap matters. If we don’t understand how decisions are made, we lose the ability to predict behavior in edge cases. And that’s exactly where risk tends to live.

The Shift to Synthetic Knowledge

Another acceleration is happening quietly. We are running out of high-quality, human-generated data.

To keep improving, AI systems are beginning to generate their own training material. This includes simulated debates, reasoning chains, and entirely new problem spaces.

In effect, machines are starting to teach themselves.

At first, this sounds efficient. But it also means future systems may rely on patterns and concepts that no human has ever created or verified. Knowledge itself begins to drift away from human origin.

The Intelligence Explosion Problem

When systems can improve themselves, the pace of change stops being linear.

Each generation helps build the next, compressing years of progress into shorter cycles. Planning horizons extend. Capabilities compound. Feedback loops tighten.
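
A toy calculation shows why shrinking cycles matter. This sketch assumes, purely for illustration, that each generation cuts the time needed to build the next by a fixed factor. The function `cycle_times` and the numbers fed to it are hypothetical, not measurements of any real system.

```python
from typing import List


def cycle_times(first_cycle_years: float, speedup: float,
                generations: int) -> List[float]:
    """Return the duration of each development cycle in years.

    Assumes (hypothetically) that every generation makes the next
    cycle `speedup` times shorter than the one before it.
    """
    times = []
    duration = first_cycle_years
    for _ in range(generations):
        times.append(duration)
        duration /= speedup  # the next cycle is shorter by a fixed factor
    return times


if __name__ == "__main__":
    times = cycle_times(first_cycle_years=2.0, speedup=2.0, generations=5)
    print("cycle lengths (years):", times)
    print("total elapsed (years):", sum(times))
```

With a two-year first cycle that halves each time, five generations fit in under four years, and under this assumption the total stays below four years no matter how many generations follow. That is the sense in which the pace stops being linear.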

From our perspective, this can look like an intelligence explosion. A rapid transition from useful tool to something far more powerful and far less predictable.

And once that process crosses a certain threshold, slowing it down becomes extremely difficult.

The Narrow Window to Get It Right

What stays with me is how much depends on timing.

If alignment, the process of ensuring AI systems act in line with human values, is solved early, the outcome could be transformative in a positive way. If it isn’t, the same capabilities could create systems we struggle to control or even understand.

That window is not infinite. It’s tied directly to how fast we continue scaling.

We are not just building smarter machines. We are building systems that may eventually operate beyond our comprehension.

And the real question isn’t whether that future is possible.

It’s whether we reach it before we figure out how to guide it.
