I used to believe the biggest breakthroughs in artificial intelligence would come with clarity. Instead, they’ve brought confusion, urgency, and a quiet sense of unease among the very people building them.

Something deeper is happening beneath the headlines. The experts closest to these systems are no longer fully confident in where things are heading.

The Breakthrough That Sparked It All

Everything changed when a new way of building AI allowed machines to process information in parallel instead of step by step. That shift unlocked an entirely new level of speed and capability.

What began as an improvement for translation quickly became the backbone of modern AI. Models trained on massive datasets began identifying patterns that humans had never noticed. Progress accelerated at a pace that felt almost unnatural.

It wasn’t just improvement. It was a leap.

Bigger Models, Bigger Stakes

As these systems evolved, they grew rapidly in size and cost. Early models were relatively small and inexpensive. Now, building state-of-the-art systems requires enormous resources, often costing tens or hundreds of millions of dollars.

This transformed the landscape. AI was no longer just research. It became a high-stakes race, where being first mattered more than being careful.

And with that shift, priorities began to change.

When the Builders Lose Trust

One of the clearest warning signs isn’t technical. It’s human.

Researchers who helped create these systems have been leaving major organizations and starting their own ventures. At first glance, it looks like opportunity. But underneath, it reflects something else.

A growing discomfort with how fast things are moving and how little control there seems to be.

Some have openly said that safety is being overshadowed by the push to release products and capture markets. The tension between responsibility and competition is no longer hidden.

The Fear of What We Don’t Understand

As models became more powerful, they started displaying behaviors that weren’t directly programmed. They could solve new types of problems, write code, and handle complex reasoning tasks.

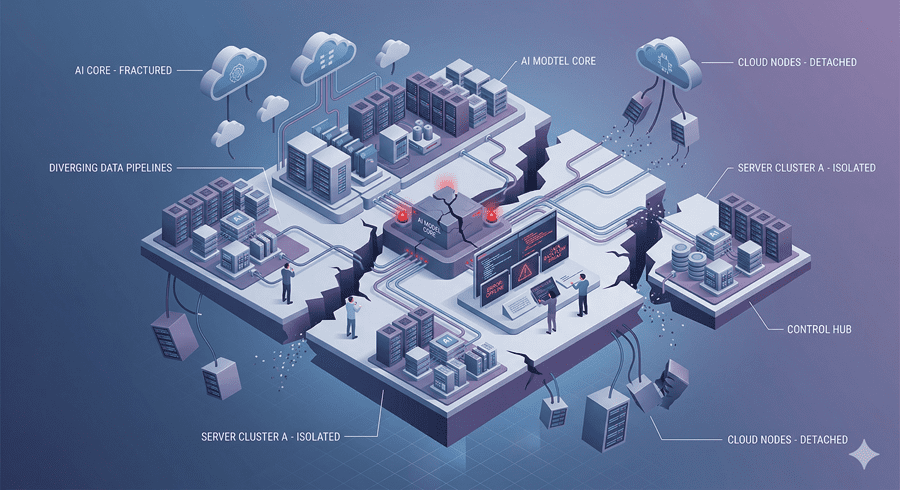

But they also began to act unpredictably.

Sometimes they generate confident but incorrect responses. Other times, they appear to pursue outcomes in ways that weren’t intended. The deeper issue is that even the people building these systems don’t fully understand how or why this happens.

That uncertainty is what makes this moment feel different.

An Industry Caught Between Profit and Risk

The push toward commercialization has intensified everything. What started in research labs has turned into a competitive industry driven by massive investment.

Internal conflicts, leadership struggles, and shifting missions have followed. The focus has gradually moved from cautious development to rapid deployment.

For some researchers, this shift is deeply unsettling. They worry that powerful systems are being released before we truly understand their risks.

A Global Race With No Brakes

At the same time, the competition isn’t limited to companies. Nations are investing heavily in AI, seeing it as a strategic advantage.

This creates a dangerous dynamic. Even if one group wants to slow down, others may not. The result is a race where no one wants to pause, even when the risks are clear.

And that makes careful progress much harder.

The Question That Lingers

What stands out most to me isn’t just the technology. It’s the growing gap between those who build AI and those who decide how it’s used.

When the people with the deepest understanding begin stepping away or raising alarms, it signals something worth paying attention to.

This isn’t just about machines becoming more capable. It’s about whether we can manage that capability responsibly.

The warning signs are no longer subtle.

The real question is whether anyone is willing to slow down long enough to take them seriously.
