For decades, we’ve been told two very different stories about artificial intelligence. One describes it as a super-intelligent force that may one day outsmart humans. The other dismisses it as nothing more than a glorified calculator. But the truth lies somewhere in between.

AI is neither magic nor truly “thinking” in the human sense.

So how does a system made of code learn to drive a car, write poetry, or diagnose diseases? The answer is surprisingly simple. At its core, AI is based on pattern recognition, something humans have always done and eventually learned to automate.

From Rules to Learning

In traditional programming, computers followed strict rules. For example, to identify a cat, a programmer might write: if it has whiskers, fur, and pointed ears, then it’s a cat. But this approach quickly fails when faced with real-world variation, like hairless cats or unusual features.
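To make the limitation concrete, here is a minimal sketch of the rule-based approach. The function name `is_cat_by_rules` and the feature keys are hypothetical, chosen only to mirror the whiskers, fur, and pointed-ears rule described above.

```python
def is_cat_by_rules(animal: dict) -> bool:
    """Hand-written rule: whiskers AND fur AND pointed ears means cat."""
    return bool(
        animal.get("whiskers", False)
        and animal.get("fur", False)
        and animal.get("pointed_ears", False)
    )

# Hypothetical feature descriptions for two real kinds of cat.
tabby = {"whiskers": True, "fur": True, "pointed_ears": True}
sphynx = {"whiskers": True, "fur": False, "pointed_ears": True}  # hairless breed

print(is_cat_by_rules(tabby))   # True
print(is_cat_by_rules(sphynx))  # False, even though a sphynx is a cat
```

Patching the rule for hairless cats is easy, but every new exception needs another patch, which is exactly the problem.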

It became clear that writing rules for every possibility was impossible.

This led to machine learning, where instead of giving rules, we give examples. Think of teaching a child: you show many pictures of cats and say “cat,” then show dogs and say “not cat.” Over time, the child recognizes patterns.

AI works in a similar way. It studies millions of examples and learns patterns on its own, often patterns too complex for humans to describe.
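As a rough illustration of learning from examples instead of rules, the sketch below averages the labeled examples for each class and assigns a new item to the closest average (a nearest-centroid classifier). This is one of the simplest possible learning methods, not what large AI systems use, and the two features (body weight in kg and an "ear pointiness" score) are invented for the example.

```python
def fit_centroids(examples):
    """Average the feature vectors of each label."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def predict(centroids, features):
    """Return the label whose average example is closest to the input."""
    return min(centroids, key=lambda label: squared_distance(centroids[label], features))

# Made-up training data: (weight_kg, ear_pointiness) -> label
examples = [
    ((4.0, 0.9), "cat"),
    ((3.5, 0.8), "cat"),
    ((5.0, 0.95), "cat"),
    ((20.0, 0.3), "not cat"),
    ((30.0, 0.2), "not cat"),
    ((12.0, 0.4), "not cat"),
]
centroids = fit_centroids(examples)

print(predict(centroids, (4.5, 0.85)))   # cat
print(predict(centroids, (25.0, 0.25)))  # not cat
```

No rule about weight or ears was ever written down; the boundary between the two classes comes entirely from the examples.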

Neural Networks: Layers of Understanding

Many modern AI systems use something called a neural network, which can be thought of as layers of filters.

For example, when recognizing a handwritten number, the first layer looks for simple features like lines and curves. The next layer combines these into shapes, and the final layer identifies the number.

This process, called deep learning, is not magic. Each layer performs simple arithmetic on the output of the layer before it, and stacking many layers gradually builds complex recognition from basic inputs.
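The layered idea can be sketched in a few lines. The toy network below takes a 2x2 image as four pixel values; its first layer responds to a lit top row or a lit left column, and its second layer turns those responses into scores for two classes. The weights are hand-picked for illustration (a real network would learn them), and all function names are hypothetical.

```python
def relu(values):
    """Keep positive signals, zero out negative ones."""
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    """One layer: each output is a weighted sum of all inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]

def forward(pixels, layers):
    """Pass the input through every layer in order and return class scores."""
    activations = pixels
    for weights, biases in layers[:-1]:
        activations = relu(dense(activations, weights, biases))
    weights, biases = layers[-1]
    return dense(activations, weights, biases)

# Pixel order: top-left, top-right, bottom-left, bottom-right.
layers = [
    # Layer 1: unit 0 sums the top row, unit 1 sums the left column.
    ([[1.0, 1.0, 0.0, 0.0], [1.0, 0.0, 1.0, 0.0]], [0.0, 0.0]),
    # Layer 2: class 0 = "horizontal bar", class 1 = "vertical bar".
    ([[1.0, -1.0], [-1.0, 1.0]], [0.0, 0.0]),
]

def classify(pixels):
    scores = forward(pixels, layers)
    return scores.index(max(scores))

print(classify([1.0, 1.0, 0.0, 0.0]))  # 0 (horizontal bar)
print(classify([1.0, 0.0, 1.0, 0.0]))  # 1 (vertical bar)
```

Nothing in any single layer is sophisticated: it is multiplication, addition, and a cutoff at zero. The recognition comes from stacking them.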

Learning Through Mistakes

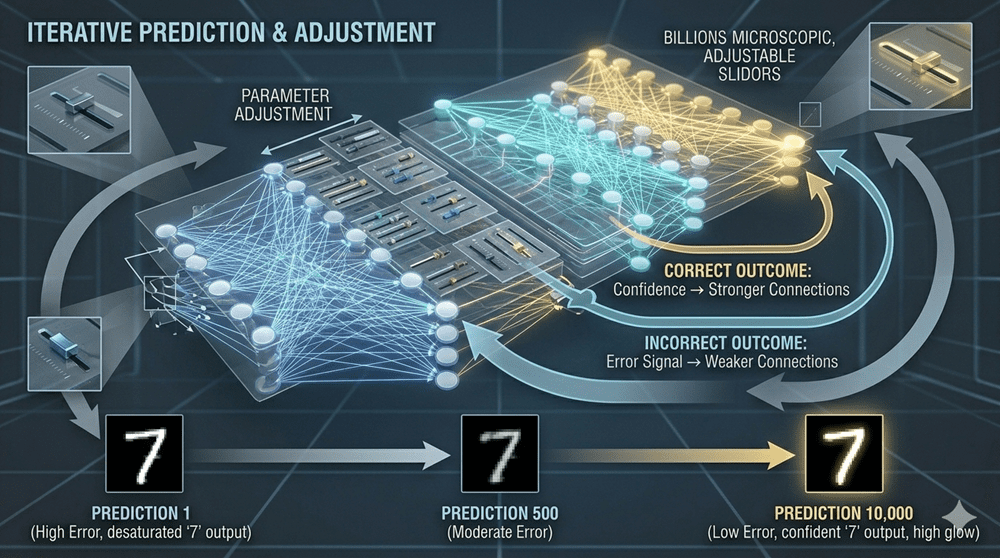

But how does AI improve?

It learns through feedback, similar to a “hot or cold” game. When it makes a wrong guess, the system adjusts internal settings called weights. Imagine billions of tiny knobs being slightly adjusted each time the AI is wrong.

After millions of corrections, the system becomes highly accurate. It doesn’t truly understand a “cat”; it just becomes extremely good at recognizing patterns associated with one.
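The knob-turning loop can be shown with a single knob. In this sketch the "model" is just `guess = weight * x`, and the examples follow the hidden pattern "the answer is twice the input". After each wrong guess, the weight is nudged in the direction that shrinks the error, a basic form of gradient descent. The learning rate and epoch count are arbitrary values that happen to work for this toy data.

```python
def train(examples, learning_rate=0.05, epochs=200):
    weight = 0.0  # the single "knob", starting from an arbitrary value
    for _ in range(epochs):
        for x, target in examples:
            guess = weight * x
            error = guess - target  # positive means too high, negative too low
            # Gradient of the squared error (error ** 2) with respect to
            # weight is 2 * error * x; step a little in the opposite direction.
            weight -= learning_rate * 2 * error * x
    return weight

# Made-up data following the pattern target = 2 * x.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
weight = train(examples)

print(round(weight, 4))      # 2.0
print(round(weight * 10, 2)) # 20.0, a prediction for an input never seen in training
```

The program was never told "multiply by two". It arrived at that weight purely by being corrected, which is the same principle a large network applies to billions of weights at once.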

Generative AI: Predicting, Not Thinking

Modern tools like ChatGPT use a slightly different approach.

They act like advanced autocomplete systems. Given a sentence like “The quick brown fox jumps over the,” they predict the most likely next word based on patterns in massive datasets.
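A heavily simplified version of this idea is a bigram model, which counts which word most often follows each word in some text. Tools like ChatGPT use large neural networks and far more context than a single preceding word, so treat this only as a sketch of the "predict the next word from patterns" principle. The tiny corpus and function names are invented for the example.

```python
from collections import Counter, defaultdict

def build_bigram_counts(text):
    """For every word, count the words that immediately follow it."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = following.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Made-up training text standing in for a "massive dataset".
corpus = (
    "the quick brown fox jumps over the lazy dog "
    "the lazy dog sleeps while the lazy cat watches"
)
following = build_bigram_counts(corpus)

print(predict_next(following, "the"))      # lazy
print(predict_next(following, "lazy"))     # dog
print(predict_next(following, "unicorn"))  # None
```

The model answers "lazy" after "the" only because that pairing was most common in its training text, not because it knows anything about foxes or dogs.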

This means AI isn’t stating facts or expressing thoughts. It’s predicting what humans are most likely to say next.

A Tool, Not a Mind

AI may seem mysterious, but it is built on logic, repetition, and human-generated data. It doesn’t possess understanding or wisdom; it reflects patterns learned from us.

In the end, AI is not an alien intelligence. It is a powerful tool, made complex simply by repeating simple processes billions of times.