For years, artificial intelligence has amazed us with chatbots, image generators, and voice assistants. These tools can write essays, generate artwork, and answer complex questions in seconds. Yet they all share a major limitation.

They do not truly understand the world around them.

Most AI systems operate within a narrow digital environment. They respond to prompts containing text, images, or audio, but they do not observe or interact with real-world situations as those situations unfold. That limitation may finally be starting to change.

A new class of AI systems, built on multimodal models such as Google's Gemini, is pushing artificial intelligence beyond prompt-and-response exchanges and toward real-world awareness.

The implications could be significant.

From Reactive AI to Continuous Awareness

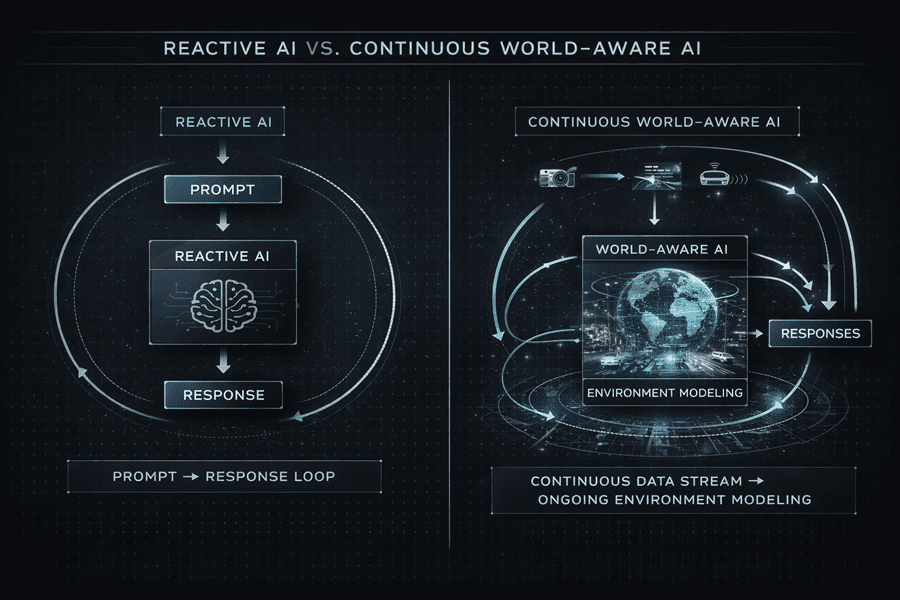

Traditional AI systems behave somewhat like advanced search engines. They wait for a prompt, analyze the request, and generate an answer.

World-aware AI changes this pattern.

Instead of reacting only to prompts, the system can continuously observe its surroundings through cameras, microphones, and other inputs. It can track objects, understand motion, and maintain awareness of events over time.

This allows the AI to create an internal model of its environment. Rather than simply identifying objects, it can understand relationships between them and detect how situations change over time.
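To make the idea of an internal model concrete, here is a minimal sketch in Python. It is a toy illustration, not how Gemini or any real system works: the `WorldModel` class, its method names, and the single horizontal coordinate per object are all assumptions made for this example. It keeps a rolling window of recent observations and derives simple "left of" relationships from the latest one.

```python
from collections import deque


class WorldModel:
    """Hypothetical toy world model: a rolling window of observations."""

    def __init__(self, history: int = 100):
        # deque(maxlen=...) silently drops the oldest frame when full.
        self.frames = deque(maxlen=history)

    def ingest(self, timestamp: float, objects: dict[str, float]) -> None:
        """Store one observation: object name -> horizontal position."""
        self.frames.append((timestamp, dict(objects)))

    def relations(self) -> list[str]:
        """Derive left-of relations between neighbors in the latest frame."""
        if not self.frames:
            return []
        _, objects = self.frames[-1]
        ordered = sorted(objects, key=objects.get)  # names, left to right
        return [f"{a} is left of {b}" for a, b in zip(ordered, ordered[1:])]


model = WorldModel(history=2)
model.ingest(0.0, {"cup": 0.2, "laptop": 0.8})
model.ingest(1.0, {"cup": 0.2, "laptop": 0.8, "phone": 0.5})
print(model.relations())
# ['cup is left of phone', 'phone is left of laptop']
```

A real system would work with learned representations rather than hand-written rules, but the structure is the same in spirit: observations accumulate over time, and relationships are read off the stored state instead of a single prompt.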

Why Memory Changes Everything

One of the key elements behind this shift is persistent memory.

Most current AI tools forget information after a conversation ends. Each interaction effectively starts from zero. World-aware systems, however, can remember earlier observations and monitor how things evolve.

For example, the AI might recognize where objects are placed in a room, later notice when something moves, and explain what has changed.
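The room example can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the `SceneMemory` class, the 2D coordinates, and the `tolerance` threshold are all hypothetical, and the object detections are assumed to come from some upstream vision model that is not shown.

```python
from dataclasses import dataclass, field

Position = tuple[float, float]


@dataclass
class SceneMemory:
    """Hypothetical toy memory: last known position of each object."""

    positions: dict[str, Position] = field(default_factory=dict)

    def observe(self, detections: dict[str, Position],
                tolerance: float = 0.1) -> list[str]:
        """Compare new detections with memory, report changes, update memory."""
        changes = []
        for name, pos in detections.items():
            old = self.positions.get(name)
            if old is None:
                changes.append(f"{name} appeared at {pos}")
            elif (abs(pos[0] - old[0]) > tolerance
                  or abs(pos[1] - old[1]) > tolerance):
                changes.append(f"{name} moved from {old} to {pos}")
        for name in self.positions:
            if name not in detections:
                changes.append(f"{name} is no longer visible")
        # Replace memory with the latest snapshot of the scene.
        self.positions = dict(detections)
        return changes


memory = SceneMemory()
memory.observe({"mug": (1.0, 2.0), "keys": (0.5, 0.5)})
print(memory.observe({"mug": (3.0, 2.0), "keys": (0.5, 0.5)}))
# ['mug moved from (1.0, 2.0) to (3.0, 2.0)']
```

The key point is that the second call only makes sense because the first one was remembered. A system that starts from zero on every interaction could describe the room, but it could not say what changed.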

This kind of memory creates continuity and allows artificial intelligence to move beyond simple reactions toward more adaptive behavior.

Human intelligence works similarly, relying on memory and observation to understand the world.

The Power of Multimodal Reasoning

Another important advancement is multimodal reasoning.

Modern AI models already handle text and images effectively, but world-aware systems go further, combining vision, sound, and language into a single stream of understanding.

For instance, a person might point to an object while asking a question aloud. The AI can interpret the gesture visually, identify the object, and process the spoken request at the same time.
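One way to picture the pointing example is as a matching problem between two event streams. The Python sketch below is a hand-written rule for illustration only: `GestureEvent`, `SpeechEvent`, `resolve_reference`, and the 1.5-second window are all assumptions, and real multimodal models typically learn this alignment rather than apply an explicit rule.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GestureEvent:
    timestamp: float  # seconds since the session started
    target: str       # object an assumed vision model says is pointed at


@dataclass
class SpeechEvent:
    timestamp: float
    text: str


def resolve_reference(speech: SpeechEvent, gestures: list[GestureEvent],
                      window: float = 1.5) -> Optional[str]:
    """Return the target of the gesture closest in time to the utterance.

    Gestures more than `window` seconds away are ignored; returns None
    if no gesture falls inside the window.
    """
    nearby = [g for g in gestures
              if abs(g.timestamp - speech.timestamp) <= window]
    if not nearby:
        return None
    closest = min(nearby, key=lambda g: abs(g.timestamp - speech.timestamp))
    return closest.target


gestures = [GestureEvent(2.0, "lamp"), GestureEvent(10.2, "red mug")]
question = SpeechEvent(10.0, "What is this?")
print(resolve_reference(question, gestures))
# red mug
```

Here the word "this" in the spoken question is resolved to the red mug because the pointing gesture happened at nearly the same moment. Neither input alone would be enough to answer the question.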

This type of interaction feels far more natural than communicating with systems that rely only on text.

A Turning Point for AI

The potential uses for this technology are wide-ranging. In education, AI tutors could observe students solving problems and offer guidance. In healthcare, intelligent assistants might monitor patients and alert doctors to changes. Robotics systems could also operate more safely by understanding their environment in real time.

For decades, AI mostly existed inside computers, analyzing data and generating outputs. Systems based on models like Gemini are beginning to bridge the gap between digital intelligence and the physical world.

The era of world-aware AI may just be beginning.