Google has introduced a major wave of AI updates, and together they amount to far more than a routine product refresh. The company is clearly moving toward a future where AI does not just answer questions but actively builds software, manages workflows, and operates inside real productivity systems. The biggest announcements include a major upgrade to Google AI Studio, a rumored dedicated Gemini app for macOS, and a new way for AI agents to interact directly with Google Colab.

Together, these updates reveal Google’s larger strategy. The company wants AI to become deeply integrated into how apps are created, how devices are used, and how people work every day. Instead of AI acting like a separate chatbot in a browser tab, Google is pushing toward systems that can participate directly in real tasks and workflows.

AI Studio Now Builds More Advanced Apps

One of the biggest changes is happening inside Google AI Studio. Google upgraded its vibe coding experience with a new coding agent called Antigravity. The goal is simple but ambitious: users describe the app they want, and the AI attempts to generate something much closer to a working application than a rough prototype.

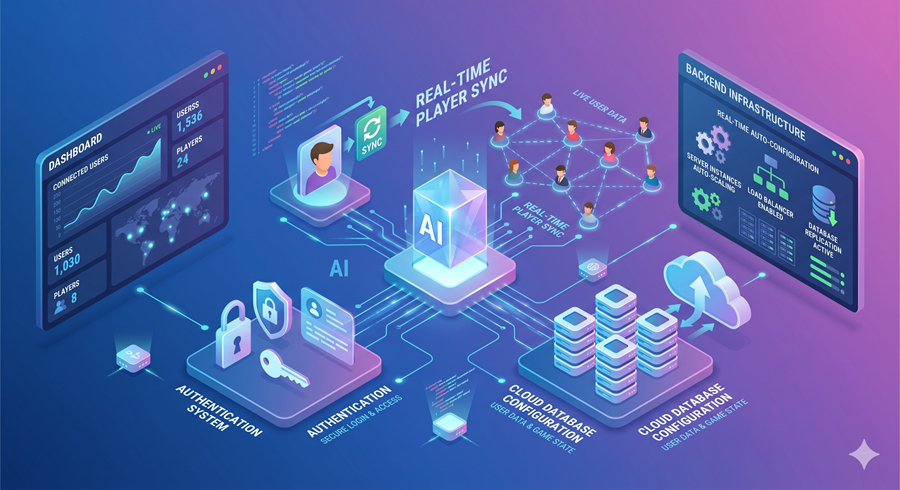

What makes this significant is the complexity of the apps AI Studio can now handle. According to Google, the platform can build real-time multiplayer experiences, a big jump beyond most AI app builders available today. Multiplayer systems require live syncing, shared databases, user authentication, and backend coordination, which are exactly the areas where AI-generated apps tend to fail.

Google is trying to solve that by integrating Firebase services directly into the workflow. The agent can recognize when an app needs cloud data storage or user authentication and, after the user approves, automatically configure a Firestore database and Google sign-in. In other words, the AI no longer generates only interface code; it handles backend infrastructure as well.
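To make the "live syncing" requirement more concrete, here is a toy Python sketch of the problem a multiplayer backend has to solve: many clients share one piece of state, every accepted change is broadcast to all of them, and a write based on an outdated view is rejected instead of silently overwriting another player’s move. It is an illustration under stated assumptions, not how AI Studio or Firebase implement this. In a real app a hosted database such as Firestore would hold the state and push updates over the network, and the names `Room`, `update`, and `expected_version` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical client callback: receives a snapshot of the shared state and its version.
Listener = Callable[[dict, int], None]


@dataclass
class Room:
    """Toy in-memory shared state for one multiplayer session (illustration only)."""
    state: dict = field(default_factory=dict)
    version: int = 0
    listeners: list[Listener] = field(default_factory=list)

    def subscribe(self, listener: Listener) -> None:
        # Register a client and immediately send it the current snapshot.
        self.listeners.append(listener)
        listener(dict(self.state), self.version)

    def update(self, player: str, key: str, value: Any, expected_version: int) -> bool:
        # Optimistic concurrency check: a write based on an outdated version is
        # rejected, so two players cannot silently overwrite each other.
        if expected_version != self.version:
            return False
        self.state[key] = {"value": value, "by": player}
        self.version += 1
        # Broadcast the new snapshot to every connected client.
        for listener in self.listeners:
            listener(dict(self.state), self.version)
        return True


# Usage: two clients both start from version 0 and race to set the score.
room = Room()
seen: dict[str, int] = {}
room.subscribe(lambda snapshot, version: seen.update(alice=version))
room.subscribe(lambda snapshot, version: seen.update(bob=version))
alice_ok = room.update("alice", "score", 10, expected_version=0)
bob_ok = room.update("bob", "score", 7, expected_version=0)  # stale: rejected
print(alice_ok, bob_ok, room.version, seen)  # True False 1 {'alice': 1, 'bob': 1}
```

Optimistic concurrency (check a version, reject stale writes) is only one of several ways to handle conflicting updates; it is used here because it fits in a few lines.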

The platform also supports modern frameworks like React, Angular, and Next.js. Next.js support matters in particular because it has become one of the most widely used frameworks for professional web applications. Google clearly wants developers to see AI Studio as a tool for building production-style apps rather than experimental demos.

Gemini Could Become A Native macOS AI Assistant

At the same time, reports suggest Google is preparing a dedicated Gemini app for macOS. On the desktop, Gemini has so far lived mostly inside browser tabs, so a native app would significantly change how users interact with Google’s AI.

Once AI assistants are integrated directly into the operating system, users begin to expect deeper functionality. Instead of simply answering prompts, an assistant could access files, organize tasks, search documents, and take part in system-level workflows. That would put Gemini in direct competition with ChatGPT and Claude, both of which already offer desktop apps, for the role of an always-available desktop assistant.

The bigger strategic story is Google’s growing partnership with Apple. Recent reports suggest Apple may rely on Gemini models and Google infrastructure to support parts of its future AI ecosystem, including Siri improvements and broader Apple Intelligence features. If Gemini gains deeper integration with Apple’s ecosystem, Google could become a major invisible layer underneath millions of Apple devices.

Google Colab Gets AI Agent Support

Another major announcement involves Google Colab. Google released a new Colab MCP server that lets AI agents work directly with Colab notebooks instead of simply generating code for users to copy by hand.

This substantially changes the workflow. Previously, users had to ask an AI for code, paste it into Colab, run it themselves, and troubleshoot errors by hand. Now AI agents can create notebooks, execute Python code, install packages, analyze datasets, and keep fixing errors on their own.
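The execute, inspect, fix cycle described above can be sketched as a small loop. This is a generic illustration under stated assumptions, not the Colab MCP server’s actual logic: `run_with_repair` and `toy_reviser` are hypothetical names, the model call is simulated by a function that patches one known mistake, and plain `exec` stands in for a notebook kernel.

```python
import traceback
from typing import Callable

# Hypothetical stand-in for a model call: takes the failing code and its
# traceback and returns a revised version of the code.
Reviser = Callable[[str, str], str]


def run_with_repair(code: str, revise: Reviser, max_attempts: int = 3) -> dict:
    """Run code; on an exception, ask `revise` for a fix and retry, up to max_attempts runs."""
    history = []
    for attempt in range(1, max_attempts + 1):
        namespace: dict = {}
        try:
            exec(code, namespace)  # a notebook would run this in a sandboxed kernel
            history.append((attempt, "ok"))
            return {"ok": True, "namespace": namespace, "history": history}
        except Exception:
            error = traceback.format_exc()
            # Keep only the final "ExceptionType: message" line for the log.
            history.append((attempt, error.strip().splitlines()[-1]))
            if attempt < max_attempts:
                code = revise(code, error)
    return {"ok": False, "namespace": None, "history": history}


def toy_reviser(code: str, error: str) -> str:
    # A real agent would send the error to a model; this toy patches one known mistake.
    if "NameError" in error and "statistics" in error:
        return "import statistics\n" + code
    return code


result = run_with_repair("avg = statistics.fmean([3, 5, 10])", toy_reviser)
print(result["ok"], result["namespace"]["avg"], len(result["history"]))  # True 6.0 2
```

The `max_attempts` cap is the important design choice: without it, an agent that keeps producing the same broken fix would loop forever.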

The system is built on MCP, the Model Context Protocol, an open standard introduced by Anthropic that defines how AI applications connect to external tools and data sources. Through it, agents can use Colab almost like a real software environment instead of treating it as a disconnected platform.
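MCP messages use JSON-RPC 2.0. In the specification, a client discovers a server’s tools with a `tools/list` request and invokes one with `tools/call`, passing the tool `name` and its `arguments`. The sketch below only builds and parses those messages with Python’s standard library; it omits the transport (stdio or HTTP) and the initialization handshake. The tool name `run_code` is a hypothetical placeholder, since the actual tools exposed by the Colab MCP server are not described here, and the response is hand-written for illustration.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # JSON-RPC ids only need to be unique within a session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP is built on."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def tool_result_text(raw_response: str) -> str:
    """Extract the text parts from a tools/call response, raising on errors."""
    response = json.loads(raw_response)
    if "error" in response:  # protocol-level failure, e.g. an unknown method
        raise RuntimeError(response["error"]["message"])
    result = response["result"]
    text = "".join(p["text"] for p in result.get("content", []) if p.get("type") == "text")
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError(text)
    return text


# Discover the server's tools, then call one ("run_code" is hypothetical).
list_request = jsonrpc_request("tools/list")
call_request = jsonrpc_request(
    "tools/call", {"name": "run_code", "arguments": {"code": "print(1 + 1)"}}
)

# Hand-written example of what a successful response could look like.
example_response = json.dumps({
    "jsonrpc": "2.0",
    "id": 2,
    "result": {"content": [{"type": "text", "text": "2\n"}], "isError": False},
})
print(call_request)
print(tool_result_text(example_response).strip())  # 2
```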

The Colab integration matters because it pushes AI beyond text generation and into operational problem-solving. Developers can ask an AI to analyze CSV files, generate plots, or build machine learning workflows while the AI handles much of the execution directly.
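As an example of the kind of code an agent might write and run in a notebook for a "summarize this CSV" request, here is a minimal sketch. It uses only the Python standard library so it runs anywhere; an agent working in Colab would more likely reach for pandas and a plotting library, and plotting is left out. The function name `summarize_csv` and the sample data are made up for illustration.

```python
import csv
import io
import statistics


def summarize_csv(text: str) -> dict[str, dict[str, float]]:
    """Return count, mean, min and max for every column whose values are all numeric."""
    rows = list(csv.DictReader(io.StringIO(text)))
    summary: dict[str, dict[str, float]] = {}
    if not rows:
        return summary
    for column in rows[0]:
        try:
            values = [float(row[column]) for row in rows]
        except (TypeError, ValueError):
            continue  # skip text columns (labels, names) and rows with missing cells
        summary[column] = {
            "count": len(values),
            "mean": statistics.fmean(values),
            "min": min(values),
            "max": max(values),
        }
    return summary


# Made-up sample data, standing in for an uploaded CSV file.
sample = "item,price,qty\nA,2.50,4\nB,4.00,1\nC,1.50,10\n"
for name, stats in summarize_csv(sample).items():
    print(name, stats)
```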

Google’s Bigger AI Strategy Is Becoming Clear

All of these announcements point toward the same trend. Google is trying to move AI from being a chatbot to becoming a deeply integrated operating layer across software, devices, and workflows. AI Studio focuses on app creation, Gemini aims to become a system-level assistant, and Colab integration turns AI into a direct participant in technical work.

The company is no longer treating AI as a separate feature. It is positioning AI as infrastructure that sits underneath everything else. That shift could completely change how people build software, interact with computers, and manage everyday tasks in the years ahead.