I used to think modern AI systems were incredibly efficient. Then I realized something strange. They often behave like a world-class chef who insists on growing peanuts from scratch just to make a simple sandwich.

That is essentially how many AI models work today.

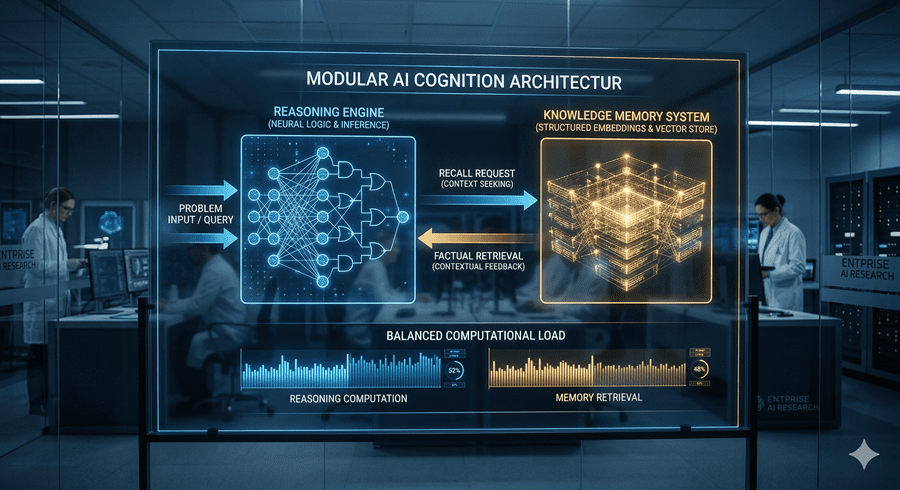

The Hidden Inefficiency in AI

When I ask a basic question, I assume the system simply retrieves the answer. Instead, it reconstructs that knowledge every single time, running the question through many layers of heavy computation rather than doing a quick lookup.

This design comes from transformer-based architectures, which dominate modern AI. They are powerful, but they lack a simple mechanism to store and retrieve facts efficiently. Every response becomes a fresh reconstruction.

It works, but it is wasteful.

A Smarter Approach: The “Memory Pantry”

A new idea changes this completely. Instead of forcing AI to recompute everything, researchers introduced something like a memory pantry. They call it an “engram.”

Now, instead of recreating knowledge, the system can store useful information and fetch it with a direct lookup when needed. It is the difference between cooking from scratch and grabbing pre-prepared ingredients.
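To make the pantry idea concrete, here is a minimal sketch of lookup-before-recompute. It is only an illustration, not the researchers' implementation: the `MemoryPantry` class, the `answer` helper, and the choice to key the memory on the last few tokens are all assumptions of mine.

```python
from typing import Callable, Dict, List, Optional, Tuple


class MemoryPantry:
    """Toy key-value memory keyed by the last few tokens of the input (hypothetical)."""

    def __init__(self, ngram_size: int = 2) -> None:
        self.ngram_size = ngram_size
        self._table: Dict[Tuple[str, ...], str] = {}

    def _key(self, tokens: List[str]) -> Tuple[str, ...]:
        # Only the trailing n-gram is used as the lookup key.
        return tuple(tokens[-self.ngram_size:])

    def store(self, tokens: List[str], value: str) -> None:
        self._table[self._key(tokens)] = value

    def retrieve(self, tokens: List[str]) -> Optional[str]:
        # Returns None when nothing is stored under this key.
        return self._table.get(self._key(tokens))


def answer(
    tokens: List[str],
    pantry: MemoryPantry,
    recompute: Callable[[List[str]], str],
) -> str:
    """Check the pantry first; fall back to the expensive path only on a miss."""
    cached = pantry.retrieve(tokens)
    if cached is not None:
        return cached
    result = recompute(tokens)
    pantry.store(tokens, result)
    return result
```

In this toy version, the expensive `recompute` function runs once per distinct key. Every later question that ends in the same tokens is served straight from the table.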

What surprised me was not just the efficiency gain. It was what happened next.

Less Complexity, Better Intelligence

In many systems, performance improves by adding more complexity. More layers, more experts, more computation. Here, the opposite happened.

When parts of the complex reasoning system were removed and replaced with this memory mechanism, the model actually performed better, making fewer mistakes across benchmarks.

That result feels counterintuitive. But it reveals something important. Intelligence is not just about processing power. It is also about knowing when not to compute.

Filtering What Matters

The system does not blindly trust its memory. It uses a filtering mechanism that checks whether retrieved information fits the current context.

If something does not match, it gets discarded immediately. This prevents irrelevant or misleading data from influencing the result.

It is a simple idea, but incredibly effective. The system learns not just to remember, but to remember selectively.
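The filtering step can be sketched in a few lines. This is a rough illustration under my own assumptions: the context and the retrieved memory are plain vectors, "fit" is measured with cosine similarity, and the `threshold` value is arbitrary. The real system may score and gate its memory quite differently.

```python
import math
from typing import List


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def gate_memory(
    context: List[float],
    retrieved: List[float],
    threshold: float = 0.5,  # hypothetical cutoff, chosen for illustration
) -> List[float]:
    """Keep retrieved memory only if it fits the current context."""
    score = cosine_similarity(context, retrieved)
    if score < threshold:
        # Poor match: discard the memory so it cannot influence the result.
        return [0.0] * len(retrieved)
    # Good match: pass the memory through, scaled by how well it fits.
    return [score * x for x in retrieved]
```

A memory vector that points in the same direction as the context passes through, while an unrelated one is zeroed out before it can affect the output.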

A Glimpse Into the Future of AI

One of the most fascinating outcomes is how the model separates tasks internally. When the memory component is disabled, factual recall drops sharply. But reasoning ability remains largely intact.

This suggests a kind of specialization. One part of the system stores knowledge, while another focuses on understanding and reasoning.

To me, this feels like a turning point. By handing the easy part, factual recall, to memory and spending computation on harder problems, AI becomes both cheaper and more capable.

It also hints at a future where powerful systems run locally, without massive costs or hidden infrastructure.

Sometimes progress does not come from adding more. It comes from simplifying what already exists.
