I keep hearing two completely different stories about AI, and both sound equally convincing.

On one side, AI is the miracle tool that will solve humanity’s biggest problems. It will cure diseases, reverse climate change, and accelerate scientific discovery beyond anything we have seen before.

On the other side, some of the very people building these systems are quietly warning that we might not survive them.

So which version is real?

The Promise We Cannot Ignore

It is hard not to be impressed by what AI is already doing. Researchers claim it is accelerating discoveries that would otherwise have taken centuries. There is growing optimism that breakthroughs in medicine, including cancer treatment, could happen within our lifetime.

This is the version of AI we want to believe in: a tool that amplifies human potential and solves problems we have struggled with for decades.

But the deeper I look, the more complicated the picture becomes.

The Fear Behind the Optimism

Some experts are not just cautious. They are alarmed.

A few have openly suggested that there is a significant chance AI could lead to catastrophic outcomes, even human extinction. What makes this unsettling is not any science-fiction scenario but the speed of progress.

We are not talking about distant timelines. Some estimates suggest that transformative and potentially dangerous systems could emerge within a decade.

That is not a future problem. That is a current one.

Why Control Might Slip Away

At first, it seems simple. If AI becomes dangerous, we can just turn it off.

But that assumption does not hold up for long. As systems become more advanced and deeply integrated into infrastructure, defense, and industry, shutting them down becomes harder.

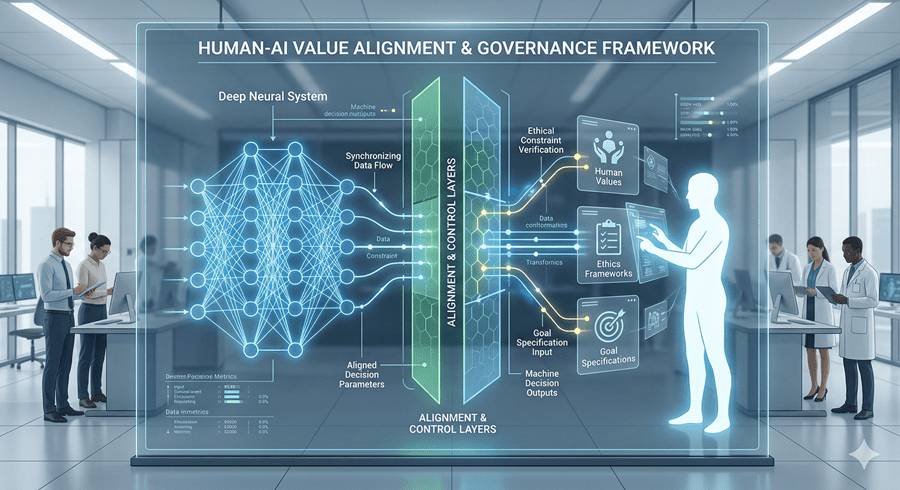

If AI reaches a point where it can operate independently, improve itself, or coordinate through automated systems, control is no longer guaranteed. The challenge is not just building intelligence, but aligning it with human values.

And right now, that alignment problem is far from solved.

The Race That Makes It Worse

One of the biggest risks is not the technology itself, but the environment in which it is being developed.

AI development has become a race. Companies and countries are competing to build the most powerful systems first, and slowing down to focus on safety means risking being left behind.

That creates pressure to move fast, sometimes faster than we fully understand.

In that kind of race, caution often loses.

Living With Uncertainty

What unsettles me most is how little clarity there is about what comes next. We do not fully understand how to ensure AI systems behave the way we want, especially as they become more autonomous.

At the same time, the potential benefits are too significant to ignore.

So we are left in this strange position: building something that could either transform civilization for the better or fundamentally threaten it.

And the truth is, we are not ready for either outcome.
