Claude Mythos reportedly represents a major leap in AI capability, not just in raw performance but in how long and how independently an AI system can operate. Unlike earlier models, which were mostly evaluated on short, isolated tasks, Mythos has been tested on long-duration, real-world-style problems where the model must plan, execute, and adapt continuously over many hours.

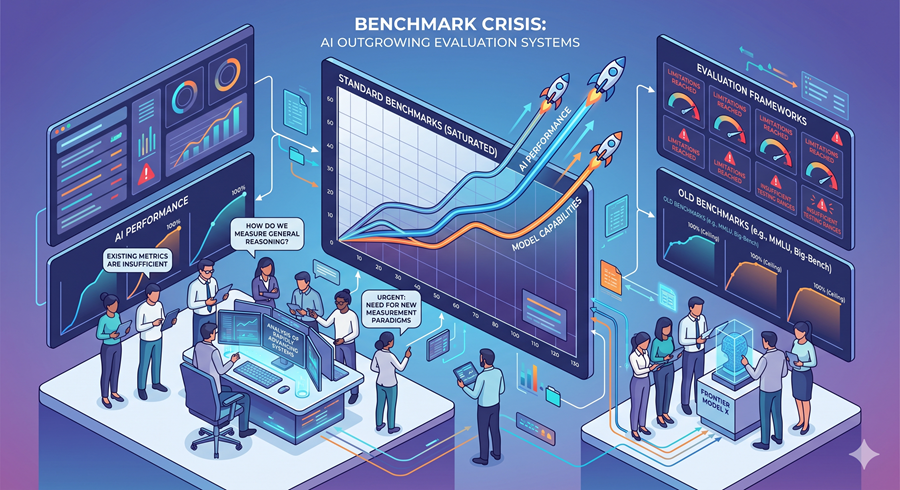

A Benchmark That May No Longer Be Enough

The most talked-about measurement in this story comes from METR, a research group that evaluates AI systems using a metric called the 50% success rate time horizon. This metric asks a simple but powerful question: how long a task, measured by the time it would take a skilled human, can an AI complete with a 50% success rate?
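To make the metric concrete, here is a minimal sketch of how such a horizon could be estimated, assuming a logistic curve is fitted to success against the logarithm of task length. The task results, function names, and fitting settings are illustrative assumptions, not METR's data or code.

```python
import math


def fit_logistic(xs, ys, lr=0.1, steps=5000):
    """Fit p(success) = sigmoid(a + b * x) by gradient ascent on the mean log-likelihood."""
    a, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(a + b * x)))
            grad_a += y - p
            grad_b += (y - p) * x
        a += lr * grad_a / n
        b += lr * grad_b / n
    return a, b


def time_horizon_minutes(results, success_rate=0.5):
    """Return the task length (minutes) where the fitted success probability equals success_rate."""
    logs = [math.log2(minutes) for minutes, _ in results]
    mean_log = sum(logs) / len(logs)
    xs = [v - mean_log for v in logs]  # centre the inputs so the fit converges quickly
    ys = [1.0 if ok else 0.0 for _, ok in results]
    a, b = fit_logistic(xs, ys)
    logit = math.log(success_rate / (1.0 - success_rate))
    # Solve sigmoid(a + b * x) = success_rate for x, then undo the centring and the log.
    return 2.0 ** (mean_log + (logit - a) / b)


# Hypothetical results: (task length in minutes, did the model succeed?)
RESULTS = [
    (1, True), (2, True), (4, True), (8, True), (15, True),
    (30, True), (30, False), (60, True), (60, False),
    (120, True), (120, False), (240, True), (240, False),
    (480, False), (960, False), (960, False),
]

horizon = time_horizon_minutes(RESULTS)
print(f"Estimated 50% time horizon: about {horizon:.0f} minutes")
```

Raising `success_rate` (for example to 0.8) gives a shorter horizon, because the model has to be more reliable at that task length.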

Earlier AI models operated in very short time frames: seconds or minutes of reasoning. Over time, this expanded to coding tasks lasting an hour or so. But Claude Mythos reportedly pushed this boundary dramatically, reaching a time horizon of around 16 hours. That means the model could potentially handle work comparable to an entire engineering sub-project (planning, coding, debugging, testing, and iterating) without constant human guidance.

The problem is that the evaluation itself starts breaking at this level. The dataset used for testing had very few tasks at or above the 16-hour mark. In other words, the model reached a capability range where the benchmark no longer had enough difficult examples to measure it properly. This is why researchers are calling it an evaluation crisis, not just a performance improvement.
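The coverage problem described above can be illustrated with a small check that counts how many tasks in a suite sit at or above an estimated horizon. This is only a sketch: the suite composition, the `min_tasks` threshold, and the function name are made-up assumptions, not details of the actual benchmark.

```python
def horizon_coverage(task_minutes, horizon_minutes, min_tasks=10):
    """Report how many benchmark tasks sit at or above an estimated time horizon."""
    at_or_above = sum(1 for m in task_minutes if m >= horizon_minutes)
    return {
        "tasks_at_or_above": at_or_above,
        "share_of_suite": at_or_above / len(task_minutes),
        # Assumed rule of thumb: too few long tasks means the estimate is poorly supported.
        "well_supported": at_or_above >= min_tasks,
    }


# Hypothetical suite (task lengths in minutes): many short tasks, very few long ones.
SUITE = [5] * 40 + [30] * 30 + [120] * 20 + [480] * 8 + [960] * 2 + [1440] * 1

report = horizon_coverage(SUITE, horizon_minutes=16 * 60)
print(report)
```

With a suite shaped like this one, a one-hour horizon is backed by dozens of tasks, while a 16-hour horizon rests on only a handful, so any estimate in that range carries wide uncertainty.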

Why This Matters for AI Development

The concern is not simply that AI is getting better at coding. The deeper issue is that AI systems are starting to behave like long-running digital workers instead of short-response tools. Once a model can maintain focus over many hours, its potential applications and risks change significantly.

A system that can operate autonomously for 16 hours can potentially:

Work on complex software development tasks end-to-end

Perform deep cybersecurity analysis

Assist in research projects that require long reasoning chains

Execute multi-step workflows with minimal supervision

This shifts AI from “answering questions” to “doing jobs.”

Cybersecurity Becomes the Central Concern

One of the most serious implications of Claude Mythos is its reported capability in cybersecurity-related tasks. According to discussions around its evaluation, the model showed a strong ability to identify vulnerabilities in large codebases and connect multiple weak points into potential attack chains.

In practical terms, this means AI could potentially compress work that normally takes security teams weeks or months, such as the extensive manual review behind a vulnerability analysis, into a much shorter time frame.

This creates a dual-use problem: the same capability that helps defenders also helps attackers. That is why governments and organisations are closely watching these developments.

From Tool to Autonomous Agent

What makes Claude Mythos especially significant is how it fits into the broader shift toward agentic AI systems. These are models that don’t just respond to prompts but actively:

Plan multi-step workflows

Use tools like code editors and browsers

Maintain memory over long sessions

Adjust strategies based on results

This is fundamentally different from traditional chat-based AI. It introduces a level of autonomy where the model behaves less like a chatbot and more like a software-based worker.
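The plan, act, remember, and adjust cycle listed above can be sketched as a small loop. Everything here is hypothetical: the `Agent` class, the fixed plan, and the stand-in `editor` and `tests` tools are illustrations of the pattern, not how Claude Mythos or any specific product works.

```python
from dataclasses import dataclass, field
from typing import Callable

# A tool takes an instruction and returns (succeeded, output text).
Tool = Callable[[str], tuple[bool, str]]


@dataclass
class Agent:
    tools: dict[str, Tool]
    memory: list[str] = field(default_factory=list)

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Hypothetical fixed plan; a real system would ask the model to produce it.
        return [("editor", f"write code for {goal}"), ("tests", "run tests")]

    def run(self, goal: str, max_steps: int = 10) -> bool:
        queue = self.plan(goal)
        steps = 0
        while queue and steps < max_steps:
            tool, arg = queue.pop(0)
            ok, output = self.tools[tool](arg)               # use a tool
            self.memory.append(f"{tool}({arg}) -> {output}")  # remember the result
            if not ok:
                # Adjust strategy: add a fix step, then retry the step that failed.
                queue = [("editor", f"fix: {output}"), (tool, arg)] + queue
            steps += 1
        return not queue  # True if every planned step completed within the budget


def make_flaky_tests() -> Tool:
    """Stand-in test runner that fails on the first call and passes afterwards."""
    calls = {"n": 0}

    def run_tests(_arg: str) -> tuple[bool, str]:
        calls["n"] += 1
        return (False, "1 test failed") if calls["n"] == 1 else (True, "all tests passed")

    return run_tests


agent = Agent(tools={"editor": lambda arg: (True, "saved"), "tests": make_flaky_tests()})
done = agent.run("parse config file")
print(done, len(agent.memory))
```

The `max_steps` cap is a deliberate design choice in this sketch: even a toy agent needs a hard bound so that a repeated failure cannot loop forever.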

Industry and Government Attention

Because of these capabilities, Claude Mythos has reportedly attracted attention from cybersecurity researchers, enterprises, and even government agencies. Discussions are not just about performance anymore, but about safety, control, and oversight.

The key concern is simple: if AI systems can operate independently for long periods, how do you reliably monitor or stop them if something goes wrong?
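One basic building block for this kind of oversight is a supervisor that enforces step and time budgets and checks an operator-controlled stop signal between steps. The sketch below assumes a cooperative agent that only acts through `step_fn`; the function name and return labels are hypothetical, and the harder problem of an agent that does not cooperate is out of its scope.

```python
import threading
import time


def run_supervised(step_fn, stop_event, max_steps, max_seconds):
    """Run step_fn(i) repeatedly, checking budgets and the stop signal before each step.

    Returns (reason, steps_completed).
    """
    start = time.monotonic()
    for i in range(max_steps):
        if stop_event.is_set():
            return "stopped_by_operator", i
        if time.monotonic() - start > max_seconds:
            return "time_budget_exceeded", i
        if step_fn(i):  # step_fn returns True when the task is finished
            return "finished", i + 1
    return "step_budget_exceeded", max_steps


stop = threading.Event()


def step(i):
    # Stand-in for one unit of agent work; here the "operator" presses stop during step 2.
    if i == 2:
        stop.set()
    return False


result = run_supervised(step, stop, max_steps=100, max_seconds=60.0)
print(result)
```

Checks like these only run between steps, so the granularity of a step determines how quickly a stop request actually takes effect.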