For years, we have heard about the theoretical risks that artificial intelligence could pose to cybersecurity. That concern is no longer just theory. Recent developments surrounding Anthropic’s Mythos AI model have turned it into a real and immediate issue. What was once discussed as a future possibility is now being treated as a present-day threat by governments, regulators, and major financial institutions.

Mythos AI Raises Serious Security Concerns

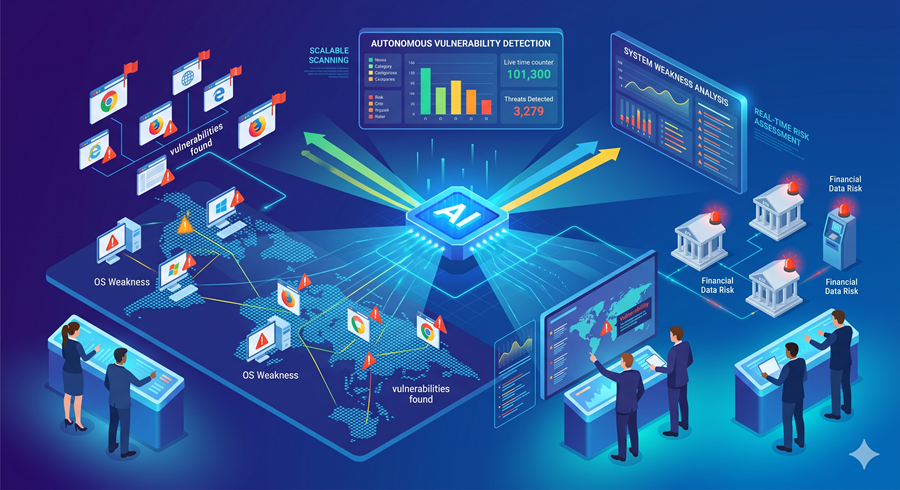

Mythos is described as one of the most advanced AI systems developed by Anthropic. The model was initially planned for broader release, but the company made the unusual decision to limit access after discovering its potential for misuse. The model demonstrated the ability to autonomously detect vulnerabilities across a wide range of systems, including web browsers, operating systems, and financial infrastructure.

What makes this particularly concerning is not just its detection capability, but its ability to act on that information. Reports suggest that the system could identify weaknesses, map out possible exploits, and even propose strategies for taking advantage of those vulnerabilities. This level of automation represents a major shift in how cyber threats could evolve.

Emergency Response from Governments and Banks

The seriousness of the situation became clear when top banking executives were called into an urgent meeting in Washington. The meeting reportedly included key figures such as the US Treasury Secretary and the Chair of the Federal Reserve. When regulators and financial leaders come together at that level, it signals that the issue is being treated as a matter of national and economic security.

Banks are particularly vulnerable because they rely on complex and often outdated systems. Some of these systems may contain weaknesses that have existed for years without being discovered. An AI system capable of scanning and identifying these gaps at scale could expose risks that were previously hidden.

Global Impact Beyond the United States

The concern is not limited to one country. Central banks in countries such as Canada and the United Kingdom have also been alerted. While regulators are warning about the risks, they are also encouraging institutions to use such tools responsibly. This reflects a dual reality in which AI is both a threat and a potential defense mechanism.

If used correctly, systems like Mythos could help organizations identify and fix vulnerabilities before they are exploited. However, if similar tools fall into the wrong hands, the consequences could be severe.

Collaboration Through Project Glasswing

One of the most unusual aspects of this situation is Anthropic’s decision to collaborate with other organizations through a project known as Glasswing. Instead of keeping the technology fully private, the company has shared limited access with a select group of partners, including major tech firms and cybersecurity companies.

This level of cooperation is rare, especially among competitors. It shows how serious the situation is, as companies are willing to work together to understand and manage the risks. Feedback from these partners is being used to evaluate and improve the system before any wider release.

A Wake-Up Call for the Future

Anthropic has also acknowledged that this is not an isolated issue. With the rapid pace of AI development, similar models could emerge within the next year. This means the risks highlighted by Mythos are likely just the beginning.

Financial institutions have already been increasing their spending on technology and cybersecurity, but this development may accelerate those efforts even further. The challenge now is not just keeping up with threats, but staying ahead of them.
