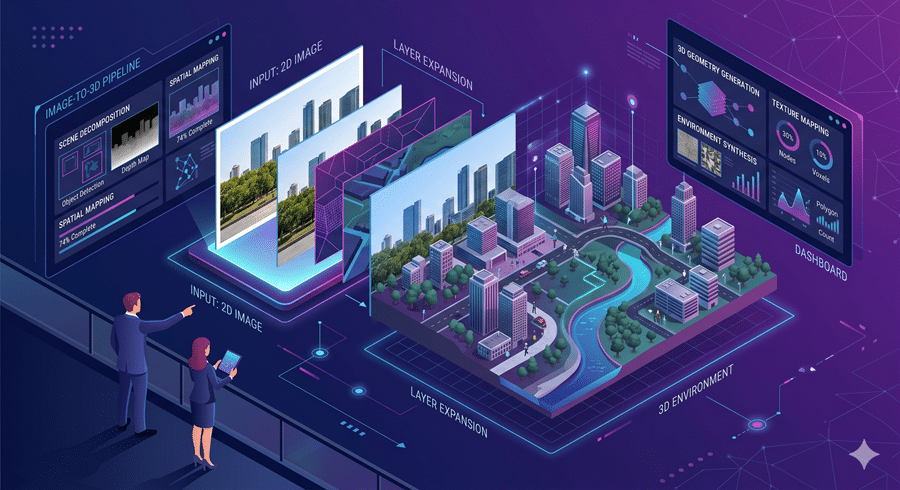

Now and then, a research release feels less like a technical upgrade and more like a glimpse of where computing is headed. This is one of those moments. A new system can take a single image and generate a 3D world that I can explore. Not just a stitched panorama. Not just a fake camera pan. A navigable, interactive environment built from one frame. That is the breakthrough.

The appeal is obvious. A single photo can become a memory I can revisit, a training ground for robots, or a simulated environment for autonomous systems. It turns static imagery into something spatial, explorable, and useful. That changes what one image can be.

The core idea is not entirely new. We have seen systems generate interactive worlds from images before. The problem was consistency. Earlier models could create convincing worlds for a moment, but they struggled with permanence. Look at something, turn away, then look back and the world might change. Objects drifted. Layouts shifted. The illusion broke. That made these systems impressive, but unreliable.

The underlying issue was memory. These models could generate plausible frames, but they did not reliably preserve what had already been seen. They were creating scenes, not maintaining worlds. That distinction matters more than it sounds.

What makes this system different is not just that it generates better visuals. It remembers structure. Instead of trying to memorize an entire scene as raw imagery, it stores a lightweight 3D scaffold of what was already observed. Not the full world, just enough geometry to preserve consistency. That means when I look away and return, the model is not inventing the scene again. It is reconstructing it from remembered spatial structure.

This is the real leap. The model is no longer guessing what should be there. It is referencing what it already knows was there. That is what makes the world feel stable.
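To make the idea concrete, here is a toy sketch in Python of what "remembering structure" can look like. It is not the system's actual implementation, which the release does not spell out here. The `SpatialMemory` class, its voxel cell size, and the flat field-of-view check are all my own illustrative assumptions. The point is only that once observed geometry is stored, looking back at a region returns what was stored instead of something freshly invented.

```python
import math
from dataclasses import dataclass, field


@dataclass
class SpatialMemory:
    """Hypothetical scaffold: sparse 3D points observed so far, one per voxel cell."""
    cell: float = 0.25                           # assumed voxel size in scene units
    points: dict = field(default_factory=dict)   # voxel index -> (x, y, z, label)

    def _key(self, x, y, z):
        # Quantize a position to the voxel cell that contains it.
        return tuple(math.floor(v / self.cell) for v in (x, y, z))

    def remember(self, x, y, z, label):
        # First observation wins, so a later view cannot overwrite what was seen.
        self.points.setdefault(self._key(x, y, z), (x, y, z, label))

    def visible(self, yaw_deg, fov_deg=90.0):
        """Labels of remembered points inside the horizontal field of view.

        The camera sits at the origin; yaw 0 looks down +z, yaw 90 looks down +x.
        """
        half = math.radians(fov_deg) / 2
        yaw = math.radians(yaw_deg)
        seen = []
        for x, _y, z, label in self.points.values():
            bearing = math.atan2(x, z)
            # Wrap the angular difference into [-pi, pi).
            diff = (bearing - yaw + math.pi) % (2 * math.pi) - math.pi
            if abs(diff) <= half:
                seen.append(label)
        return sorted(seen)


memory = SpatialMemory()
memory.remember(0.0, 0.0, 2.0, "chair")   # straight ahead
memory.remember(2.0, 0.0, 0.0, "lamp")    # off to the right
memory.remember(0.1, 0.0, 2.1, "table")   # same cell as the chair, so it is ignored

first_look = memory.visible(0)     # look ahead
look_away = memory.visible(90)     # turn right
look_back = memory.visible(0)      # turn back
```

In this sketch, `first_look` and `look_back` are identical because both are read from the same stored points. A real system would hand those remembered points to a generative model as conditioning and only synthesize the regions the memory does not cover.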

This matters far beyond visual demos. Stable world generation is foundational for simulation. It matters for robotics, autonomous systems, synthetic training environments, and interactive digital spaces that need to remain coherent over time. A robot cannot learn reliably inside a world that forgets itself. A simulation cannot train useful behavior if its logic collapses the moment perspective changes. Long-term consistency is what turns generative novelty into usable infrastructure.

The system still has limits. Dynamic scenes remain difficult. Lighting inconsistencies can carry through from training data. Reconstructed geometry still produces occasional artifacts. Those look like engineering problems, not fundamental barriers. The important shift has already happened. We are moving from AI that generates convincing images to AI that can preserve coherent worlds. That is a much bigger step.
