Most people looked at Opus 4.7 and asked the wrong question.

They asked whether it is smarter than everything else. That is not what matters. The real question is what Anthropic optimized it for, and the answer explains nearly all of the confusion around this release.

Opus 4.7 is not designed to be better at everything. It is designed to be better at work.

That sounds subtle, but it changes how the entire release should be interpreted.

This model was built for enterprise agents, not general users

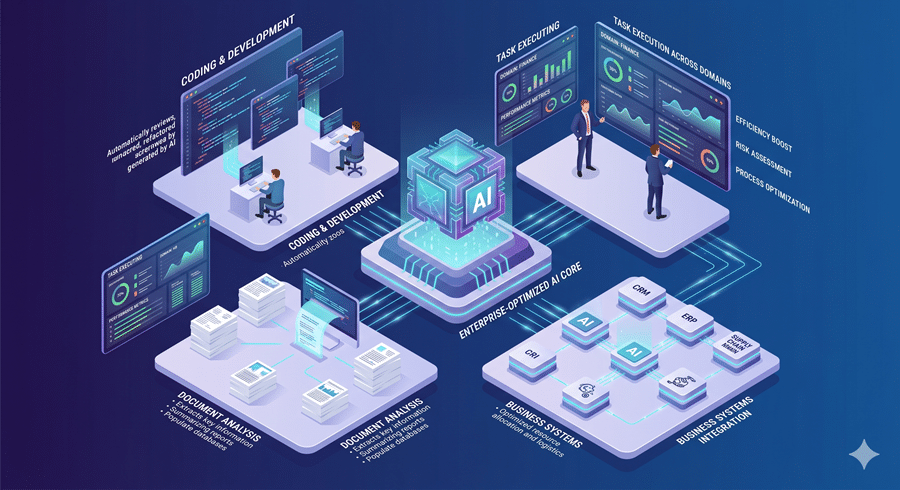

The strongest gains in Opus 4.7 are not broad intelligence gains. They are highly specific improvements in areas that matter to enterprise automation.

It is better at coding, stronger at tool use, more reliable with visual interfaces, and significantly better at reasoning across documents. It also holds context longer across messy, multi-step tasks without losing coherence. That is not a consumer optimization.

That is a model being tuned for software agents, internal workflows, and enterprise execution.

Anthropic is not optimizing for casual prompting anymore. It is optimizing for systems that can read interfaces, work through long tasks, reason across files, and complete economically useful work.

That is the real shift.

The benchmark story is being misunderstood

Most public benchmark discussion still treats AI like a generalized intelligence contest. Anthropic clearly does not.

The benchmarks that matter most here are the ones tied to economic output: coding, legal reasoning, finance, document analysis, and long-horizon task execution.

That is why Opus 4.7 looks much stronger on enterprise evaluations and much less impressive on more general reasoning or consumer-facing tasks.

This is not an inconsistency. It is prioritization. Anthropic is optimizing for business value, not broad likability.

Why some users think it feels worse

This is where the backlash starts to make sense.

If your use case is enterprise automation, Opus 4.7 is likely a real improvement. If your use case is broad reasoning, casual exploration, or general-purpose prompting, it may feel less impressive and, in some cases, worse.

That is not necessarily because the model is weaker overall. It is because capability is becoming more uneven.

AI is improving in a jagged way. It gets dramatically better in some domains while remaining flat or even weaker in others. Opus 4.7 is a clear example of that tradeoff.

The real issue is not just performance. It is access

The bigger problem is that most users are not experiencing the full version of what Opus 4.7 could be.

Anthropic appears to be managing compute aggressively, limiting reasoning depth, tightening access, and effectively pushing the best performance toward enterprise customers who can pay for sustained usage.

That makes Opus 4.7 feel worse for many users, even if the underlying model is stronger in targeted domains.

So the real story is not that Opus 4.7 is bad.

It is that Opus 4.7 is highly optimized, uneven by design, and increasingly built for enterprise customers first.
