I used to think the biggest challenge facing AI was intelligence. Now I realize it is energy.

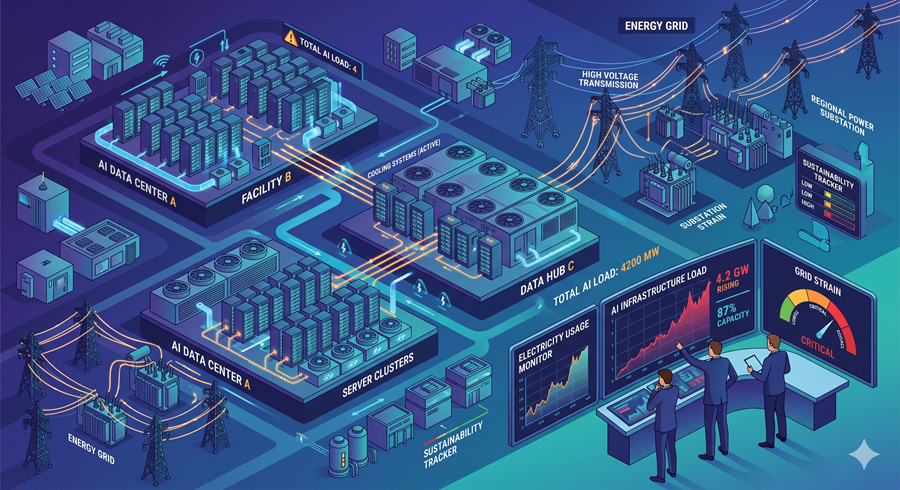

Every time I interact with an AI system, there is an invisible cost behind the scenes. Massive data centers process those requests, consuming enormous amounts of electricity. At scale, this is no small issue. Data centers running AI workloads now account for a growing share of electricity demand, and that share is rising faster than most people expected.

The Hidden Cost of Every AI Interaction

What surprised me most is how quickly AI infrastructure has expanded. Tech companies are pouring hundreds of billions of dollars into building data centers, and these facilities are not small. Some consume as much power as entire cities.

That creates a strange imbalance. On one hand, AI is becoming more powerful and more integrated into everyday workflows. On the other hand, the energy required to sustain that growth is pushing real-world limits. In some places, power grids are already feeling the pressure.

It raises a simple question I keep coming back to: how far can this scale before it becomes unsustainable?

The Breakthrough That Changes the Equation

What caught my attention recently is a shift in how AI systems are being designed. Instead of relying purely on brute computational force, researchers are exploring more efficient ways to process information.

One of the most promising approaches combines pattern recognition with structured reasoning. Rather than throwing massive compute at every problem, the system breaks tasks into smaller, logical steps, closer to how humans approach complex decisions.
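To make the idea concrete, here is a toy sketch in Python. It is not the system the researchers built, just an illustration under my own assumptions: `perceive` is a hypothetical stand-in for a learned pattern recognizer (here a simple lookup table), and `solve` is a hypothetical rule-based step that does the reasoning with ordinary arithmetic. The point is that the expensive part is only called where recognition is actually needed.

```python
from dataclasses import dataclass

# Hypothetical vocabulary; a real system would use a trained model here.
NUMBER_WORDS = {
    "zero": 0, "one": 1, "two": 2, "three": 3, "four": 4,
    "five": 5, "six": 6, "seven": 7, "eight": 8, "nine": 9,
}


@dataclass
class Result:
    answer: int
    recognizer_calls: int  # how often the "expensive" recognizer was used


def perceive(token: str) -> int:
    """Stand-in for pattern recognition: map a word to a number symbol."""
    return NUMBER_WORDS[token]


def solve(expression: str) -> Result:
    """Break the task into small steps and apply plain rules to the symbols."""
    total = 0
    sign = 1
    calls = 0
    for token in expression.split():
        if token == "plus":
            sign = 1            # rule: the next number is added
        elif token == "minus":
            sign = -1           # rule: the next number is subtracted
        else:
            total += sign * perceive(token)
            calls += 1          # only number words reach the recognizer
    return Result(answer=total, recognizer_calls=calls)


result = solve("three plus four minus two")
```

In this toy case the operators never touch the recognizer at all; they are handled by two cheap rules. Real hybrid systems are far more involved, but the division of labor is the same in spirit.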

The result is striking. Early findings suggest that energy usage can drop dramatically while accuracy improves. That flips the traditional assumption that better performance always requires more power.

From Bigger Models to Smarter Systems

This feels like a turning point. For years, progress in AI has been driven by scale. Bigger models, more data, more compute. But that approach comes with increasing costs, both financially and environmentally.

Now, the focus is starting to shift. Efficiency is becoming just as important as raw capability. Advances in memory optimization and compact hardware are reinforcing this direction, enabling the deployment of powerful AI systems on much smaller devices.

This is not just a technical improvement. It changes how and where AI can exist.

What This Means for Businesses Like Mine

When I think about the implications, the biggest shift is accessibility. If the cost of running AI drops significantly, the barrier to entry collapses.

Tasks that once required expensive cloud infrastructure could run locally. Automation becomes cheaper, faster, and more scalable. Instead of limiting AI to large organizations, it becomes available to smaller teams and independent operators.

That opens the door to something bigger. It is not just about saving money. It is about enabling entirely new ways of working.

Why Lower Costs Create New Opportunities

What I find most interesting is how cost reductions unlock new behavior. When something becomes cheaper by an order of magnitude, people stop optimizing for scarcity and start experimenting freely.
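A quick back-of-the-envelope sketch shows why. The numbers below are made up for illustration (a hypothetical budget and hypothetical per-run costs, both in cents to avoid rounding issues), not real pricing.

```python
def runs_within_budget(budget_cents: int, cost_per_run_cents: int) -> int:
    """How many runs a fixed budget pays for at a given cost per run."""
    return budget_cents // cost_per_run_cents


BUDGET_CENTS = 10_000       # hypothetical: $100 to spend
OLD_COST_CENTS = 100        # hypothetical: $1.00 per run
NEW_COST_CENTS = 10         # hypothetical: ten times cheaper

runs_before = runs_within_budget(BUDGET_CENTS, OLD_COST_CENTS)
runs_after = runs_within_budget(BUDGET_CENTS, NEW_COST_CENTS)
```

With the same budget, 100 carefully rationed runs become 1,000. At that point it stops making sense to agonize over each one.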

This means more personalized experiences, deeper data analysis, and broader automation across everyday workflows. Things that once felt excessive or impractical suddenly become standard.

The real impact is not just efficiency. It is expansion.

The Shift That Changes Everything

Looking at the bigger picture, I see a transition from power-hungry AI to efficiency-driven AI. That shift matters because it removes one of the biggest constraints on growth.

Instead of hitting an energy ceiling, the industry is finding ways to do more with less. And when that happens, adoption accelerates.

What felt like a looming limitation is starting to look like an opportunity. The systems are getting smarter, cheaper, and more practical at the same time.

That combination is what turns a powerful technology into a universal one.