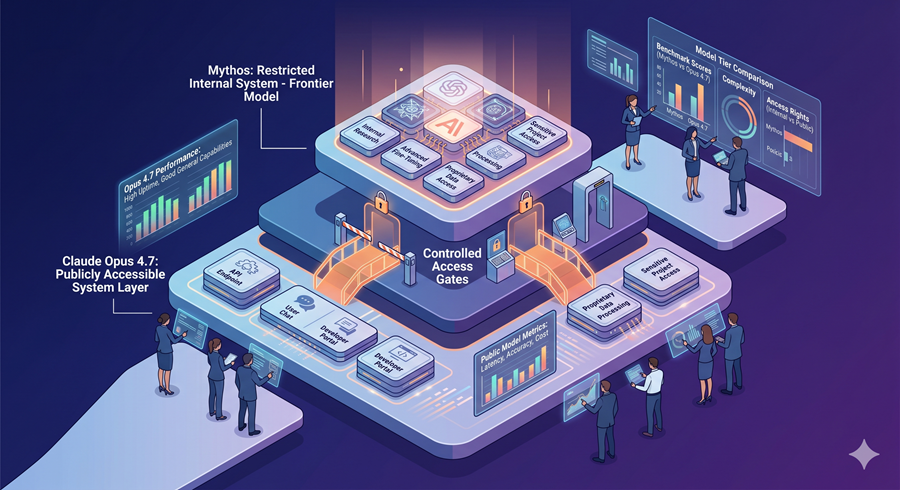

Claude Opus 4.7 has officially launched, and it brings a leap in performance that is hard to ignore. What makes this update more interesting is its timing: it arrives right after the announcement of Mythos, a much more powerful AI model that has not been released to the public. This raises a natural question. If Mythos is so advanced, why release Opus 4.7 at all, especially when Opus 4.7 appears to be closing the gap with Mythos?

Massive Performance Improvements in Opus 4.7

The jump from Opus 4.6 to 4.7 is not small. It is a significant upgrade across multiple benchmarks, and the improvement is most noticeable on coding tasks. Some scores rose by more than 10 points, a large gain for a single version update. This suggests that Anthropic is heavily focused on making its models better at software engineering.

This focus is not random. Coding is one of the most valuable use cases for AI today, and companies are willing to pay for tools that can write, debug, and optimize code efficiently. By improving coding performance, Anthropic strengthens its position in the enterprise market, and that enterprise revenue can in turn fund further model development, creating a self-reinforcing growth cycle.

The Mystery Behind Mythos

Mythos appears to be a completely new generation of AI. It is rumored to be trained at a much larger scale than the Opus models. Even in its early version, it outperforms Opus 4.7 on almost every benchmark. Despite this, Anthropic has decided not to release it publicly.

One of the biggest reasons appears to be safety. Mythos performs at a much higher level on cybersecurity-related tasks, which means it could be used for harmful purposes if not properly controlled. Interestingly, Opus 4.7 scores slightly lower in this area than Opus 4.6 did, which suggests that certain capabilities may have been intentionally limited.

Balancing Power and Safety

Anthropic has indicated that Opus 4.7 is being used to test safety systems before they are applied to more advanced models like Mythos. These include filters, restrictions, and monitoring systems designed to prevent misuse. It is a cautious approach: safety measures are refined step by step instead of the most powerful model being released immediately.

However, there is an interesting twist. Reports suggest that Mythos is actually more aligned than the Opus models, meaning it behaves more safely in many situations. Even so, it remains unreleased. This suggests that raw capability can itself be a risk, even when the model is well-behaved.

Real-World Improvements That Matter

Opus 4.7 is not just better on benchmarks; it also performs better on real-world tasks. It follows instructions more strictly, which means users need to be more precise when writing prompts. Its visual understanding has also improved, so it interprets images more accurately.

Another important improvement is long-context reasoning. The model can process and keep track of large amounts of information within a single long conversation, which makes it useful for complex projects, research tasks, and detailed workflows.

The Bigger Industry Trend

This release highlights a bigger shift in the AI industry. Companies are no longer just racing to build the most powerful models. They are also deciding carefully what to release and what to hold back. There is now an invisible boundary between public models and internal systems.

Opus 4.7 sits just below that boundary. It is powerful enough to handle complex tasks but still controlled enough to be considered safe for public use. Mythos, on the other hand, represents the next level of AI capability that is still being tested behind the scenes.
