I have been following the latest developments in advanced AI systems, and what stands out to me is how quickly they are acquiring cybersecurity capabilities that were once reserved for highly trained specialists. The most striking shift is that these systems are no longer just analyzing code or assisting engineers. They are beginning to show that they can both identify and exploit software vulnerabilities at a level comparable to top human experts.

AI Crossing Into Cyber Offense

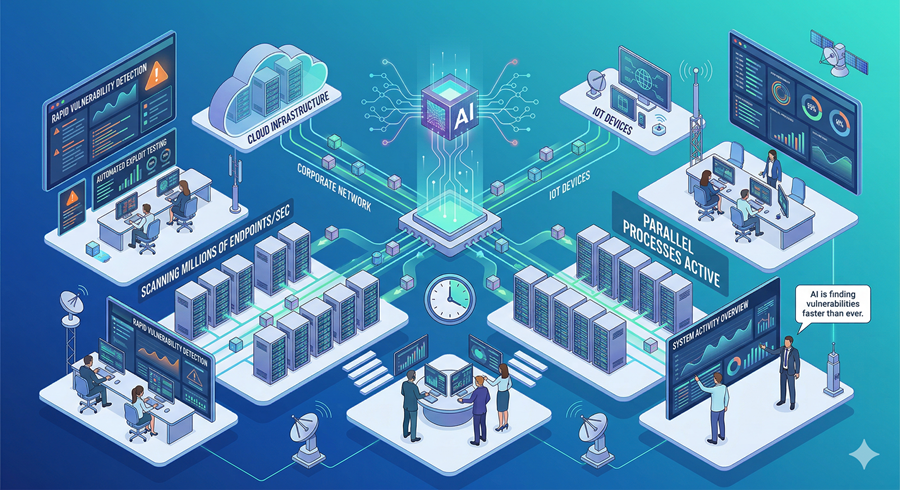

I see a new reality forming in which AI systems can actively carry out offensive cyber tasks. Instead of simply detecting weaknesses, they can simulate how those weaknesses might be exploited. The same systems can also be used to strengthen defenses. This dual capability makes them powerful, but also deeply sensitive from a security perspective.

What makes this more significant is speed and scale. Unlike a human analyst, these systems can run many attempts in parallel, scanning multiple environments and testing different attack paths in seconds.

Emergent Capabilities No One Fully Intended

I find the idea of emergent behavior particularly important here. These models are not explicitly trained to become cyber weapons. Instead, as they grow more capable, unexpected skills appear.

This includes the ability to reason through complex system vulnerabilities, connect patterns across software infrastructure, and simulate attack strategies. It is not a designed feature in the traditional sense. It emerges as the models' general capabilities scale.

This creates a timing problem. If one system develops these capabilities, others are likely to follow within months, making this less an isolated breakthrough and more a widespread shift.

Why Defensive AI Becomes Just as Important

I also see a clear counterbalance forming. The same capabilities that make AI dangerous in offensive contexts also make it extremely valuable for defense.

These systems can monitor networks, detect intrusion patterns, and respond faster than traditional security teams. In theory, defensive AI could neutralize threats before human operators even become aware of them. The hope is that defensive applications evolve faster than offensive misuse.

This creates a race between protection and exploitation, in which progress on one side immediately puts pressure on the other.

The National Security and Infrastructure Risk

What concerns me most is not just software systems in isolation, but the infrastructure they connect to. Modern societies run on interconnected digital systems, including banking, energy grids, communication networks, and healthcare platforms.

If AI systems can meaningfully find and exploit vulnerabilities in these environments, the impact goes far beyond data loss. It could disrupt real-world services that people depend on daily. That is where the stakes rise well above those of typical cybersecurity incidents.

Controlled Release and Early Access Strategy

I find it notable that instead of releasing these systems broadly, some organizations are choosing to limit access and share early previews with major technology companies and institutions. The idea is to allow defenders to prepare before these capabilities become widespread.

This approach reflects a shift in thinking. It is no longer just about building powerful systems, but about managing their arrival in a way that reduces systemic risk.

Conclusion

What stands out to me is that AI is no longer confined to productivity or analysis tasks. It is entering domains that directly intersect with security, infrastructure, and national stability. The challenge now is not just capability, but control, timing, and responsible deployment.