Most people focus on AI models, but I have started to see something deeper. The real battle is not just about software. It is about the chips powering everything behind the scenes.

Once I understood that, the entire AI landscape looked different.

How GPUs Became the Backbone of AI

It all begins with GPUs. Originally built for gaming, they turned out to be perfect for AI. The reason is simple. They can process thousands of calculations at the same time.

This kind of parallel computing is exactly what AI needs. Training a model means processing massive amounts of data simultaneously, and GPUs handle that better than traditional CPUs.
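To make the idea concrete, here is a minimal sketch in plain Python. It is my own illustration, not how any GPU library is actually implemented. Matrix multiplication is the core operation in neural networks, and each output cell depends only on one row and one column, so every cell can be computed independently. The function names (`cell`, `matmul_sequential`, `matmul_parallel`) and the `workers` parameter are hypothetical, and the thread pool only stands in for GPU cores. In CPython it will not actually run faster.

```python
# Illustrative only: threads stand in for GPU cores to show that the work
# splits into independent pieces. This is not a performance demo.
from concurrent.futures import ThreadPoolExecutor


def cell(a, b, i, j):
    """Dot product of row i of a with column j of b (one output cell)."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul_sequential(a, b):
    """Compute every output cell one after another, CPU style."""
    return [[cell(a, b, i, j) for j in range(len(b[0]))] for i in range(len(a))]


def matmul_parallel(a, b, workers=4):
    """Hand each output cell to a worker; no cell depends on any other."""
    rows, cols = len(a), len(b[0])
    coords = [(i, j) for i in range(rows) for j in range(cols)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        flat = list(pool.map(lambda ij: cell(a, b, ij[0], ij[1]), coords))
    # Regroup the flat list of cells back into rows.
    return [flat[r * cols:(r + 1) * cols] for r in range(rows)]


A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(matmul_sequential(A, B))  # [[19, 22], [43, 50]]
print(matmul_parallel(A, B))    # same result, computed cell by cell in any order
```

Both versions give the same answer. The point is that the second one never needs one cell to finish before another starts, which is the property a GPU exploits with thousands of cores.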

That shift became clear around 2012, when AlexNet, a neural network trained on GPUs, won the ImageNet image recognition competition by a wide margin and showed just how powerful this approach could be. Since then, GPUs have become the default engine for training and running AI systems.

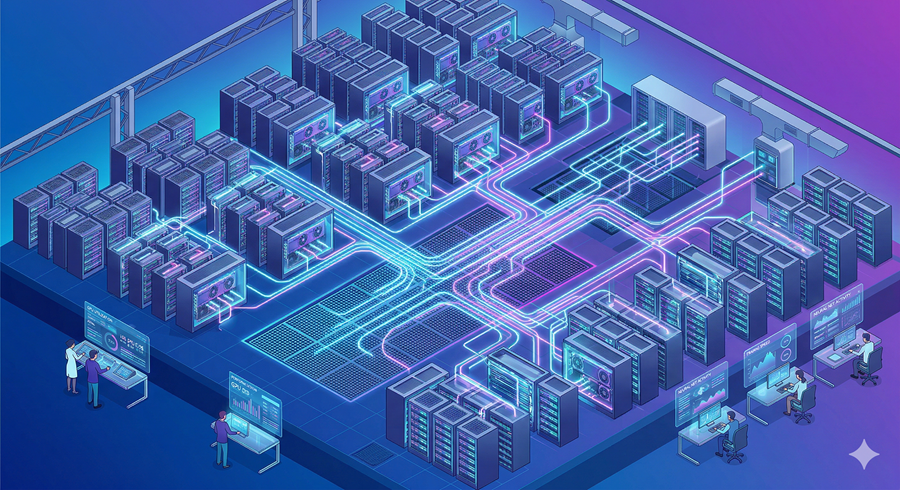

Today, entire data centers are filled with these chips, working together like a single massive brain.

The Shift Toward Specialized AI Chips

But GPUs are no longer the only game in town. I am seeing a strong push toward custom chips designed for specific AI tasks.

These are called ASICs (application-specific integrated circuits), and unlike general-purpose GPUs, they are built for a narrow set of operations. That makes them faster and more power-efficient for certain workloads, especially when it comes to running AI in real-world applications.

The trade-off is flexibility. Once a chip is manufactured, its design is fixed, so it cannot easily adapt to new kinds of models. Still, for large companies running AI at scale, the efficiency gains are worth it.

That is why major players are designing their own chips. It gives them control, reduces costs over time, and decreases reliance on external suppliers.

Training vs Inference: Where the Real Demand Lies

There are two main phases in AI: training and inference.

Training is where models learn. It is expensive and compute-heavy, which is why GPUs dominate here.

Inference is where AI actually gets used. Every time you interact with an AI system, that is inference. And this is where things are shifting fast.

As AI adoption grows, inference is becoming the bigger opportunity. A single request needs far less compute than a training run, but requests happen far more often. That is exactly where specialized chips shine.
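A quick back-of-the-envelope sketch shows why volume matters. Every number below is made up for illustration and measured in arbitrary "compute units", not taken from any real model or provider. The function names are hypothetical too.

```python
import math


def requests_to_match_training(training_cost, cost_per_request):
    """How many inference requests add up to the one-time training cost."""
    return math.ceil(training_cost / cost_per_request)


def total_inference_cost(cost_per_request, requests_per_day, days):
    """Cumulative inference cost over a period of steady usage."""
    return cost_per_request * requests_per_day * days


# Hypothetical figures in arbitrary compute units (assumptions, not data).
TRAINING_COST = 1_000_000   # paid once
COST_PER_REQUEST = 0.5      # paid on every single request
REQUESTS_PER_DAY = 100_000

print(requests_to_match_training(TRAINING_COST, COST_PER_REQUEST))
print(total_inference_cost(COST_PER_REQUEST, REQUESTS_PER_DAY, days=30))
```

Under these assumed numbers, inference overtakes the training bill within a few weeks of steady use. The real ratio will differ from system to system, but the shape of the argument is the same: a small per-request cost multiplied by a huge request count ends up dominating.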

This shift is quietly reshaping the entire hardware market.

AI Moving From Cloud to Device

Another trend I find fascinating is the move toward on-device AI.

Instead of relying entirely on cloud servers, AI is starting to run directly on phones, laptops, and even cars. This is made possible by smaller, dedicated processors, often called neural processing units (NPUs), built into everyday devices.

These chips are cheaper than data center hardware, faster for real-time use because there is no round trip to a server, and better for privacy since data does not need to leave the device.

It changes how we think about AI. It becomes more personal, more immediate, and less dependent on internet connectivity.

A Growing and Crowded Battlefield

What makes this space even more intense is how many companies are entering it.

From established chipmakers to cloud giants and startups, everyone wants a piece of the AI hardware stack. Some focus on flexibility, others on efficiency, and some on entirely new architectures.

Yet despite all this competition, one thing is clear to me. The demand is so massive that there is room for many winners.

This is not just a tech trend. It is an infrastructure race, and the companies that control the chips may end up shaping the future of AI itself.
