I have been watching the AI space evolve quickly, but this moment feels different. It is not just about better models or bigger funding rounds anymore. It is about control. Control over infrastructure, influence, and ultimately how society adapts to what comes next.

What stands out to me is how openly the conversation has shifted. We are no longer asking if advanced AI will reshape society. We are starting to plan for how disruptive that shift might be.

A New Social Contract on the Horizon

One idea keeps surfacing: the current system may not hold. The argument is simple but unsettling. If AI reaches a level where it can outperform humans across most intellectual tasks, then the foundations of work, income, and economic participation could break down.

That is why proposals like public wealth funds are gaining attention. The logic is to give people a shared stake in the value AI creates, rather than concentrating it in a few companies. Alongside that, there is growing discussion around shifting taxes away from human labor and toward automated systems and corporate gains.

It is not just theory anymore. These are early blueprints for a different kind of economy.

Balancing Growth With Stability

I see a clear tension here. On one hand, AI promises massive productivity gains. On the other, it threatens to destabilize the very systems that distribute those gains.

Ideas like a four-day workweek at full pay, stronger safety nets, and automatic economic triggers are attempts to manage that transition. The goal is to respond in real time as changes unfold, rather than react after disruption hits.

Even access to AI itself is being reframed. Not as a luxury, but as something closer to a public utility. If intelligence becomes a core resource, then limiting access could deepen inequality fast.

The Strategy Behind the Vision

At the same time, I cannot ignore the strategic layer behind all of this. The same organizations pushing these ideas are also scaling at an unprecedented pace.

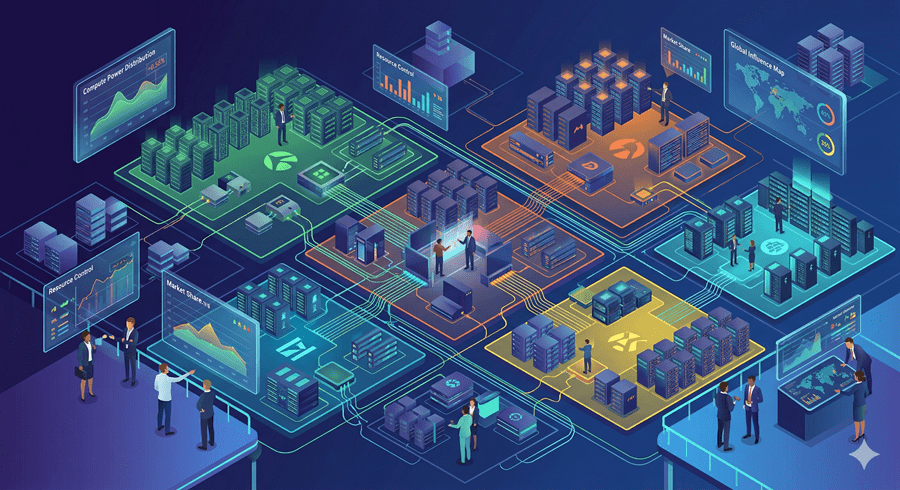

Massive funding rounds, growing user bases, and expanding product ecosystems all point to one thing: AI is becoming infrastructure. The more compute and capability a company controls, the more influence it has over how the future is built.

Bringing everything into one unified system is part of that strategy. The aim is a single interface that understands intent, executes tasks, and becomes the default layer between humans and digital work.

AGI: Closer Than It Sounds?

There is also a growing confidence, at least from some leaders, that artificial general intelligence is not far away. The claim is that we may already be most of the way there, with rapid improvements pushing AI from partial usefulness to handling the majority of tasks.

But not everyone agrees. Critics argue that current systems are still fundamentally limited, more pattern imitators than true thinkers. They warn that scaling alone may not lead to genuine intelligence.

I find myself somewhere in the middle. Progress is undeniable, but the endpoint is still uncertain.

Real-World Consequences Are Already Here

While the debate continues, the real-world impact has already begun. People are relying on AI for decisions that carry serious consequences, sometimes without fully understanding its limitations.

This is where things get complicated. The technology is advancing faster than the systems meant to regulate or guide it.

What I see now is a race happening on multiple levels at once. A race to build, to fund, to influence, and to define the rules. And beneath all of it is a bigger question.

Not just what AI becomes, but who gets to decide.
