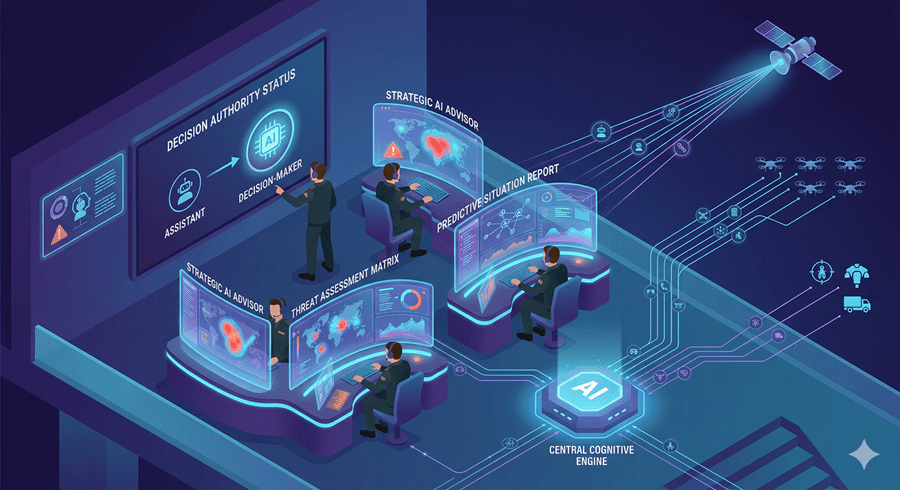

I used to think of AI as a tool that supports decisions. Now I’m not so sure. The line between assistance and authority is starting to blur, especially in warfare.

Today, algorithms are no longer sitting quietly in the background. They are actively shaping outcomes. In some cases, they are influencing decisions that determine who lives and who dies.

From Data Overload to Instant Decisions

Modern warfare generates an overwhelming amount of information. Satellites, drones, intercepted communications, and ground intelligence all produce constant streams of data. No human team can fully process it in real time.

That is where AI stepped in. Systems were trained to scan images, detect patterns, and identify potential threats faster than any analyst ever could. What once took days now takes seconds.

At first, this seemed like progress. Better data processing meant better decisions. But speed changes everything.

The Kill Chain Is No Longer Fully Human

Military operations follow structured steps, from identifying a target to assessing the outcome of a strike. Traditionally, humans controlled every stage.

Now, AI is embedded across that entire process. It can locate targets, track movements, analyze behavior, and even recommend actions. In some cases, it generates prioritized lists of targets based on probability and perceived threat.

I find this shift unsettling. When a machine helps decide what gets targeted, it becomes part of the chain of consequences.

The Dangerous Trade-Off: Speed vs Judgment

Speed is the biggest advantage AI brings to warfare. Acting faster than an opponent can mean survival. It allows forces to anticipate moves and respond instantly.

But there is a cost. The faster decisions are made, the less time there is to question them.

Humans used to have time to verify intelligence, challenge assumptions, and reconsider actions. That time is shrinking. When decisions happen at machine speed, oversight becomes thinner.

And AI is not perfect. It does not truly understand context. It recognizes patterns based on data, and that data can be flawed, outdated, or incomplete.

A small error in a low-stakes setting is manageable. In war, it can be catastrophic.

When Mistakes Become Tragedies

History has shown that even human-led operations can fail because of bad intelligence. AI introduces a new kind of risk: it can scale those mistakes.

If a system misidentifies a target, that error can move quickly through the chain, influencing decisions before anyone has time to intervene. Civilian structures can be mistaken for military assets. Patterns can be misread.

And when that happens, the consequences are irreversible.

This is what makes the current moment so critical. We are not just dealing with faster tools. We are dealing with systems that can amplify both accuracy and error at unprecedented speed.

A Future We May Not Control

For now, humans are still officially in charge. Final decisions are meant to remain with commanders. But I question how long that balance can hold.

As reliance on AI grows, so does the temptation to trust it completely. When systems consistently outperform humans in speed and analysis, deferring to them becomes the easier choice.

That is how control shifts, gradually and almost invisibly.

What concerns me most is not that AI is being used in war. It is that warfare itself is adapting to AI, becoming faster, more automated, and less dependent on human judgment.

And once that shift is complete, there may be no way to slow it down.
