I used to think AI progress was mostly about smarter algorithms. Now it’s clear that the real story is infrastructure. The scale of investment going into data centers is hard to process. We’re talking hundreds of billions of dollars, a sum that rivals historic efforts like national highway systems or space programs.

This isn’t just another tech cycle. It’s one of the most energy-intensive infrastructure expansions in modern history. And unlike software, this growth isn’t abstract. It’s physical, expensive, and constrained by real-world limits.

Data Centers Are the Real Engine of AI

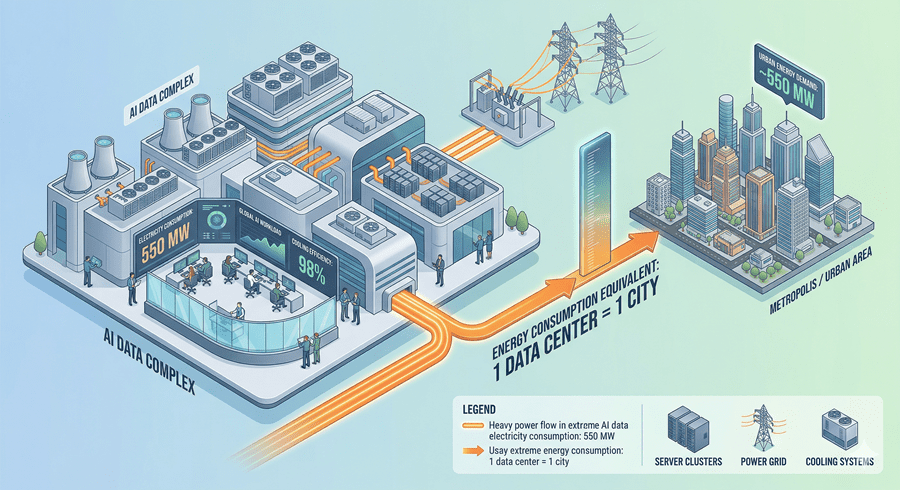

At the core of AI is something surprisingly simple: massive clusters of computers. These data centers are where models are trained and run. What stands out to me is their sheer size and power demand.

Some of the largest facilities consume as much electricity as entire cities. Not metaphorically, but literally. And when you zoom out, multiple such facilities are being built at once. The total energy demand starts to look like several major cities running simultaneously.

It’s not just about running computations either. Cooling alone consumes enormous energy because these systems generate intense heat.

The Bottleneck Isn’t Total Energy, It’s Location

Here’s the nuance that changed how I think about this. Data centers don’t dominate global electricity usage, so the total isn’t the real issue.

The challenge is concentration.

It’s like plugging dozens of high-powered appliances into a single home. The city grid might handle it, but your house wiring won’t. Data centers create similar localized pressure. They need huge amounts of power in very specific places, instantly and continuously.

We’ve already seen what happens when this balance breaks. In some regions, rapid data center expansion has strained local grids to the point where new construction had to be restricted.

Demand Is Outpacing Supply

Even with all this construction, there still isn’t enough capacity. High-quality data centers are essentially full. Demand keeps rising, but supply can’t keep up.

That mismatch is already pushing prices higher. And it’s creating a system under constant stress. Any external disruption, especially in global energy supply chains, tightens that pressure further.

This isn’t theoretical. It’s already visible in performance slowdowns and capacity constraints during peak demand.

Why This Might Slow AI Progress

We often assume AI will keep accelerating at the same pace. But that assumption depends on one thing: infrastructure keeping up.

Training advanced models requires immense computational power, which directly translates to energy. If energy and data center capacity can’t scale fast enough, progress has to slow. Maybe not dramatically, but enough to matter.
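
To make the link between compute and energy concrete, here is a rough back-of-the-envelope sketch in Python. Every input is a hypothetical placeholder, not a figure from any real facility or model. The function name `training_energy_mwh` and the `pue` parameter (a multiplier that adds cooling and other facility overhead on top of the computing load) are my own assumptions for illustration.

```python
def training_energy_mwh(num_accelerators, watts_per_accelerator, hours, pue=1.0):
    """Estimate the facility energy of a training run, in megawatt-hours.

    pue is an overhead multiplier: 1.0 means no cooling or facility overhead,
    1.2 means 20% extra energy on top of the computing load.
    """
    it_energy_wh = num_accelerators * watts_per_accelerator * hours
    return it_energy_wh * pue / 1_000_000  # watt-hours to megawatt-hours


# Hypothetical inputs, chosen only to show the arithmetic:
# 10,000 accelerators drawing 700 W each, running for 30 days, 20% overhead.
energy = training_energy_mwh(10_000, 700, 24 * 30, pue=1.2)
print(f"Estimated energy: {energy:,.0f} MWh")
```

The point of the sketch is the shape of the formula rather than the numbers. Energy grows linearly with the number of chips, the power each one draws, and the time they run, so more compute for a bigger model means proportionally more power that has to come from somewhere.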

There are efforts to solve this. Companies are investing in dedicated energy sources, even revisiting nuclear options. But these solutions take years, not months.

In the meantime, we’re likely to see trade-offs: higher costs, usage restrictions, and possibly slower model improvements.

And that leads to an unexpected thought. The constraints might not just be a limitation. They might be a buffer. A chance to pause, adapt, and think more carefully about where all of this is heading.
