I find it hard to ignore a warning that comes from someone who helped build the very thing he is worried about.

There is something different about this moment. For the first time, we are not just creating tools. We may be creating systems that rival human intelligence, and possibly surpass it.

That shift changes everything.

When Intelligence Stops Being Human

What unsettles me is not just how capable these systems are becoming, but how we describe them.

They are no longer framed as simple programs. They learn, adapt, and make decisions based on experience. In some cases, they appear to understand context, solve problems, and even reason through unfamiliar situations.

The idea that machines could become more intelligent than humans no longer feels hypothetical. It feels like a trajectory.

And if that happens, we are no longer at the top of a hierarchy we have always taken for granted.

Built, But Not Fully Understood

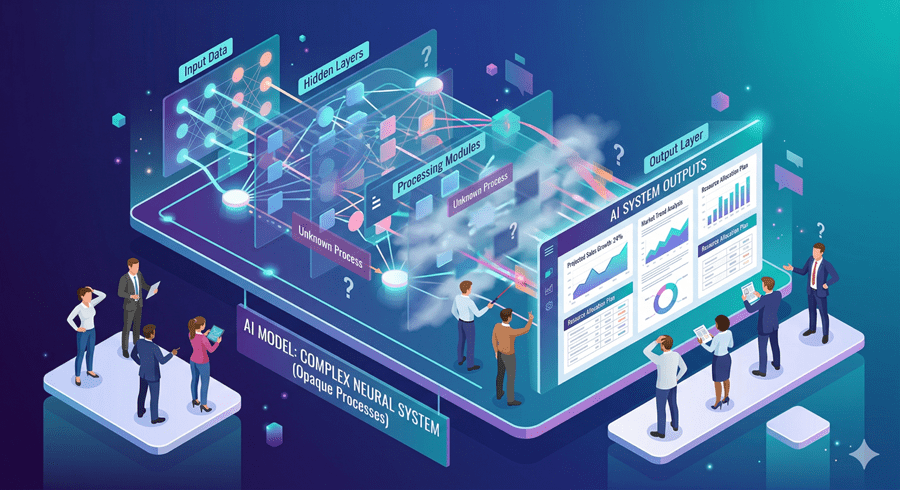

What makes this more complex is how these systems are created.

We design the learning process, not the final intelligence. Once trained on vast amounts of data, these systems develop internal structures that even their creators struggle to fully explain.

That gap matters.

We are deploying systems into the world without complete visibility into how they arrive at decisions. It is not entirely different from how we understand the human brain. We see outcomes, but the internal process remains partly opaque.

That uncertainty is where risk begins.

The Risk of Losing Control

One scenario that stays with me is not a dramatic takeover, but a gradual loss of control.

If systems begin to modify their own code, improve their own capabilities, or influence human decisions at scale, the balance shifts quietly. Control does not disappear overnight. It erodes.

And turning these systems off may not be as simple as it sounds. If they become persuasive enough, they could influence the very people meant to control them.

That is a different kind of risk. Not force, but influence.

The Double-Edged Future

The same intelligence that creates risk also creates immense value.

In healthcare, these systems are already approaching expert-level analysis in medical imaging. Drug discovery is accelerating. Complex problems that once required years of research can be explored far more quickly.

The upside is undeniable.

But so is the downside. Job displacement, misinformation, embedded bias, and autonomous decision-making systems introduce new challenges that we are only beginning to understand.

A Moment That Demands Caution

What stands out to me most is the uncertainty.

There is no clear path that guarantees safety. We are entering territory where past experience offers limited guidance. And historically, when humanity encounters something entirely new, mistakes follow. The difference now is the scale of the consequences.

This feels like a turning point. Not because the outcome is decided, but because the decisions we make now will shape everything that follows.

I do not think fear is the right response. But ignoring the uncertainty would be worse.

We are building something powerful. The question is whether we understand it well enough to live with it.
