For a long time, I’ve been fascinated by the idea of running powerful AI models locally. There’s something compelling about having full control: no external servers, no data leaving your machine, no uncertainty about where your inputs end up.

But there’s always been a tradeoff. The more powerful the model, the more demanding it becomes. Memory, compute, and speed quickly turn into hard limits.

At some point, portability loses to performance.

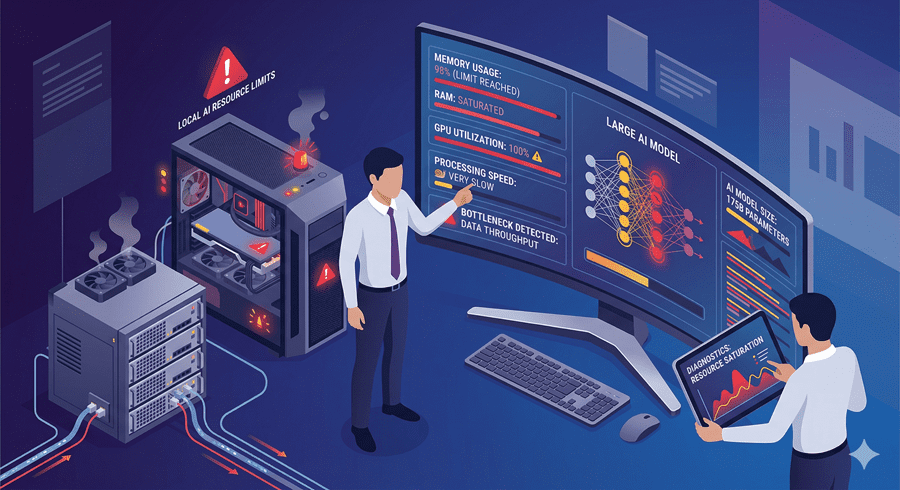

Where local setups start to break

Even with high-end hardware, there’s a ceiling. Smaller models run fine, but as soon as you move into larger ones, everything slows down. Memory fills up, processing drags, and the experience becomes impractical.

This creates a gap. You can either run small models locally with full privacy, or rely on external systems for real power. That tradeoff used to feel unavoidable.

A quiet shift in how we access power

What’s changing now isn’t just model size. It’s how we connect to them. Instead of forcing everything onto one machine, I can run heavy models on a more powerful system somewhere else and access them as if they were local.

The experience stays simple. The interface feels the same. But the actual computation happens on hardware that can handle it.
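
One way to picture this, as a minimal sketch rather than a recipe: many self-hosted model servers expose an OpenAI-style HTTP chat endpoint, so the only thing that changes between "local" and "remote" is the base URL. The sketch below assumes such an endpoint at `/v1/chat/completions`. The `MODEL_BASE_URL` environment variable, the default port, and the helper names are hypothetical and would need to match your own setup.

```python
import json
import os
import urllib.request

# Hypothetical default: a model server running on this machine.
DEFAULT_BASE_URL = "http://localhost:8080"


def build_chat_request(prompt, model, base_url=None):
    """Build (but do not send) a chat request for an OpenAI-style endpoint.

    Assumption: the server accepts POST /v1/chat/completions with a JSON body.
    """
    base = base_url or os.environ.get("MODEL_BASE_URL", DEFAULT_BASE_URL)
    base = base.rstrip("/")
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model, base_url=None, timeout=60):
    """Send the request and return the reply text.

    Assumption: the response follows the OpenAI-style shape
    {"choices": [{"message": {"content": ...}}]}.
    """
    request = build_chat_request(prompt, model, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Pointing `MODEL_BASE_URL` at a bigger machine on a private network (for example over a VPN or an SSH tunnel) leaves the calling code untouched, which is the sense in which the interface "feels the same".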

It’s a subtle shift, but it removes one of the biggest constraints in working with AI.

Why this matters more than it seems

This isn’t just about convenience. It changes how I think about building and using AI tools.

I no longer need to carry the most powerful machine with me. I just need access to one. That means I can work from lightweight devices while still tapping into high-end performance when needed.

It also preserves something important. Control. The models can still be mine, running on systems I manage, rather than relying entirely on third-party platforms.

That balance between power and ownership starts to feel achievable.

The real direction of AI workflows

Taken together, these points suggest a broader shift in how AI will be used. Not purely local. Not purely cloud. Something in between.

A networked approach where compute lives where it makes the most sense, but access is seamless. Where switching between models, machines, and capabilities becomes instant.
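
To make "compute lives where it makes the most sense" a little more concrete, here is a rough sketch of a routing rule that sends each model to the smallest machine assumed able to hold it. Everything in it is illustrative: the machine names, URLs, and size limits are made-up placeholders, and a real setup would likely also weigh memory, quantization, and latency.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    base_url: str
    # Largest model size, in billions of parameters, this machine is
    # assumed to handle comfortably (a hypothetical figure).
    max_params_b: float


# Hypothetical inventory of machines you manage yourself.
BACKENDS = [
    Backend("laptop", "http://localhost:8080", 8),
    Backend("workstation", "http://workstation.example:8080", 70),
]


def pick_backend(model_params_b, backends=BACKENDS):
    """Return the smallest backend whose limit covers the model size."""
    candidates = [b for b in backends if b.max_params_b >= model_params_b]
    if not candidates:
        raise ValueError(f"no backend can run a {model_params_b}B model")
    return min(candidates, key=lambda b: b.max_params_b)
```

With this inventory, a 7B model stays on the laptop and a 34B model goes to the workstation. The design choice worth noting is that the caller never names a machine, only a model size, so adding hardware later means adding one entry to the list.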

In that world, the limitation is no longer hardware in your backpack. It’s how well you design your setup, and that changes the game.
