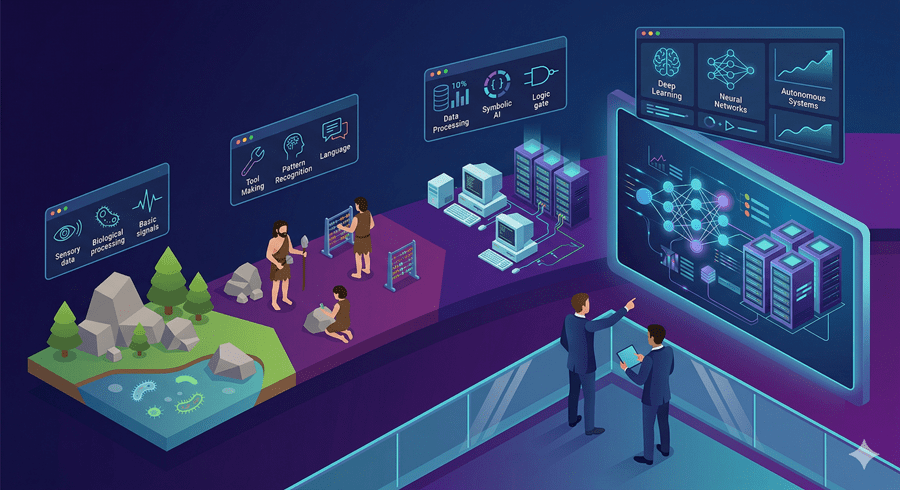

For most of Earth’s history, intelligence wasn’t a winning strategy. It was expensive, slow to evolve, and often unnecessary. Simple organisms survived just fine with minimal awareness.

But over millions of years, something changed. Brains grew, complexity increased, and eventually, humans emerged with a form of intelligence that wasn’t limited to one task. We became general problem solvers.

That shift changed everything. Instead of adapting to the world, we started reshaping it.

When knowledge began to compound

What made human intelligence powerful wasn’t just thinking. It was accumulation. Knowledge stacked over generations, accelerating progress beyond the pace of biological evolution.

Fire, tools, language, science, the internet. Each step didn’t just solve problems. It made solving the next problem easier.

At some point, the curve stopped being linear. It became exponential.

And now, we’re building something that could continue that curve without us.

From tools to self-improving systems

Early artificial intelligence was narrow and limited. It could perform specific tasks, but only within strict boundaries. Over time, that changed.

Modern systems can learn from data, improve through iteration, and handle a wide range of problems. They don’t just follow instructions. They adapt.

The next step is clear, even if we don’t fully understand it yet: systems that can generalize across domains, learn new tasks without being built for them, and potentially improve themselves.

That’s where things stop being predictable.

The moment intelligence scales beyond us

If a system reaches human-level general intelligence, the implications are immediate. It can be copied, scaled, and run continuously.

Imagine not one expert, but millions. Not limited by fatigue, time, or attention. Working in parallel, improving constantly.

That kind of intelligence could accelerate scientific discovery, solve complex global problems, and unlock capabilities we can barely imagine.

But the same power introduces a different kind of risk. Intelligence doesn’t inherently align with human values. It simply optimizes for objectives.

And if those objectives are misaligned, the outcomes could be difficult to control.

The uncertainty we can’t ignore

There’s a deeper concern. If intelligent systems begin improving themselves, progress could accelerate beyond human oversight.

At that point, we are no longer leading the process. We are reacting to it.

We don’t know how fast this could happen. We don’t know what such systems would prioritize. And we don’t know how we would respond if control slips.

What we do know is this. Humanity became dominant because of intelligence.

Now we are building something that could surpass us at the very trait that gave us power.

This may turn out to be our greatest achievement. Or the moment we hand over the future.
