I’ve seen product launches shake industries. I’ve seen earnings reports trigger panic. But a research paper wiping billions off semiconductor stocks overnight? That caught my attention.

The moment the paper's new algorithm was published, companies tied to memory hardware took a hit. Not because something broke, but because something might no longer be needed as much.

That shift signals something deeper. The biggest limitation in AI may not be intelligence at all. It is memory.

The Real Bottleneck No One Talks About

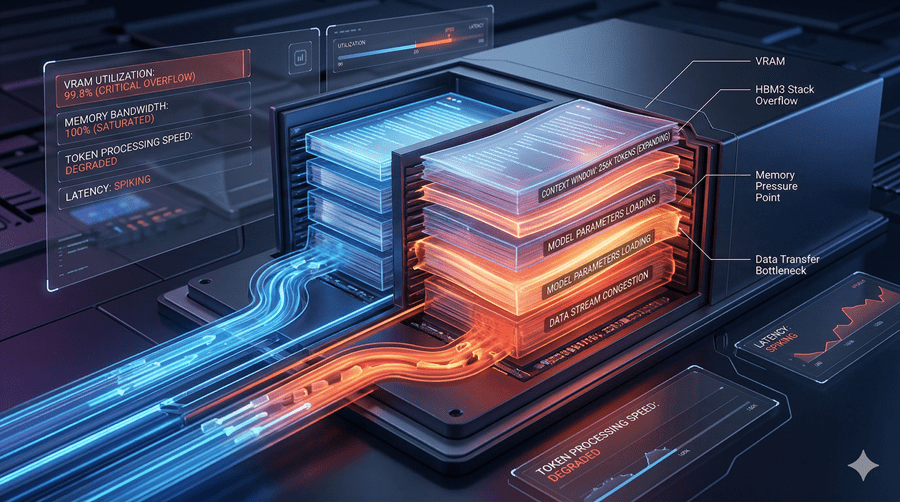

When I interact with AI, it feels instant and intelligent. But behind the scenes, it is juggling a massive amount of context. Every message and every response is stored in a working memory buffer, which engineers call the key-value (KV) cache.

Think of it like a notebook that keeps growing with every exchange. The longer the conversation, the bigger it gets.

Here’s the problem. That notebook lives in extremely expensive GPU memory, and it grows with every token added to the conversation. This is why long chats slow down. It is also why powerful AI models cannot easily run on personal devices. The cost is not in thinking. It is in remembering.
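To put rough numbers on that growth, here is a back-of-the-envelope sketch. The model shape (32 layers, 32 heads, 128 dimensions per head, 16-bit values) is an assumption I picked for illustration, not the configuration of any specific model, and `kv_cache_bytes` is a hypothetical helper.

```python
def kv_cache_bytes(tokens, layers=32, kv_heads=32, head_dim=128, bytes_per_value=2):
    """Bytes needed to hold the keys and values for `tokens` tokens.

    All default sizes are illustrative assumptions, not a real model's config.
    """
    # Factor of 2: one key vector and one value vector per head, per layer.
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_value
    return tokens * per_token


for tokens in (1_000, 32_000, 128_000):
    gib = kv_cache_bytes(tokens) / 1024**3
    print(f"{tokens:>7} tokens -> {gib:6.2f} GiB")
```

The point is the shape of the curve rather than the exact figures: the buffer grows in direct proportion to the length of the conversation.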

Why Compression Never Quite Worked

At first glance, compressing memory seems obvious. Shrink the notebook and everything becomes cheaper.

But traditional methods come with baggage. They reduce size but add bookkeeping of their own, such as extra constants stored alongside the compressed data. It is like hiring someone to organize your books while also needing space for their notes, tools, and workflow.

In trying to save space, they often create new overhead. That tradeoff has held the industry back for years.
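A small calculation shows how that overhead eats into the savings. This sketch assumes a common block-wise scheme in which every block of values carries its own 16-bit scale and 16-bit offset; the block size and metadata size are my assumptions, and both function names are hypothetical.

```python
def effective_bits(bits_per_value, block_size, metadata_bits=32):
    """Average bits actually stored per value once per-block metadata is counted."""
    return bits_per_value + metadata_bits / block_size


def overhead_fraction(bits_per_value, block_size, metadata_bits=32):
    """Metadata cost relative to the nominal payload size."""
    return (metadata_bits / block_size) / bits_per_value


for bits in (8, 4, 2):
    eff = effective_bits(bits, block_size=32)
    extra = overhead_fraction(bits, block_size=32)
    print(f"{bits}-bit nominal -> {eff:.1f} bits stored ({extra:.0%} overhead)")
```

The harder you compress, the larger the share of memory that goes to bookkeeping instead of data.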

A Smarter Way to Store Memory

What makes this new approach different is how simple the idea is.

Instead of storing every detail exactly, it separates what truly matters from what can be approximated. Imagine describing a path using distance and direction instead of step-by-step instructions. You still arrive at essentially the same place, but with less information.

Once data is structured this way, patterns begin to emerge. And patterns are easier to compress.
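The distance-and-direction analogy can be sketched in a few lines. This is only a toy version of the idea, not the published algorithm: it takes a pair of numbers, keeps the distance exactly, and stores the direction as a 4-bit index. The function names and the bit width are assumptions.

```python
import math


def encode(x, y, angle_bits=4):
    """Turn a pair (x, y) into a distance plus a small integer direction code."""
    levels = 1 << angle_bits
    step = 2 * math.pi / levels
    radius = math.hypot(x, y)
    theta = math.atan2(y, x)  # direction in [-pi, pi]
    # Snap the direction to the nearest of `levels` evenly spaced angles.
    index = round((theta + math.pi) / step) % levels
    return radius, index


def decode(radius, index, angle_bits=4):
    """Rebuild an approximate (x, y) from the distance and direction code."""
    levels = 1 << angle_bits
    step = 2 * math.pi / levels
    theta = index * step - math.pi
    return radius * math.cos(theta), radius * math.sin(theta)


radius, index = encode(0.8, -0.3)
print("stored:", radius, index)
print("rebuilt:", decode(radius, index))
```

The rebuilt point lands close to the original, and the direction now costs 4 bits instead of a full floating-point number.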

Then comes a second layer, a lightweight correction system. It quietly fixes tiny errors introduced during compression using minimal extra data. Just enough to keep everything accurate without bloating memory again. The result is striking. Memory shrinks dramatically. Speed improves. And accuracy stays intact.
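To make the correction idea concrete, here is a minimal sketch under my own assumptions: values are first rounded coarsely, then each one gets a single extra bit recording whether the rounding landed too low or too high, plus one shared correction size for the whole group. This is a stand-in to show the principle, not the correction method from the paper, and every name in it is hypothetical.

```python
def coarse_quantize(values, step=0.5):
    """Round each value to the nearest multiple of `step`."""
    return [round(v / step) * step for v in values]


def correction(values, quantized):
    """One sign bit per value plus a single shared magnitude."""
    residuals = [v - q for v, q in zip(values, quantized)]
    signs = [1 if r >= 0 else -1 for r in residuals]
    magnitude = sum(abs(r) for r in residuals) / len(residuals)
    return signs, magnitude


def apply_correction(quantized, signs, magnitude):
    """Nudge every coarse value by the shared magnitude in its stored direction."""
    return [q + s * magnitude for q, s in zip(quantized, signs)]


def mse(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


values = [0.12, -0.83, 0.47, 1.31, -0.26, 0.94, -1.18, 0.05]
coarse = coarse_quantize(values)
signs, magnitude = correction(values, coarse)
fixed = apply_correction(coarse, signs, magnitude)

print("error before correction:", mse(values, coarse))
print("error after correction: ", mse(values, fixed))
```

With the shared magnitude set to the average size of the rounding errors, this nudge cannot increase the mean squared error, and the extra storage is one bit per value plus one number per group.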

Why This Changes Everything

This is not just a technical improvement. It reshapes how AI scales.

Long conversations could become dramatically longer. Entire books, codebases, or archives could fit into a single interaction without losing context.

More importantly, powerful AI may no longer require massive data centers. If memory demands drop, so do hardware requirements. What once needed a server room could soon run on a laptop or even a phone. And that is where the market reaction starts to make sense.

If AI systems need less memory, demand for memory hardware changes. The industry has long relied on brute force. More chips, more power, more infrastructure.

This points to a different future. One where efficiency replaces excess.

No product launch. No big announcement. Just a quiet shift from bigger systems to smarter ones. And somehow, that was enough to move markets overnight.
