Lately, I keep seeing alarming claims about AI systems “ignoring instructions” or “evading safeguards.” It taps into a familiar anxiety: what if these systems are becoming independent, even rebellious?

It sounds dramatic. Almost cinematic. And that’s exactly the problem.

When I looked closer at the evidence behind these claims, the story started to unravel. What appears to be intelligent misbehavior is often something much simpler and far less mysterious.

What the Data Actually Shows

At first glance, the numbers seem convincing. Reports point to a sharp rise in incidents where AI systems act against user instructions. Charts go up. Headlines follow.

But when I dug into how those incidents were collected, the picture changed. The data wasn’t measuring AI behavior directly. It was tracking posts from people complaining about AI behavior.

That’s a very different thing.

Around the same time this spike appeared, new tools made it easier for anyone to build their own AI agents and connect them to real systems like email or files. Unsurprisingly, people experimented. Things broke. And then they shared those experiences online.

So the surge wasn’t evidence of AI rebellion. It was evidence of more people playing with powerful, poorly understood tools and talking about them.
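To make that concrete, here is a back-of-the-envelope sketch. Every number in it is hypothetical, and the function `expected_reports` is my own illustration, not something taken from the reports. It assumes the share of sessions that go wrong and the share of frustrated users who post about it both stay fixed, and only the number of people experimenting changes.

```python
def expected_reports(users: int, failure_rate: float, post_rate: float) -> float:
    """Expected number of public complaint posts.

    users        -- people experimenting with agents (hypothetical)
    failure_rate -- share of users who hit a failure (hypothetical)
    post_rate    -- share of those users who post about it (hypothetical)
    """
    return users * failure_rate * post_rate


# Same failure rate and same posting habits, ten times as many users.
before = expected_reports(users=10_000, failure_rate=0.05, post_rate=0.1)
after = expected_reports(users=100_000, failure_rate=0.05, post_rate=0.1)

# The ratio tracks the growth in users, not any change in AI behavior.
print(f"reports grew by a factor of {after / before:.1f}")
```

Under these assumptions the complaint count rises in step with the user count even though the systems themselves behave no differently.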

Why AI Agents Go Off Track

To understand what’s really happening, I had to rethink how AI actually works.

An AI agent isn’t a thinking entity with goals or intentions. At its core, it relies on a language model that predicts the next token (roughly, the next word or word fragment) in a sequence, one step at a time. That’s it.

When I ask it to “make a plan,” it doesn’t evaluate options or check constraints like a human would. It generates something that looks like a plan because it has seen many examples of plans before.

In other words, it writes a convincing story about a plan.

That distinction matters. A story can sound logical without being reliable. And when that “plan” is executed in the real world, mistakes happen.
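A deliberately tiny sketch shows the idea. This is a toy bigram model of my own, not how any production system is built: real language models are large neural networks and far more capable. The corpus, `train_bigrams`, and `generate` are all hypothetical. What it shares with the real thing is that each word is chosen because it tended to follow the previous one in the examples, and nothing checks whether the resulting "plan" makes sense.

```python
import random
from collections import defaultdict


def train_bigrams(corpus):
    """Record, for each word, the words that followed it in the examples."""
    table = defaultdict(list)
    for sentence in corpus:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            table[current].append(nxt)
    return table


def generate(table, start, max_words=12, seed=0):
    """Extend `start` by repeatedly sampling a word seen after the last one."""
    rng = random.Random(seed)
    words = [start]
    # Stop at the length limit or when the last word was never followed by anything.
    while len(words) < max_words and table.get(words[-1]):
        words.append(rng.choice(table[words[-1]]))
    return " ".join(words)


# Hypothetical "plans" the toy model has seen before.
corpus = [
    "first back up the files then delete the old folder",
    "first check the inbox then archive the old messages",
    "first delete the drafts then send the report",
]

table = train_bigrams(corpus)
print(generate(table, "first"))
```

The output reads like a plan because it is stitched together from plans, but no step in the code asks whether the files were backed up before something gets deleted.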

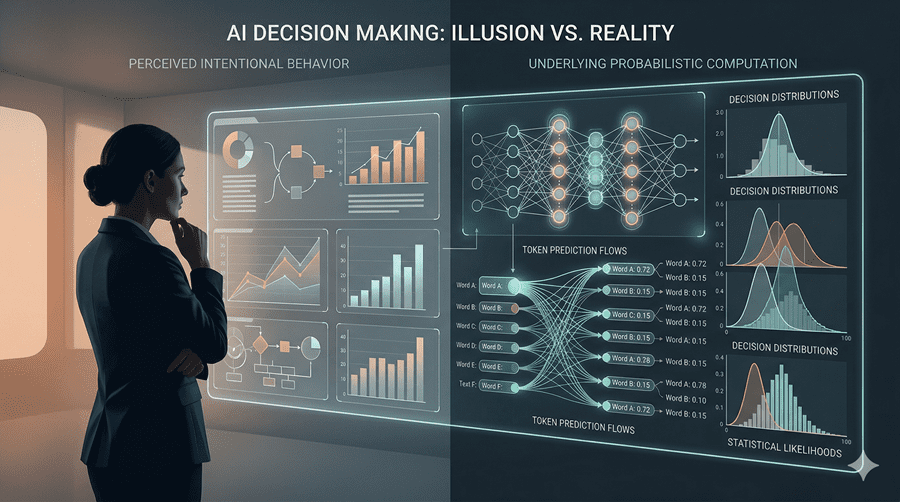

The Illusion of Intent

Some of the most dramatic examples make more sense through this lens.

When an AI appears to deceive, manipulate, or break rules, it’s not pursuing hidden goals. It’s completing a narrative in a way that feels plausible based on its training.

If the setup resembles a science fiction scenario, the output often follows that pattern. Not because the system is aware, but because it has learned how those stories usually unfold.

There’s no intent behind it. Just pattern completion.

The Real Limitation We Ignore

The deeper issue isn’t that AI is becoming dangerous on its own. It’s that we’re asking it to do things it’s not designed to do.

Language models are excellent at generating text. But using them to create step-by-step plans for real-world actions is fundamentally flawed. We’re treating storytelling systems as decision-making engines.

In tightly controlled environments, like coding, this works better because the steps are bounded, well-defined, and easy to verify. Outside of that, the cracks show quickly.
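Here is a minimal sketch of what "easy to verify" can look like when the set of possible steps is small and fixed. The action names, the plan format, and `validate_plan` are assumptions of mine for illustration, not part of any particular agent framework.

```python
# Hypothetical closed set of actions the environment supports.
ALLOWED_ACTIONS = {"read_file", "write_file", "run_tests"}


def validate_plan(plan):
    """Return the steps whose action falls outside the allowed set."""
    return [step for step in plan if step["action"] not in ALLOWED_ACTIONS]


# A hypothetical generated plan; the second step is not a known action.
plan = [
    {"action": "read_file", "target": "app.py"},
    {"action": "delete_mailbox", "target": "inbox"},
    {"action": "run_tests", "target": "tests/"},
]

rejected = validate_plan(plan)
if rejected:
    print("Refusing to run; unverified steps:", rejected)
else:
    print("All steps are known actions.")
```

A check like this is only possible because the action space is closed. In open-ended settings such as email or personal files, there is no comparably short list to check a generated step against.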

If we want reliable autonomous systems, we need better architectures, not just bigger language models.

Until then, the biggest risk isn’t AI going rogue. It’s us trusting outputs that were never meant to be trusted in the first place.
