I couldn’t believe it at first. A research paper, not a product launch or earnings report, wiped billions off semiconductor stocks overnight. Companies like Micron and Western Digital took a hit, all because of a new idea.

That idea challenges something fundamental in AI. For years, the assumption has been simple: better AI needs more hardware. More chips, more memory, more power. But what if that assumption is wrong?

The Hidden Bottleneck in AI

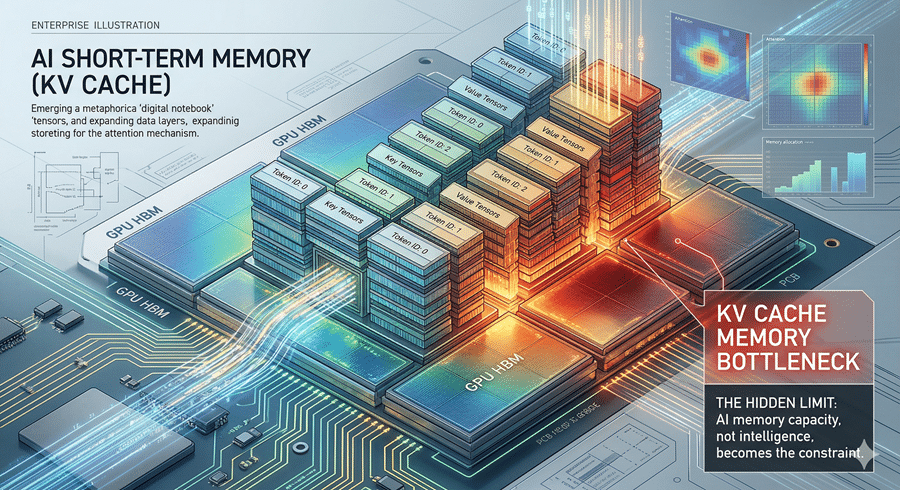

Most people think AI struggles with intelligence. That’s not the real issue. The real constraint is memory, especially short-term memory.

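Every time I interact with an AI, it has to remember the entire conversation. Not just the last message, but everything. This running context lives in what engineers call a key-value (KV) cache, which holds intermediate results for every token the model has already processed so they don’t have to be recomputed. I think of it as a notebook that keeps filling up with every exchange.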

The problem is that this notebook grows with every token of the conversation and sits in scarce, extremely expensive GPU memory. That’s why long conversations slow down and why powerful models can’t easily run on personal devices. It’s not the thinking that costs the most. It’s the remembering.
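To get a feel for how fast the notebook fills up, here is a rough back-of-the-envelope sketch. The model shape (32 layers, 32 key-value heads, 128 dimensions per head, 16-bit values) is a hypothetical configuration I picked for illustration, not a figure from the paper.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value):
    """Approximate KV cache size for one conversation.

    Each token stores one key vector and one value vector (hence the
    factor of 2) per attention head in every layer.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model shape, chosen only to make the arithmetic concrete.
size = kv_cache_bytes(
    num_layers=32,
    num_kv_heads=32,
    head_dim=128,
    seq_len=4096,        # tokens held in context
    bytes_per_value=2,   # 16-bit floats
)
print(f"{size / 2**30:.1f} GiB")  # prints "2.0 GiB"
```

Under these assumptions, the cache grows linearly with conversation length: doubling the context to 8,192 tokens doubles the memory to about 4 GiB, for a single conversation.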

Why Compression Never Really Worked Before

Shrinking that notebook seems like an obvious solution. And many have tried. But traditional compression comes with baggage.

It’s like hiring a librarian to organize your books. You save space, but now you need extra systems to manage the librarian’s work. The overhead cancels out much of the benefit.
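One common form of this overhead, assumed here purely for illustration, shows up in block-wise quantization: every small block of compressed values carries its own bookkeeping, such as a scale and an offset. The numbers below are hypothetical, but the arithmetic shows how the bookkeeping eats into the savings.

```python
def effective_bits_per_value(value_bits, block_size, metadata_bits_per_block):
    """Average storage cost per value once per-block metadata is counted."""
    return value_bits + metadata_bits_per_block / block_size


# Assumed setup: each block stores a 16-bit scale and a 16-bit offset.
METADATA_BITS = 16 + 16

# 4-bit values in blocks of 32 really cost 5 bits each.
print(effective_bits_per_value(4, 32, METADATA_BITS))

# 2-bit values in blocks of 16 really cost 4 bits each: the overhead
# doubles the footprint and cancels half of the nominal saving.
print(effective_bits_per_value(2, 16, METADATA_BITS))
```

The more aggressively the values themselves are squeezed, the larger the librarian’s share of the total becomes.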

This is where things get interesting.

A Smarter Way to Remember Less

The new approach reimagines how memory is stored. Instead of keeping every detail equally precise, it separates what truly matters from what doesn’t.

I like to think of it as directions. You can describe a route as exact steps or as a general direction plus distance. The destination stays the same, but the second version is easier to compress because patterns emerge.

That’s the core insight. When data becomes predictable, it can be stored far more efficiently.
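The directions analogy can be made concrete with a toy example. This is only my own sketch of the "direction plus distance" idea on a single pair of numbers, not the method from the paper: it converts a point to a length and an angle, then stores the angle with just a few bits.

```python
import math


def to_polar(x, y):
    """Describe a point as distance and direction instead of two steps."""
    return math.hypot(x, y), math.atan2(y, x)


def quantize_angle(theta, bits):
    """Snap an angle to one of 2**bits evenly spaced directions."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return round(theta / step) % levels


def dequantize_angle(code, bits):
    """Recover the approximate angle from its small integer code."""
    return code * (2 * math.pi / (1 << bits))


def from_polar(r, theta):
    return r * math.cos(theta), r * math.sin(theta)


# Toy input: one (x, y) pair, with the direction stored in 4 bits.
r, theta = to_polar(3.0, 4.0)
code = quantize_angle(theta, bits=4)
x_hat, y_hat = from_polar(r, dequantize_angle(code, bits=4))
print(code, round(x_hat, 3), round(y_hat, 3))
```

The angle always falls in a fixed, known range, so a small, fixed set of codes can cover it without extra per-block bookkeeping. The reconstruction is approximate, and in this toy its error is bounded by the distance times half the angular step.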

Then comes a clever second layer. Small errors from compression are corrected using tiny signals, almost like a built-in spell check. These corrections cost almost nothing in storage but prevent mistakes from piling up.
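Here is a toy version of that spell check, again my own simplification rather than the paper’s actual correction scheme. After coarse rounding, it keeps one extra bit per value recording whether the rounding went too low or too high, then nudges the reconstruction a quarter step in the right direction.

```python
def quantize(values, step):
    """Coarse rounding: keep only the nearest multiple of `step`."""
    return [round(v / step) for v in values]


def residual_signs(values, codes, step):
    """One bit per value: was the original above (+1) or below (-1)?"""
    return [1 if v - c * step >= 0 else -1 for v, c in zip(values, codes)]


def reconstruct(codes, step, signs=None):
    """Rebuild values, optionally nudged by the stored correction bits."""
    if signs is None:
        return [c * step for c in codes]
    return [c * step + s * step / 4 for c, s in zip(codes, signs)]


def max_error(original, approx):
    return max(abs(a - b) for a, b in zip(original, approx))


# Made-up sample values, used only to exercise the functions.
values = [0.12, -0.47, 0.90, 0.33, -0.08]
step = 0.5
codes = quantize(values, step)
signs = residual_signs(values, codes, step)

print(max_error(values, reconstruct(codes, step)))         # worst case: step / 2
print(max_error(values, reconstruct(codes, step, signs)))  # worst case: step / 4
```

In this sketch the single extra bit halves the worst-case error, from half a step to a quarter step, although an individual value that was already very close can end up slightly further off.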

The result is striking. Memory shrinks dramatically without losing meaningful accuracy.

What This Changes for All of Us

This isn’t just a technical improvement. It changes what AI can do in everyday life.

First, conversations get longer and more coherent. If memory becomes cheaper, AI can track far more context without slowing down.

Second, powerful AI could run locally. Models that once needed data centers might soon work on laptops or even phones.

Third, and most disruptive, is the economic shift. If AI needs less memory, demand for memory hardware could drop. That’s why the market reacted so quickly. Investors aren’t just seeing a new technique. They’re seeing a future where efficiency beats scale.

What fascinates me most is how quiet this shift was. No flashy announcement. No product reveal. Just a paper.

And yet, it signals something bigger. The era of solving AI problems by throwing more hardware at them might be ending. Smarter algorithms are starting to win.
