I’ve been watching AI hardware costs spiral out of control. Memory shortages, expensive GPUs, and overpriced laptops have made running advanced models feel increasingly out of reach. So when I came across a method claiming to cut memory usage dramatically while speeding things up, I paused. Not because of the hype, but because of the implications.

If true, this isn’t just an upgrade. It changes who gets to use powerful AI.

What’s Actually Changing Under the Hood

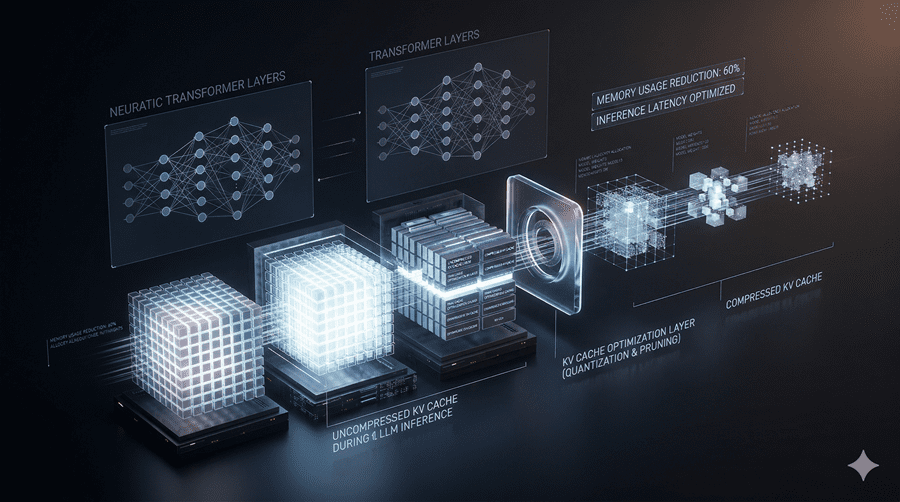

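At the core of this breakthrough is something called the KV (key-value) cache. I think of it as the short-term memory of an AI system. As a model reads a conversation, document, or piece of code, it stores dense numerical representations of every token here, a key vector and a value vector each, so it doesn't have to recompute them every time it generates the next word.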

The problem is simple. These numbers take up a lot of space, and the cache keeps growing with every token the model has to remember.
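To put a rough number on it: in a standard transformer, the cache holds one key vector and one value vector per token, for every layer and every key-value head. Assuming 16-bit values (2 bytes each) and a hypothetical model with 32 layers, 8 KV heads, and a head dimension of 128, the arithmetic looks like this:

$$
\text{KV bytes} = 2 \times n_{\text{layers}} \times n_{\text{kv heads}} \times d_{\text{head}} \times n_{\text{tokens}} \times b
$$

$$
\frac{\text{KV bytes}}{n_{\text{tokens}}} = 2 \times 32 \times 8 \times 128 \times 2\ \text{B} = 131{,}072\ \text{B} = 128\ \text{KiB}
$$

Here $b$ is the number of bytes per value. At about 128 KiB per token, a 131,072-token context (the familiar "128K") needs around 16 GiB for the cache alone, before counting the model's own weights.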

The solution sounds deceptively basic: store those numbers with less precision, using fewer bits per value. But doing that naively destroys information. The system starts to lose context and produces worse outputs.

The clever twist is this. Before the precision is reduced, the data is rotated in a way that spreads its information evenly across all the numbers, so no single large value dominates. Instead of losing critical details, the system loses a tiny bit everywhere. The result is far more stable.
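To make the rotation trick concrete, here is a minimal sketch built on my own assumptions: a 128-dimensional vector with one hypothetical outlier, a random orthogonal rotation built from a QR decomposition, and simple 4-bit quantization with one scale per vector. It is not the method's actual rotation or quantizer, just the general idea. With one big value setting the scale, naive quantization rounds most of the small values to zero; rotating first spreads the outlier's energy across every coordinate.

```python
import numpy as np

def quantize_dequantize(x, bits=4):
    """Symmetric uniform quantization with one scale per vector (absmax), then back to floats."""
    levels = 2 ** (bits - 1) - 1          # 7 for 4 bits: integers in [-7, 7]
    scale = np.max(np.abs(x)) / levels    # the largest value sets the step size
    if scale == 0:
        return x.copy()
    q = np.clip(np.round(x / scale), -levels, levels)
    return q * scale

def random_rotation(dim, rng):
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    a = rng.standard_normal((dim, dim))
    q, r = np.linalg.qr(a)
    # Flip column signs so the result is uniformly distributed over rotations
    q *= np.sign(np.diag(r))
    return q

rng = np.random.default_rng(0)
dim = 128
x = rng.standard_normal(dim)
x[3] = 40.0                               # hypothetical outlier that dominates the scale

# Naive: quantize the raw vector directly
naive = quantize_dequantize(x)
naive_err = np.linalg.norm(x - naive) / np.linalg.norm(x)

# Rotate, quantize, then undo the rotation with the transpose
R = random_rotation(dim, rng)
restored = R.T @ quantize_dequantize(R @ x)
rot_err = np.linalg.norm(x - restored) / np.linalg.norm(x)

print(f"relative error, naive:   {naive_err:.3f}")
print(f"relative error, rotated: {rot_err:.3f}")
```

Because the rotation is orthogonal, its transpose undoes it exactly, so the only error left is rounding, and that rounding is now spread thinly across every coordinate instead of wiping out all the small values.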

Then comes another layer. A mathematical technique compresses the data while preserving the relationships between points, roughly the distances and similarities between vectors that the model relies on. So even after shrinking, the structure still holds.
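The article doesn't name the technique, but a classic example of compressing data while preserving distances is a Johnson-Lindenstrauss random projection. The sketch below assumes that family of method and is not the method's actual implementation: it multiplies 1,024-dimensional points by a random Gaussian matrix to get 256 dimensions (four times fewer numbers) and checks how much the pairwise distances change. The sizes and seed are arbitrary choices.

```python
import numpy as np

def jl_project(points, target_dim, rng):
    """Gaussian random projection, scaled so squared lengths are preserved in expectation."""
    d = points.shape[1]
    proj = rng.standard_normal((d, target_dim)) / np.sqrt(target_dim)
    return points @ proj

def pairwise_distances(points):
    """Euclidean distance between every pair of rows."""
    diff = points[:, None, :] - points[None, :, :]
    return np.linalg.norm(diff, axis=-1)

rng = np.random.default_rng(1)
n, d, k = 50, 1024, 256                   # 50 points, 1024 dims compressed to 256
points = rng.standard_normal((n, d))
projected = jl_project(points, k, rng)

before = pairwise_distances(points)
after = pairwise_distances(projected)
mask = ~np.eye(n, dtype=bool)             # ignore each point's zero distance to itself
ratios = after[mask] / before[mask]

print(f"distance ratio after projection: min {ratios.min():.3f}, max {ratios.max():.3f}")
```

The distance ratios cluster tightly around 1, which is the sense in which the structure still holds. Shrinking the target dimension further widens that cluster, so there is a trade-off between compression and fidelity.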

None of these ideas is new. But together, they work surprisingly well.

Does It Actually Work in Practice?

I didn’t trust the initial claims. So I waited.

When independent researchers started testing it, the results became clearer. Memory usage dropped by around 30 to 40 percent in realistic scenarios. That alone is impressive.

But what really caught my attention was something unexpected. The system also became faster. In some cases, prompt processing sped up by roughly 40 percent.

That’s unusual. Normally, you trade speed for memory savings. Here, both improved.

These aren’t the extreme numbers you might see in headlines. Those come from ideal conditions. But even the real-world gains are significant.

Where This Makes the Biggest Difference

This method shines when dealing with large inputs. Think massive documents, long conversations, or complex codebases.

In those cases, memory demands grow quickly. Cutting even a few gigabytes can make the difference between running a model locally and not running it at all.
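Here is a quick back-of-the-envelope sketch of that growth. It assumes a hypothetical model (32 layers, 8 key-value heads, head dimension 128, 16-bit values), counts only the KV cache, and applies the 30 to 40 percent savings from the independent tests uniformly, which is a simplification.

```python
GIB = 2 ** 30

def kv_cache_bytes(n_layers, n_kv_heads, head_dim, n_tokens, bytes_per_value=2.0):
    """Approximate KV cache size: one key and one value vector per token, per layer, per KV head."""
    return 2 * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_value

# Hypothetical model configuration, for illustration only
CONFIG = dict(n_layers=32, n_kv_heads=8, head_dim=128)

for n_tokens in (8_192, 32_768, 131_072):
    full = kv_cache_bytes(n_tokens=n_tokens, **CONFIG) / GIB
    # Apply the 30-40 percent savings range reported in independent tests
    after_30 = full * 0.70
    after_40 = full * 0.60
    print(f"{n_tokens:>7} tokens: {full:5.1f} GiB -> {after_40:.1f}-{after_30:.1f} GiB")
```

The cache grows linearly with context length, so the absolute savings grow with it. Under these assumptions, a 131,072-token context frees between about 4.8 and 6.4 GiB, the kind of "few gigabytes" that can decide whether a model fits on a local GPU.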

For me, that’s the real story. It lowers the barrier to entry.

Why Not Everyone Is Celebrating

As exciting as this is, there’s pushback.

Some researchers argue that parts of this approach resemble earlier work and deserve more acknowledgment. Others feel the discussion around prior methods could have been deeper.

The technique has been accepted academically, but not without debate. And honestly, that’s a good thing. It means the field is still questioning itself.

What I find most interesting is this. The breakthrough didn’t come from inventing something entirely new. It came from combining well-known ideas more cleverly.

That’s a reminder I keep coming back to. Progress doesn’t always mean reinventing everything. Sometimes it means finally putting the pieces together.
