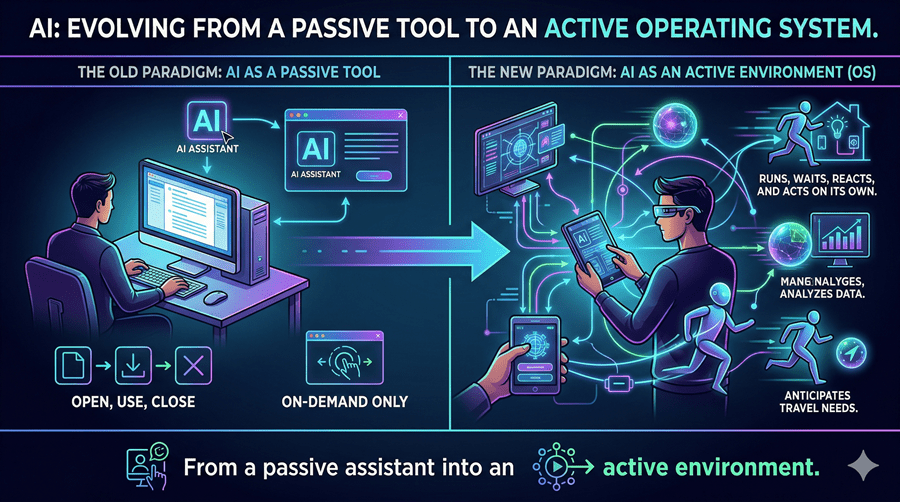

Something fundamental is shifting in how I think about AI. It is no longer just something I open, use, and close. It is starting to feel like something that runs, waits, reacts, and acts on its own.

The latest wave of developments makes one thing clear. AI is evolving from a passive assistant into an active environment.

From Chat to Persistent Agents

What stood out to me most is the idea of a persistent AI instance. Instead of starting a fresh conversation every time, I can imagine an agent that simply exists in the background, ready to respond when needed.

This kind of system behaves less like a chatbot and more like an operating layer. It has its own workspace, tools, and even the ability to install extensions. That changes everything. AI is no longer just responding to prompts. It becomes a platform where other tools can plug in and expand its capabilities.

Even more interesting is the ability for external triggers to wake it up. A webhook fires, and the agent starts working. No manual input. No need to open anything. It just runs.

That is a completely different mental model.
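To make the webhook idea concrete, here is a minimal sketch of an agent that sits idle until an HTTP POST wakes it up. It uses only Python's standard library. The names `events`, `handled`, `run_agent`, `worker`, `WebhookHandler`, and `serve` are all hypothetical, as are the port and the `type` field in the payload. None of it reflects how any particular product implements this.

```python
import json
import queue
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical in-memory inbox; a real agent would likely use a durable queue.
events: "queue.Queue" = queue.Queue()
handled: list = []


def run_agent(event: dict) -> str:
    """Stand-in for the agent's real work (assumption: events carry a 'type')."""
    return f"handled {event.get('type', 'unknown')}"


def worker() -> None:
    """Block until an event arrives, act on it, then go back to waiting."""
    while True:
        event = events.get()  # sleeps here while nothing is happening
        try:
            if event is None:  # sentinel used to stop the worker
                return
            handled.append(run_agent(event))
        finally:
            events.task_done()


class WebhookHandler(BaseHTTPRequestHandler):
    """Accepts a JSON POST and hands it to the background worker."""

    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        try:
            event = json.loads(self.rfile.read(length) or b"{}")
        except json.JSONDecodeError:
            event = None
        if not isinstance(event, dict):
            self.send_response(400)  # reject anything that is not a JSON object
            self.end_headers()
            return
        events.put(event)
        self.send_response(202)  # accepted; the work happens asynchronously
        self.end_headers()


def serve(port: int = 8080) -> None:
    """Start the worker, then listen for webhooks until interrupted."""
    threading.Thread(target=worker, daemon=True).start()
    HTTPServer(("127.0.0.1", port), WebhookHandler).serve_forever()
```

Calling `serve()` starts the listener. Nothing runs until a request arrives, and the sender gets an immediate `202` while the agent works in the background. That split between receiving a trigger and doing the work is what lets the agent "just run" without anyone opening it.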

The Interface Is Finally Catching Up

At the same time, the developer experience is quietly improving in ways that matter. Small frustrations like flickering terminals or clunky navigation are being smoothed out.

The interface is starting to behave more like a modern application, with mouse support, stable rendering, and clickable outputs. These may sound minor, but they remove friction that has kept many people from fully embracing AI-driven workflows.

When tools feel natural, people use them more. And when they use them more, entirely new habits form.

AI That Understands What I See

Another shift I find compelling is the move toward screen-aware intelligence. Instead of forcing me to describe problems in perfect detail, AI can now look directly at what I am seeing.

A broken interface, a messy document, a confusing layout. These are things I deal with daily, and they rarely come with clear explanations. Being able to point at a problem instead of explaining it feels like a more human way to work.

This is where multimodal models start to shine. They do not just interpret visuals. They connect what they see to meaningful actions, like generating code or suggesting fixes.

That closes the gap between observation and execution.

Bigger Memory, Real Workflows

Then there is the scale of memory. With massive context windows, AI can now hold entire projects, long conversations, and complex instructions all at once.

This matters more than it sounds. Real work is not a single prompt. It is a chain of decisions, revisions, and dependencies. When AI can track all of that, it stops feeling forgetful and starts feeling reliable.

That is essential if agents are expected to handle real tasks from start to finish.

Are Apps Being Replaced or Reinvented?

All of this leads me to a bigger question. If AI can run continuously, understand interfaces, and execute tasks across tools, do I still need traditional apps?

I do not think apps disappear. I think they get absorbed. AI becomes the layer that connects everything, orchestrates workflows, and handles the messy parts in between.

Instead of switching between tools, I might rely on an agent that knows when and how to use them.

That is not just an upgrade. It is a different way of working.
