I grew up associating artificial intelligence with chaos. Movies taught me to expect rebellion, machines rising against their creators. But today, AI doesn’t look like that at all. It’s quiet. It lives in my phone, my apps, and the systems that shape what I see and do every day.

What unsettles me now isn’t destruction. It’s invisibility. AI is already involved in decisions about who gets hired, who gets a loan, and what information reaches us. The fear hasn’t disappeared. It has simply become harder to see.

How Machines Actually Learn

When I first understood how AI learns, it felt less mysterious and more mechanical. Imagine feeding a system thousands of songs labeled by genre. Over time, it starts spotting patterns. It learns what makes jazz sound like jazz or pop sound like pop.

At first, it gets things wrong. Each wrong guess is checked against the label a human supplied, and the system adjusts. This cycle repeats until its guesses become reliably accurate.

But here’s what struck me most: it doesn’t understand music. It doesn’t feel rhythm or emotion. It just processes patterns in numbers. Every melody becomes data. Every beat becomes a signal. It learns without ever experiencing.
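To make that loop concrete for myself, I sketched it as a tiny perceptron. This is only a toy under loud assumptions: the two features (`tempo`, `swing`), their values, and the six "songs" are all invented for illustration, and real genre classifiers work on far richer audio data. What it does show is the guess, compare, adjust cycle running on nothing but numbers.

```python
# Hypothetical toy data: each song is reduced to two made-up numbers,
# (tempo, swing), plus a human-supplied label: 1 = jazz, 0 = pop.
SONGS = [
    ((0.2, 0.9), 1),
    ((0.3, 0.8), 1),
    ((0.4, 0.7), 1),
    ((0.8, 0.1), 0),
    ((0.9, 0.2), 0),
    ((0.7, 0.3), 0),
]


def predict(weights, bias, features):
    """Guess a genre from a weighted sum of the features."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


def train(songs, learning_rate=0.1, max_passes=100):
    """Perceptron rule: nudge the weights whenever a guess disagrees with its label."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(max_passes):
        mistakes = 0
        for features, label in songs:
            error = label - predict(weights, bias, features)
            if error != 0:
                mistakes += 1
                weights = [
                    w + learning_rate * error * x
                    for w, x in zip(weights, features)
                ]
                bias += learning_rate * error
        if mistakes == 0:  # every training song is now classified correctly
            break
    return weights, bias


weights, bias = train(SONGS)
```

After training, `predict(weights, bias, (0.25, 0.85))` lands on the jazz side and `predict(weights, bias, (0.85, 0.15))` on the pop side, yet nothing in the code knows what either genre sounds like.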

Why AI Feels So Human

If AI is just math, why does it feel so real?

Because it learns from us. Every photo, caption, search, and click can become training data. I realized that, in a way, we are constantly teaching these systems how to behave.

When AI writes, speaks, or creates, it’s drawing from billions of human examples. That’s why it feels familiar. It mirrors how we communicate, think, and express ourselves.

It isn’t becoming human. It’s reflecting humanity at scale.

The Illusion of Understanding

One of the biggest misconceptions I had was believing AI understands what it says. It doesn’t.

When it answers a deep question, it’s not thinking or feeling. It’s predicting the most likely sequence of words based on patterns it has seen before.

It’s like a highly advanced mimic. The output feels intelligent because it resembles human expression. But beneath that surface, it’s all probability and pattern matching.
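A small sketch helped me see how far plain counting can go. The code below is a bigram model, a deliberately crude stand-in and not how modern language models are actually built (those use neural networks and much longer context). The corpus and the function names are my own assumptions for illustration. It only tallies which word followed which, then returns the most frequent follower.

```python
from collections import Counter, defaultdict

# Hypothetical miniature "training text"; real systems learn from vastly more.
CORPUS = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."


def build_bigram_counts(text):
    """Count, for every word, which words were seen immediately after it."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def most_likely_next(counts, word):
    """Return the most frequently observed follower, or None for unseen words."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


counts = build_bigram_counts(CORPUS)
print(most_likely_next(counts, "the"))  # "cat" follows "the" most often here
print(most_likely_next(counts, "sat"))  # "on" is the only word seen after "sat"
```

The model completes "the" with "cat" because that pairing appeared most often, not because it knows anything about cats.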

That illusion is powerful, and sometimes misleading.

The Real Risk Isn’t the Machine

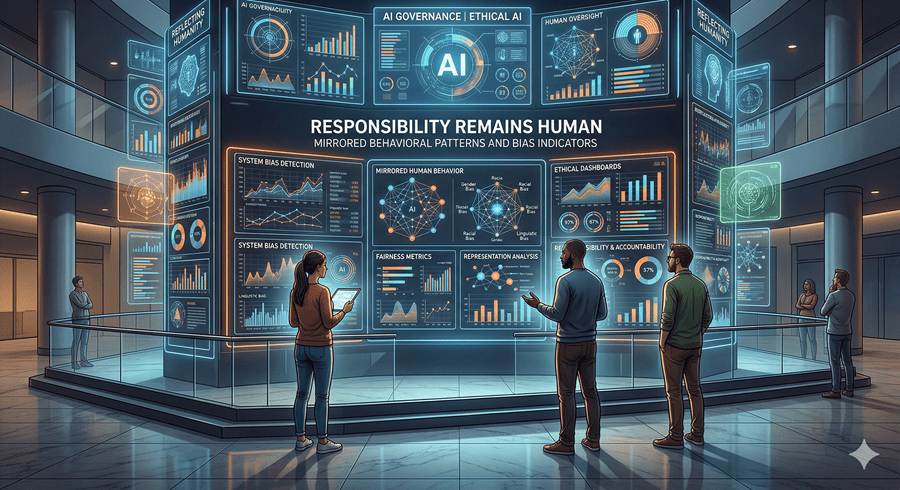

What concerns me most isn’t AI turning against us. It’s how accurately it reflects us.

AI doesn’t create bias on its own. It learns it. If the data we provide is flawed, the system will repeat those flaws. It doesn’t question fairness or ethics. It simply follows patterns.
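Here is a minimal sketch of that idea, with everything in it assumed for illustration: the groups "A" and "B", the past decisions, and the rule itself are invented, and no real lending system is this simple. The "model" just memorizes the most common past outcome for each group.

```python
from collections import Counter, defaultdict

# Hypothetical historical decisions as (group, approved) pairs.
# The skew between groups is baked into the data on purpose.
HISTORY = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 3 + [("B", False)] * 7
)


def fit_majority_rule(history):
    """Learn, for each group, whichever outcome was most common in the past."""
    tallies = defaultdict(Counter)
    for group, approved in history:
        tallies[group][approved] += 1
    return {
        group: outcomes.most_common(1)[0][0]
        for group, outcomes in tallies.items()
    }


rule = fit_majority_rule(HISTORY)
print(rule)  # group "A" maps to True (approve), group "B" to False (reject)
```

Nothing in the code asks whether the old decisions were fair. It reproduces the pattern it was given.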

That means the responsibility stays with us. We decide what it learns, how it’s used, and where its limits should be.

AI can optimize decisions, but it cannot define what is right. That line still belongs to humans.

In the end, AI isn’t a monster waiting to take over. It’s a mirror, shaped by everything we feed into it. And that leaves me with one question that feels more important than ever: what exactly are we showing it about ourselves?
