For years, I believed that intelligence revealed itself through language. If a system could explain ideas, write clearly, and hold a conversation, it felt natural to assume it truly understood. After all, language is how we humans display intelligence to each other. But I’ve started to see the flaw in that thinking. Explanation is not the same as understanding. It only looks that way because they often overlap.

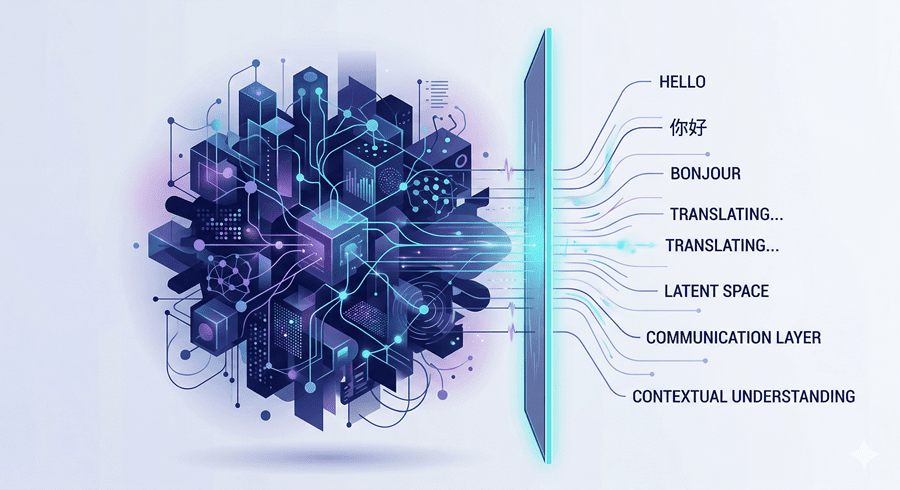

Now, a subtle but important shift is happening. New AI systems are emerging that do not depend on language to think. They do not narrate their reasoning or construct thoughts word by word. Instead, language is becoming just a surface layer, not the core of intelligence.

The Illusion of Fluency

I used to equate clarity with depth. The more articulate a system sounded, the more intelligent I assumed it was. But language models operate in a very specific way. They generate one token (roughly a word or a piece of one) at a time, each chosen based on the text so far, without a finished answer planned in advance. Thinking and speaking are fused together.
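To make that concrete, here is a minimal sketch of step-by-step generation. It is a toy, not how any real model is built: the `NEXT_TOKEN_PROBS` table, its vocabulary, and its probabilities are all invented for illustration, and the `generate` function is a hypothetical helper that always picks the most likely next word.

```python
# Toy next-token table. The vocabulary and probabilities are invented
# for illustration; a real model computes these from the full context.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.7, "a": 0.3},
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.8, "ran": 0.2},
    "sat": {"<end>": 0.9, "down": 0.1},
}


def generate(max_tokens: int = 10) -> list:
    """Build a sentence one token at a time, greedily."""
    tokens = []
    current = "<start>"
    for _ in range(max_tokens):
        choices = NEXT_TOKEN_PROBS.get(current)
        if choices is None:
            # No known continuation for this token, so stop.
            break
        # Commit to the single most likely next token. Nothing here
        # looks ahead to how the sentence will end.
        current = max(choices, key=choices.get)
        if current == "<end>":
            break
        tokens.append(current)
    return tokens


print(" ".join(generate()))
```

Each word is committed before the next one is considered, which is the sense in which thinking and speaking happen in the same step.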

This works well for writing and conversation, but it creates limitations. When intelligence is forced to express itself continuously, it becomes slower and more fragile. Because each word builds on the ones before it, an early mistake carries forward into everything that follows, and the system struggles to maintain a stable internal understanding without constantly restating it.
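A rough back-of-the-envelope calculation shows how quickly small errors can add up. The sketch below assumes every step is correct with the same probability and that steps fail independently of each other. Both are simplifying assumptions of mine, not properties of real systems, and the function name `error_free_probability` is made up for this example.

```python
def error_free_probability(per_step_accuracy: float, steps: int) -> float:
    """Chance that every one of `steps` steps is correct.

    Assumes each step succeeds independently with the same probability,
    which is a simplification made for illustration only.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


# Compare a short output with longer ones at 99% per-step accuracy.
for n in (10, 100, 500):
    print(n, round(error_free_probability(0.99, n), 3))
```

Under these assumptions, even a step that is right 99% of the time leaves only about a one-in-three chance of a flawless 100-step output.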

We didn’t just build tools that use language. We built systems where language became the foundation of thought.

Why the Real World Breaks This Model

The real world does not operate in sentences. It moves continuously, without waiting for descriptions. Actions unfold before they can be labeled, and cause and effect play out in real time.

This creates a mismatch. A system might describe a moment perfectly but fail to understand what is happening over time. It can identify objects yet miss the action entirely. Tasks like driving or planning demand a sense of time and causality, not just accurate descriptions.

As long as intelligence is tied to language, it inherits these limitations.

Thinking Without Words

A different approach flips the process. Instead of predicting words, these systems focus on meaning first. They build internal representations of what is happening without translating everything into text.

I find this idea surprisingly intuitive. Humans do not narrate every thought. We understand first, then explain if needed. These systems follow a similar pattern. Understanding exists silently, and language becomes optional.

This shift makes intelligence faster and more stable. It no longer resets at the end of each sentence.

The Power of Time and Patience

One of the biggest differences appears over time. Language-first systems react moment by moment. They make quick guesses and move on. But meaning-based systems accumulate understanding.

They start with uncertainty, adjust as new information comes in, and gradually settle on a clearer interpretation. This mirrors how I experience the world. I observe, revise, and only commit once things make sense.
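One simple way to picture this "observe, revise, then commit" pattern is a running belief that gets updated with Bayes' rule. This is only a sketch of the general idea, not a description of how any particular system works. The function names, the 0.95 commit threshold, and the likelihood numbers are all assumptions I chose for illustration.

```python
def update_belief(prior: float, likelihood_if_true: float,
                  likelihood_if_false: float) -> float:
    """Apply Bayes' rule for one hypothesis versus its alternative."""
    numerator = prior * likelihood_if_true
    return numerator / (numerator + (1.0 - prior) * likelihood_if_false)


def accumulate(observations, prior: float = 0.5, threshold: float = 0.95):
    """Update belief one observation at a time; commit once confident.

    Each observation is a pair: how likely it is if the hypothesis is
    true, and how likely it is if the hypothesis is false.
    Returns (final_belief, step_committed), with step None if the
    threshold was never reached.
    """
    belief = prior
    for step, (l_true, l_false) in enumerate(observations, start=1):
        belief = update_belief(belief, l_true, l_false)
        if belief >= threshold:
            return belief, step
    return belief, None


# Five weak pieces of evidence, each only mildly favoring the hypothesis.
evidence = [(0.7, 0.3)] * 5
belief, committed_at = accumulate(evidence)
print(round(belief, 3), committed_at)
```

No single observation is convincing on its own, but the belief climbs with each one, and the system only commits once enough evidence has built up.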

Waiting, it turns out, is part of intelligence.

Language Finds Its Proper Role

This shift does not make language models irrelevant. It clarifies their purpose. Language becomes a translator, not the thinker itself. It helps communicate internal understanding rather than create it.

That distinction changes everything. Intelligence no longer needs to speak constantly to exist. It can operate quietly, only using language when necessary.

The most profound realization for me is this: intelligence is not defined by how well it speaks, but by how deeply it understands. And as AI evolves, the systems that matter most may not be the ones that sound impressive, but the ones that no longer need to.
