Artificial intelligence often feels objective. It runs on data, mathematics, and code, which makes many people assume its decisions are neutral. But algorithms are created by humans and trained on human data. That means the patterns they learn can mirror the biases that already exist in society.

This phenomenon is known as algorithmic bias. It is not always introduced intentionally, but it can shape how AI systems behave in ways that affect real people.

Understanding how bias enters AI systems is one of the most important challenges in modern technology.

Bias Versus Discrimination

Bias itself is not always harmful. Humans constantly rely on patterns to make quick decisions. Our brains simplify information so we can navigate a complex world.

Problems begin when those patterns lead to unfair treatment. In many societies, laws exist to prevent discrimination against protected groups based on characteristics such as race, gender, or age. When AI systems replicate harmful patterns in data, they risk reinforcing the same inequalities those laws attempt to prevent.

Recognizing the difference between natural pattern recognition and discriminatory outcomes is essential when building responsible technology.

When Training Data Reflects Society

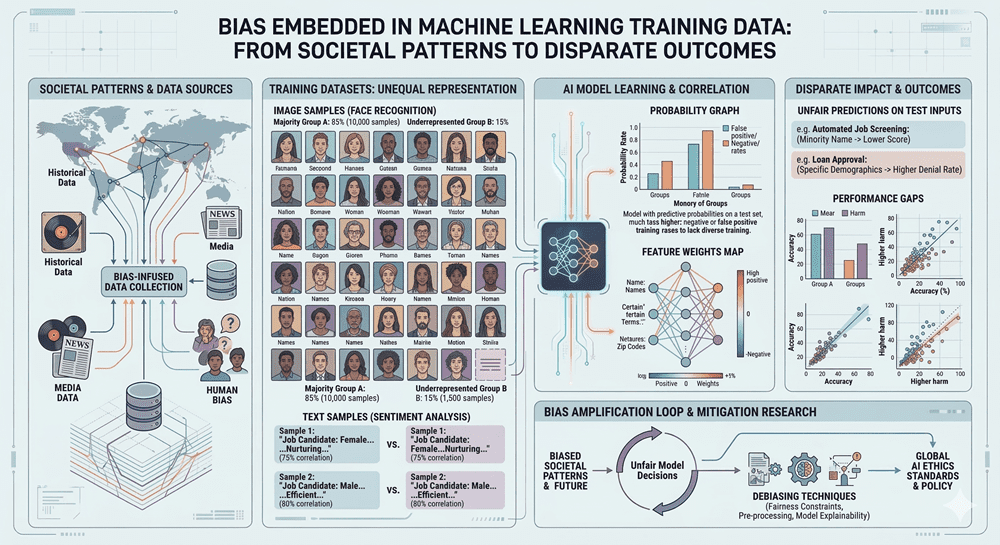

One major source of algorithmic bias is the data used to train AI systems. If the data reflects social stereotypes, those patterns can become embedded in the model.

For example, language or image datasets may associate certain professions with particular genders. A search for nurses might display mostly women, while searches for programmers might show mostly men. These patterns are not a neutral picture of reality. They reflect historical and cultural trends present in the data.

AI systems have no built-in way to tell whether a pattern represents bias or truth. They simply learn and repeat what they observe.
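To make this concrete, here is a minimal sketch of a "model" that does nothing but count which pronoun appears with which profession. The corpus, the `train` and `predict` helpers, and the 3-to-1 skew are all made up for illustration and are not taken from any real dataset or system.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus of (profession, pronoun) pairs.
# The 3-to-1 skew is deliberate and stands in for skewed real-world text.
CORPUS = [
    ("nurse", "she"), ("nurse", "she"), ("nurse", "she"), ("nurse", "he"),
    ("programmer", "he"), ("programmer", "he"), ("programmer", "he"),
    ("programmer", "she"),
]


def train(pairs):
    """Count how often each pronoun co-occurs with each profession."""
    counts = defaultdict(Counter)
    for profession, pronoun in pairs:
        counts[profession][pronoun] += 1
    return counts


def predict(counts, profession):
    """Return the pronoun seen most often with this profession."""
    return counts[profession].most_common(1)[0][0]


model = train(CORPUS)
print(predict(model, "nurse"))       # she
print(predict(model, "programmer"))  # he
```

Notice that a 75 percent tendency in the data turns into a 100 percent answer from the model. Nothing in the counting step can tell a stereotype from a fact.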

The Problem of Missing Representation

Bias can also appear when training datasets lack diversity. If a dataset contains far more examples of one group than another, the system may perform poorly for people who are underrepresented.

This issue has appeared in facial recognition systems, where models trained mostly on lighter skin tones have struggled to accurately identify individuals with darker skin tones. In everyday situations, this can lead to frustrating experiences, such as repeatedly failing identity verification checks.

The root problem is often not malicious design but incomplete data. Yet the consequences still affect people in real ways.
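One way this gap stays hidden is that a single overall accuracy number is dominated by the largest group. The sketch below breaks accuracy down by group. The group labels, the `accuracy_by_group` helper, and every number in `RESULTS` are invented for illustration and do not come from any real benchmark.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return accuracy per group from (group, expected, predicted) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, expected, predicted in records:
        total[group] += 1
        if expected == predicted:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Hypothetical verification results: 100 samples from a well-represented
# group and only 10 from an underrepresented one.
RESULTS = (
    [("lighter", "match", "match")] * 95
    + [("lighter", "match", "no_match")] * 5
    + [("darker", "match", "match")] * 7
    + [("darker", "match", "no_match")] * 3
)

overall = sum(expected == predicted for _, expected, predicted in RESULTS) / len(RESULTS)
per_group = accuracy_by_group(RESULTS)

print(f"overall: {overall:.2f}")
for group, accuracy in per_group.items():
    print(f"{group}: {accuracy:.2f}")
```

In this made-up example the overall figure sits near 93 percent, while the smaller group sees only 70 percent. Reporting results per group is what brings the difference into view.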

Simplifying Complex Human Qualities

Another challenge arises when AI attempts to evaluate complicated human abilities using simplified metrics. Many aspects of human behavior are difficult to measure with numbers.

Consider automated systems designed to grade essays. Good writing involves creativity, reasoning, structure, and clarity. These qualities are difficult to quantify directly, so algorithms often rely on easier indicators such as sentence length, vocabulary, or grammar patterns.

Because of this shortcut, some systems can be fooled by essays that look sophisticated but make little sense. The algorithm measures what it can easily count rather than what truly matters.
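The shortcut is easy to reproduce. The following sketch scores an essay using only average sentence length and average word length. The `surface_score` function and its weighting are assumptions made up for this example, not the method of any real grading product.

```python
import re


def surface_score(essay):
    """Score an essay using only surface features (illustrative weights)."""
    sentences = [s for s in re.split(r"[.!?]+", essay) if s.strip()]
    words = re.findall(r"[A-Za-z']+", essay)
    if not sentences or not words:
        return 0.0
    avg_sentence_len = len(words) / len(sentences)
    avg_word_len = sum(len(w) for w in words) / len(words)
    # Longer sentences and longer words both raise the score.
    return avg_sentence_len + 2 * avg_word_len


clear = (
    "Bias enters through data. Models repeat what they see. "
    "We should check results for each group."
)
nonsense = (
    "Notwithstanding paradigmatic epistemological juxtapositions, "
    "multifarious heterogeneity fundamentally necessitates "
    "quintessential circumlocution."
)

print(surface_score(clear))
print(surface_score(nonsense))
```

The second text says almost nothing, yet it scores higher because it uses one long sentence full of long words. The scorer rewards the proxy, not the reasoning.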

Feedback Loops That Reinforce the Past

AI systems can also create feedback loops. When predictions influence future data, the system may reinforce its original assumptions.

Predictive policing tools provide a well-known example. If an algorithm directs law enforcement to patrol certain neighborhoods more frequently, more arrests may occur there simply because those areas receive more attention. That new arrest data then feeds back into the system, strengthening the prediction that crime happens there.

Over time, the model may amplify patterns that originated from earlier social inequalities.
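A small simulation shows how such a loop can widen a gap. It assumes two areas with exactly the same underlying rate, a slightly uneven arrest history, and a rule that sends most patrols to whichever area has more recorded arrests. The `simulate` function, the 70 percent hotspot share, and the starting counts are all hypothetical and do not describe how any real tool allocates patrols.

```python
def simulate(rounds=10, patrols=100, true_rate=0.1, hotspot_share=0.7):
    """Track area A's share of recorded arrests over several rounds."""
    # Hypothetical starting history: A is only slightly ahead of B.
    recorded = {"A": 11.0, "B": 10.0}
    history = []
    for _ in range(rounds):
        # The "model" flags the area with more recorded arrests.
        hotspot = max(recorded, key=recorded.get)
        for area in recorded:
            share = hotspot_share if area == hotspot else 1 - hotspot_share
            # Same true rate everywhere: arrests scale only with patrols.
            recorded[area] += patrols * share * true_rate
        history.append(recorded["A"] / (recorded["A"] + recorded["B"]))
    return history


shares = simulate()
print(f"start: {11 / 21:.2f}")
for round_number, share in enumerate(shares, start=1):
    print(f"round {round_number}: {share:.2f}")
```

Area A starts with about 52 percent of recorded arrests and drifts toward the 70 percent patrol share, even though both areas behave identically. The data ends up describing where officers were sent rather than where crime occurred.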

Why Awareness Matters

Algorithmic bias does not mean artificial intelligence is useless. AI can be incredibly powerful when designed carefully. But it does mean we should treat algorithmic recommendations critically rather than assuming they are always correct.

Transparency, diverse datasets, and ongoing monitoring are essential steps toward reducing bias in AI systems. Ultimately, humans remain responsible for how these technologies are developed and deployed.
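As one example of what ongoing monitoring might look like, the sketch below compares the rate of positive decisions across groups and flags a batch for human review when the gap is large. The `positive_rates` and `needs_review` helpers, the group names, the sample batch, and the 0.2 threshold are assumptions chosen for illustration, not an established standard.

```python
from collections import defaultdict


def positive_rates(decisions):
    """Return the share of positive decisions per group."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def needs_review(decisions, max_gap=0.2):
    """Flag a batch when group rates differ by more than max_gap (arbitrary)."""
    rates = positive_rates(decisions)
    return max(rates.values()) - min(rates.values()) > max_gap


# Hypothetical batch of (group, approved) decisions.
BATCH = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

print(positive_rates(BATCH))
print(needs_review(BATCH))
```

A flag like this does not prove that a system is unfair, since groups can differ for legitimate reasons. It is a prompt for a person to look more closely.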

Understanding the limitations of algorithms helps ensure that the systems shaping our future support fairness rather than silently reinforcing old biases.