For decades, games like Pong have served as benchmarks for artificial intelligence. The rules are simple: move a paddle, hit a ball, and keep it from passing you.

But recently something unexpected joined the game. Not a human. Not a traditional computer.

A small cluster of living human brain cells learned to play Pong.

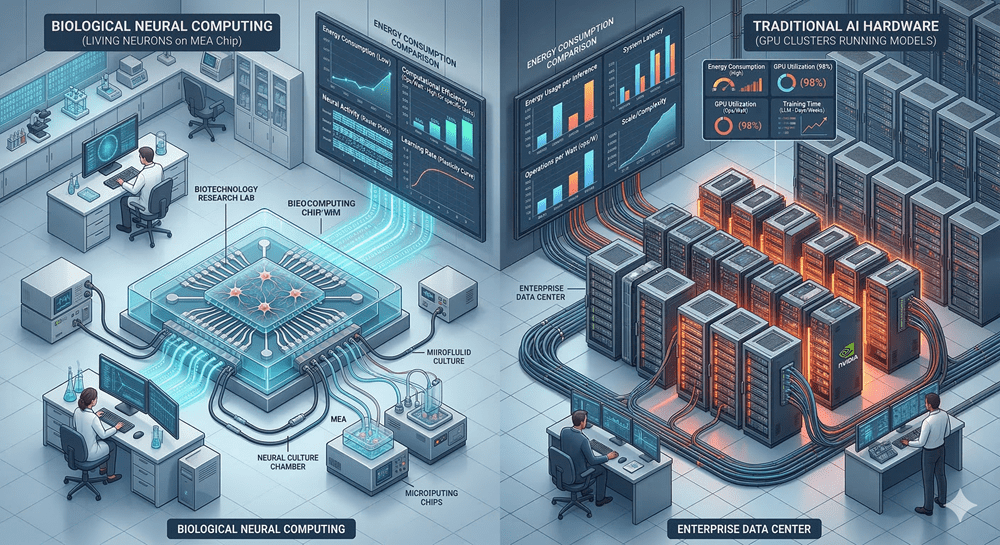

This unusual experiment might sound like science fiction, yet it represents an emerging field known as biocomputing, where living neurons are combined with digital hardware to explore entirely new forms of intelligence.

The Experiment That Changed the Conversation

In a study published in 2022, researchers at Cortical Labs described a system called DishBrain. They grew roughly 800,000 neurons on a silicon chip and connected them to a digital version of the classic arcade game Pong.

Neurons naturally communicate through electrical signals. By sending and receiving those signals through the chip, scientists could translate the game into patterns the cells could sense.

The system had three sections. One sensory region received information about the ball’s position. Two motor regions allowed the neurons to control the paddle, moving it up or down depending on which region fired.
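The mapping described above can be sketched as a pair of small functions. This is a toy illustration, not Cortical Labs' actual code: the function names, the eight-electrode sensory layout, and the use of simple spike counts are all assumptions made for the example.

```python
def encode_ball_position(ball_y: float, n_electrodes: int = 8) -> int:
    """Map a normalized ball height (0.0 to 1.0) to a sensory electrode index.

    The electrode count is a hypothetical value chosen for illustration.
    """
    if not 0.0 <= ball_y <= 1.0:
        raise ValueError("ball_y must be between 0.0 and 1.0")
    # Clamp so that ball_y == 1.0 still lands on the last electrode.
    return min(int(ball_y * n_electrodes), n_electrodes - 1)


def decode_paddle_move(up_spikes: int, down_spikes: int) -> int:
    """Turn activity in two motor regions into a paddle move.

    Returns +1 (up), -1 (down), or 0 (stay) depending on which
    region fired more during the current time window.
    """
    if up_spikes > down_spikes:
        return 1
    if down_spikes > up_spikes:
        return -1
    return 0


# Example: the ball is halfway up the screen, and the "up" region is more active.
electrode = encode_ball_position(0.5)
move = decode_paddle_move(up_spikes=5, down_spikes=2)
print(electrode, move)
```

In a real closed loop, the chosen electrode would be stimulated, the neurons' responses would be recorded, and the cycle would repeat many times per second.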

The question was simple. Could a clump of neurons learn to interact with its environment?

Within minutes, the cells began improving their performance.

Why Brain Cells Learn So Efficiently

Traditional AI models often require enormous datasets and massive computing power. Biological brains operate very differently.

Human brains contain about 86 billion neurons forming more than 100 trillion connections. Despite that complexity, the entire system runs on roughly 20 watts of power, about the same as a small light bulb.

In comparison, large supercomputers used for AI training can consume tens of megawatts.
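To put rough numbers on that comparison, the short sketch below divides an assumed supercomputer power draw by the brain's 20 watts. The 20-megawatt figure is only an illustrative value picked from the "tens of megawatts" range, not a measurement of any specific machine.

```python
BRAIN_WATTS = 20.0           # approximate power use of a human brain
SUPERCOMPUTER_WATTS = 20e6   # assumed illustrative value: 20 megawatts


def power_ratio(machine_watts: float, brain_watts: float = BRAIN_WATTS) -> float:
    """Return how many brains could run on the power one machine consumes."""
    return machine_watts / brain_watts


print(f"{power_ratio(SUPERCOMPUTER_WATTS):,.0f} brains")  # prints "1,000,000 brains"
```

Under that assumption, a single large machine draws as much power as about a million human brains.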

That energy gap is one reason researchers are fascinated by biological computing. Living neurons evolved to learn from limited information and adapt quickly to changing environments.

Some scientists believe combining biology with computing could dramatically reduce the energy required for AI.

The Limits of Silicon Computing

Modern computing relies on silicon chips packed with billions of transistors. For decades, progress followed Moore’s Law, the observation that the number of transistors on a chip roughly doubles every two years.
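As a rough illustration, the doubling rule can be written as a one-line function. The function name and the starting count of one billion transistors are hypothetical, chosen only to show how quickly repeated doubling compounds.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count forward, assuming it doubles every period."""
    return initial_count * 2 ** (years / doubling_period_years)


# Hypothetical chip with one billion transistors, projected ten years ahead.
print(f"{projected_transistors(1e9, 10):.1e}")  # prints "3.2e+10"
```

Ten years means five doublings, so the count grows 32-fold.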

This constant miniaturization fueled faster computers and the growth of modern technology.

But physical limits are approaching. Some chip components are now only a few atoms thick. At that scale, shrinking further becomes extremely difficult.

As demand for computing power continues to grow, researchers are exploring alternatives, including quantum computing, neuromorphic chips, and now biological systems.

Biocomputing is the newest of these alternatives.

A New Industry Built on Living Cells

The idea of using neurons for computation is already moving beyond laboratory experiments.

Companies like FinalSpark are developing platforms that allow researchers to interact with brain organoids, tiny clusters of neurons grown from stem cells. Their system lets scientists connect remotely to living neural networks and run experiments through a cloud interface.

Cortical Labs, the company behind DishBrain, has even built a prototype processor that contains living neurons inside a specialized environment that feeds the cells and removes waste.

These early systems are expensive and difficult to scale, but they offer a glimpse of a future where computing hardware may include biological components.

The Ethical Questions of Living Machines

Biocomputing also raises profound ethical questions.

Brain organoids are not full brains, and scientists generally believe they are far from anything resembling consciousness. Yet even a remote possibility of awareness forces researchers to consider where the boundaries should lie.

If biological computing systems become more complex, questions about awareness, suffering, and responsibility may emerge.

For now, the field remains in its earliest stage. Scientists are still exploring what living neurons can actually do inside a computational system.

But one idea is already clear.

The future of computing might not be entirely artificial.

It could be partly alive.