Artificial intelligence is evolving quickly, but one of its biggest weaknesses has always been surprisingly simple. AI systems are excellent at mimicking patterns, yet they struggle to update their beliefs when new information appears.

Humans do this constantly. We adjust our assumptions based on fresh evidence without even thinking about it. But for large language models, that process is far more difficult.

Recently, researchers experimented with a new way of teaching AI how to adapt its thinking. The results suggest we may be entering a new phase of AI development.

Teaching AI to Update Its Beliefs

The challenge researchers explored falls under probabilistic reasoning. In simple terms, it means holding beliefs as probabilities rather than certainties and adjusting them as new information arrives.

Imagine an AI travel assistant recommending flights. At first, it does not know what you value most. Do you care about price, travel time, or the number of stops? As you choose options, the assistant should gradually learn your preferences.

Humans naturally refine their understanding in situations like this. But when researchers tested modern AI models, they noticed something odd. The models improved slightly after the first interaction and then mostly stopped learning.

Instead of continuously updating their beliefs, they hit what researchers described as a learning plateau.

Borrowing an Idea from Mathematics

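To understand what proper learning should look like, researchers compared these models to a simpler system built on Bayesian reasoning. This approach updates probabilities after every interaction using Bayes' rule: the new probability of each hypothesis is proportional to its previous probability multiplied by how well that hypothesis explains the latest observation.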

Unlike language models, the Bayesian system consistently improved with each new signal. Every choice made by the user helped it refine its predictions.
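To make the update rule concrete, here is a minimal sketch of a Bayesian flight assistant. Everything in it is an assumption made for illustration, not the researchers' actual setup: the three candidate preferences, the 80 percent noise model in `P_BEST`, and the made-up interaction log in `rounds`.

```python
# Hypothetical setup: three candidate explanations of what the user values.
PREFERENCES = ("price", "duration", "stops")

# Assumed noise model: the user picks the best option on their preferred
# feature 80% of the time and otherwise picks uniformly among the rest.
P_BEST = 0.8


def likelihood(preference, options, chosen):
    """P(user picks options[chosen] | user mainly values `preference`)."""
    if len(options) == 1:
        return 1.0
    # Lower is better for all three features (cheaper, shorter, fewer stops).
    best = min(range(len(options)), key=lambda i: options[i][preference])
    if chosen == best:
        return P_BEST
    return (1.0 - P_BEST) / (len(options) - 1)


def bayes_update(belief, options, chosen):
    """One step of Bayes' rule: posterior is proportional to prior * likelihood."""
    unnormalized = {p: belief[p] * likelihood(p, options, chosen) for p in belief}
    total = sum(unnormalized.values())
    return {p: v / total for p, v in unnormalized.items()}


# Made-up interaction log: the flights shown in each round and the index chosen.
rounds = [
    ([{"price": 200, "duration": 9, "stops": 2},
      {"price": 350, "duration": 5, "stops": 0}], 0),
    ([{"price": 300, "duration": 4, "stops": 1},
      {"price": 250, "duration": 7, "stops": 0},
      {"price": 400, "duration": 3, "stops": 2}], 1),
]

# Start with no idea what the user values, then update after every choice.
belief = {p: 1 / len(PREFERENCES) for p in PREFERENCES}
trajectory = [belief]
for options, chosen in rounds:
    belief = bayes_update(belief, options, chosen)
    trajectory.append(belief)

for step, b in enumerate(trajectory):
    print(step, {p: round(v, 3) for p, v in b.items()})
```

In this invented log the user picks the cheapest flight both times, and the belief in "price" rises after each choice. That steady, per-signal improvement is the behavior the language models failed to show.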

Researchers then tried something clever. Instead of training AI only on final correct answers, they trained it to imitate the reasoning process used by the Bayesian system.

This method, sometimes called Bayesian teaching, allowed the models to observe how a rational system updates beliefs step by step under uncertainty.
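One way to picture Bayesian teaching is as a change in what the training targets are. The sketch below is an assumption about how such data could be built, not the published pipeline. It records at each turn what a small Bayesian assistant would recommend given its current belief, and uses that as the target instead of the user's eventual choice. The helper names, the 80 percent noise model, and the example format are all hypothetical.

```python
PREFERENCES = ("price", "duration", "stops")
P_BEST = 0.8  # assumed chance the user picks the best option on their preferred feature


def likelihood(preference, options, chosen):
    """P(user picks options[chosen] | user mainly values `preference`)."""
    if len(options) == 1:
        return 1.0
    best = min(range(len(options)), key=lambda i: options[i][preference])
    return P_BEST if chosen == best else (1.0 - P_BEST) / (len(options) - 1)


def bayes_update(belief, options, chosen):
    """Posterior is proportional to prior * likelihood."""
    unnormalized = {p: belief[p] * likelihood(p, options, chosen) for p in belief}
    total = sum(unnormalized.values())
    return {p: v / total for p, v in unnormalized.items()}


def bayesian_recommendation(belief, options):
    """Index of the option the user is most likely to pick under the current belief."""
    scores = [
        sum(belief[p] * likelihood(p, options, i) for p in belief)
        for i in range(len(options))
    ]
    return max(range(len(options)), key=scores.__getitem__)


def make_teaching_examples(rounds):
    """Turn an interaction log into (history, options, target) training examples.

    The target is the Bayesian assistant's recommendation at that turn,
    not the ground-truth answer revealed afterwards.
    """
    belief = {p: 1 / len(PREFERENCES) for p in PREFERENCES}
    history, examples = [], []
    for options, user_choice in rounds:
        examples.append({
            "history": list(history),
            "options": options,
            "target": bayesian_recommendation(belief, options),
        })
        belief = bayes_update(belief, options, user_choice)
        history.append((options, user_choice))
    return examples


# Made-up log: the user picks the cheapest flight both times.
rounds = [
    ([{"price": 200, "duration": 9, "stops": 2},
      {"price": 350, "duration": 5, "stops": 0}], 0),
    ([{"price": 300, "duration": 4, "stops": 1},
      {"price": 250, "duration": 7, "stops": 0},
      {"price": 400, "duration": 3, "stops": 2}], 1),
]
examples = make_teaching_examples(rounds)
for ex in examples:
    print(len(ex["history"]), "prior turns -> target option", ex["target"])
```

The first target here is a reasonable guess made with no information, and it turns out to differ from what the user actually picks. Training on such targets exposes the model to uncertain early guesses that get revised, rather than only to final correct answers.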

The results were striking. After training, the models followed the optimal reasoning strategy roughly 80 percent of the time and demonstrated genuine belief updating during conversations.

Running Powerful AI on Your Phone

While improving reasoning is one part of the puzzle, another challenge is where AI actually runs.

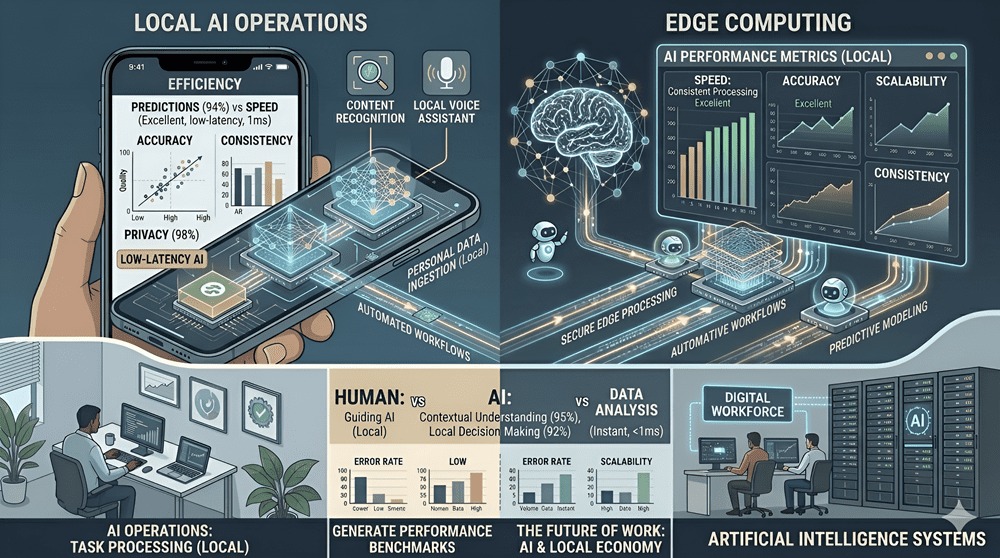

Traditionally, most AI processing happens in large data centers filled with powerful hardware. But new tools are making it possible to run more advanced models directly on personal devices.

Recent software improvements focus on efficiency. By compressing model data and optimizing how calculations run on mobile chips, developers can deploy sophisticated AI features without relying entirely on cloud servers.

This shift matters because it reduces latency, improves privacy, and lowers infrastructure costs. Instead of sending every request to remote servers, many tasks can be handled locally on phones or laptops.
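The article does not say which compression technique is used. One common option is quantization, which stores model weights as small integers plus a shared scale factor. The sketch below shows symmetric 8-bit quantization in plain Python. The function names and sample weights are illustrative, and real toolchains operate on tensors with more elaborate schemes.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Illustrative weights; a real model has millions or billions of them.
weights = [0.5, -1.27, 0.03, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Each value now fits in one byte instead of four (float32), at the cost of
# a rounding error of at most half a quantization step per weight.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, scale, max_error)
```

The trade-off is a roughly fourfold reduction in storage against a small, bounded rounding error per weight, which is why this kind of compression suits memory-constrained phones and laptops.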

The Rise of Autonomous AI Agents

At the same time, developers are experimenting with a different idea altogether. Rather than building assistants that simply suggest actions, new systems are designed to complete entire projects on their own.

These frameworks coordinate multiple specialized agents working together. One agent might gather research, another might analyze data, and a third might generate visuals or presentations.
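The coordination pattern can be sketched as a simple pipeline in which each agent reads what earlier agents produced and adds its own output. This is a toy illustration, not any particular framework's API. The `Project` class, the three agent functions, and the hard-coded data are hypothetical stand-ins for model-driven agents.

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    """Shared workspace that agents read from and write to."""
    goal: str
    artifacts: dict = field(default_factory=dict)
    log: list = field(default_factory=list)


def research_agent(project):
    # Stand-in for a real search or retrieval step.
    project.artifacts["notes"] = [("Q1", 120), ("Q2", 150), ("Q3", 90)]


def analysis_agent(project):
    # Consumes the research notes and produces summary statistics.
    values = [value for _, value in project.artifacts["notes"]]
    project.artifacts["summary"] = {
        "mean": sum(values) / len(values),
        "max": max(values),
    }


def presentation_agent(project):
    # Turns the analysis into a human-readable deliverable.
    summary = project.artifacts["summary"]
    project.artifacts["slide"] = (
        f"{project.goal}: mean={summary['mean']:.1f}, max={summary['max']}"
    )


PIPELINE = [
    ("research", research_agent),
    ("analysis", analysis_agent),
    ("presentation", presentation_agent),
]


def run_project(goal):
    """Coordinator: run each specialized agent in order on a shared project."""
    project = Project(goal)
    for name, agent in PIPELINE:
        agent(project)
        project.log.append(name)
    return project


project = run_project("Quarterly sales")
print(project.log)
print(project.artifacts["slide"])
```

A fixed sequence keeps the example short. Real systems typically let a coordinating model decide which agent to call next and retry steps that fail.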

Instead of just writing instructions or code, the system can execute tasks directly within its own isolated computing environment. That means running scripts, generating files, and assembling final outputs automatically.
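As a rough sketch of direct execution, the snippet below writes a generated script into a fresh temporary directory, runs it there with a timeout, and collects whatever files it produced. The `run_in_workspace` helper is hypothetical. A temporary directory with a timeout is only an approximation of isolation, and production systems would rely on containers or virtual machines.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_in_workspace(script_source, timeout_seconds=10):
    """Run a script in a throwaway directory and return its exit code, stdout, and output files."""
    with tempfile.TemporaryDirectory() as workdir:
        script_path = Path(workdir) / "task.py"
        script_path.write_text(script_source)
        result = subprocess.run(
            [sys.executable, str(script_path)],
            cwd=workdir,              # relative paths resolve inside the workspace
            capture_output=True,
            text=True,
            timeout=timeout_seconds,  # stop runaway scripts
        )
        # Collect generated files before the directory is deleted.
        files = {
            p.name: p.read_text()
            for p in Path(workdir).iterdir()
            if p.is_file() and p.name != "task.py"
        }
    return result.returncode, result.stdout, files


# Stand-in for code an agent might generate.
generated_script = (
    "from pathlib import Path\n"
    "Path('report.txt').write_text('total=' + str(sum([1, 2, 3])))\n"
    "print('done')\n"
)
code, stdout, files = run_in_workspace(generated_script)
print(code, stdout.strip(), files)
```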

Persistent memory also allows these agents to remember user preferences and project history, turning them into long-term collaborators rather than one-time chatbots.

AI Workers Inside Companies

The next step may involve deploying these agents inside organizations.

Some companies are developing platforms that allow businesses to assign tasks to AI systems that operate similarly to digital employees. These agents could handle research, automate workflows, and support internal operations.

Security and reliability are major concerns, especially after early experiments revealed how easily autonomous systems can make costly mistakes. New enterprise-focused platforms aim to address these risks with stronger safeguards.

Together, these developments suggest something important. The future of AI may not arrive through a single dramatic breakthrough.

Instead, it is more likely to emerge from many smaller advances that gradually make machines better at reasoning, more efficient to run, and capable of handling real work.