I used to think of artificial intelligence as something purely digital. A question goes in, an answer comes out, and everything happens somewhere inside the cloud. It felt almost weightless.

But the truth is far more physical.

Every time I send a prompt to an AI system, real-world resources are being used. Electricity flows through enormous data centers. Powerful chips run billions of calculations. And surprisingly, a significant amount of water is involved, too.

The Drop Behind Every Question

According to some estimates, a single interaction with an AI chatbot might consume roughly a small drop's worth of water. That sounds trivial at first.

Ask a math question. One drop.

Request help writing an email. Another drop.

Search for a recipe tweak or a quick explanation of something simple. Another drop.

The scale changes when you remember how often these systems are used. With hundreds of millions of people interacting with AI every day, those drops quickly turn into something much larger.

Even small resource costs multiply when the number of interactions reaches billions.
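To make that multiplication concrete, here is a minimal back-of-the-envelope sketch. Both inputs are my own illustrative assumptions, not measurements: I treat a drop as 1/20 of a milliliter (a common rule of thumb), and I pick one billion prompts per day as a round number. The names `DROPS_PER_ML`, `PROMPTS_PER_DAY`, and `daily_water_liters` are hypothetical.

```python
# Back-of-the-envelope scaling: a tiny per-prompt cost times a huge prompt count.
# Both inputs below are illustrative assumptions, not measured values.

DROPS_PER_ML = 20                 # assumed: about 20 drops per milliliter
PROMPTS_PER_DAY = 1_000_000_000   # assumed: one billion prompts per day


def daily_water_liters(prompts_per_day: int, drops_per_prompt: float = 1.0) -> float:
    """Return total daily water use in liters, given drops used per prompt."""
    milliliters = prompts_per_day * drops_per_prompt / DROPS_PER_ML
    return milliliters / 1000.0   # 1000 mL per liter


liters = daily_water_liters(PROMPTS_PER_DAY)
print(f"{liters:,.0f} liters per day")
```

Under these assumptions, one drop per prompt adds up to about 50,000 liters a day. The exact figure matters less than the shape of the calculation: the total scales linearly with the number of prompts, so changing either assumption shifts the result proportionally.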

Why AI Needs Water at All

The connection between artificial intelligence and water might seem strange at first. The reason lies inside the infrastructure powering modern AI.

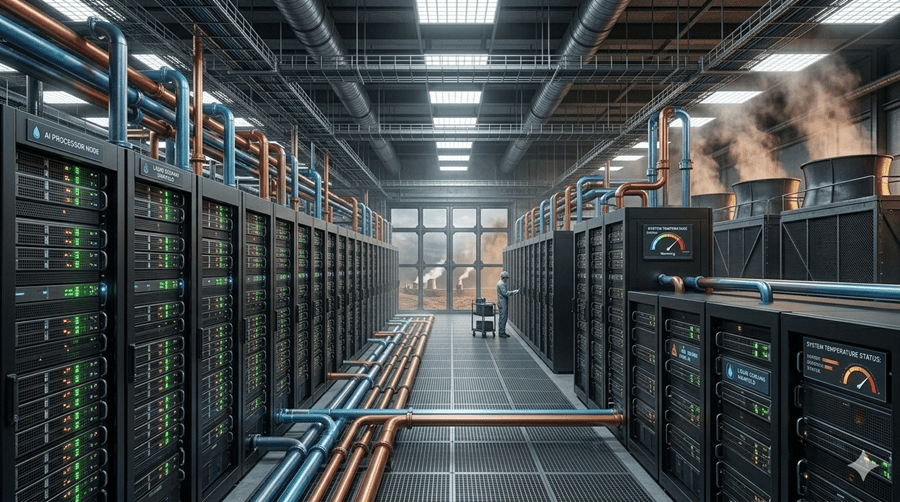

When I send a prompt, the request travels to massive data centers filled with specialized chips designed for machine learning. These processors handle intense computational workloads and generate a tremendous amount of heat.

If that heat is not managed properly, the hardware could be damaged.

Cooling systems are therefore essential. While traditional data centers relied mostly on air cooling, modern AI infrastructure increasingly uses liquid cooling systems that rely on water.

Water helps carry heat away from the processors and prevents them from overheating.

How Cooling Systems Work

Inside many facilities, a liquid coolant flows across the servers and absorbs heat from the chips. That heated liquid then moves to a heat exchange system where water is used to reduce its temperature.

The water itself eventually travels to cooling towers. There, heat is released into the atmosphere through evaporation and airflow.

A large portion of that water evaporates during the process, so it cannot be recirculated and has to be replaced with fresh water. In some cases, most of the water involved in cooling eventually leaves the facility as vapor.
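A rough physics sketch shows why evaporation adds up. It assumes an idealized case where all of the heat leaves by evaporating water, and it uses an approximate latent heat of vaporization of about 2.45 MJ per kilogram near ambient temperature. Real cooling towers also shed some heat as warmer air and lose extra water in other ways, so this is an estimate of scale, not a description of any particular facility. The function name `liters_evaporated` is hypothetical.

```python
# Idealized estimate: how much water must evaporate to carry away a given
# amount of heat? Assumes ALL heat is rejected through evaporation.

LATENT_HEAT_J_PER_KG = 2.45e6   # approximate latent heat of vaporization of water
JOULES_PER_KWH = 3.6e6          # 1 kWh = 3.6 MJ (exact by definition)


def liters_evaporated(heat_kwh: float) -> float:
    """Liters of water evaporated to reject `heat_kwh` of heat (1 kg ~ 1 liter)."""
    return heat_kwh * JOULES_PER_KWH / LATENT_HEAT_J_PER_KG


print(f"{liters_evaporated(1.0):.2f} liters per kWh of heat")
```

In this idealized case, each kilowatt-hour of heat takes roughly a liter and a half of water with it into the air.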

This is why the digital world has a surprisingly physical footprint.

The Ripple Effect Beyond Data Centers

Cooling systems are only one part of the equation.

Generating the electricity that powers AI infrastructure often requires additional water, especially when power plants rely on steam-driven turbines. Manufacturing the chips used in AI hardware also requires large amounts of purified water during semiconductor production.

In other words, water appears at nearly every stage of the AI supply chain.

From chip fabrication to electricity generation to data center cooling, the technology depends heavily on resources that come directly from the natural world.

A Young Technology With Big Questions

Artificial intelligence is still a relatively new industry. Its capabilities have grown at an astonishing pace, but its environmental footprint is only beginning to receive serious attention.

Technology companies are already experimenting with ways to reduce water consumption. Some are testing cooling systems that minimize evaporation, while others are exploring new locations for data centers where cooling can be more efficient.

There is also interest in reusing the heat produced by data centers to warm nearby buildings.

The AI revolution is moving fast, and society is still learning what it truly costs to run it. Understanding those costs may become just as important as celebrating the technology itself.