For years, I assumed improving artificial intelligence was mostly about clever algorithms. Smarter architecture, better training tricks, maybe a new mathematical breakthrough. But the deeper I looked into modern AI systems, the more surprising the truth became. Much of their progress follows something simpler and far more mysterious: scaling laws.

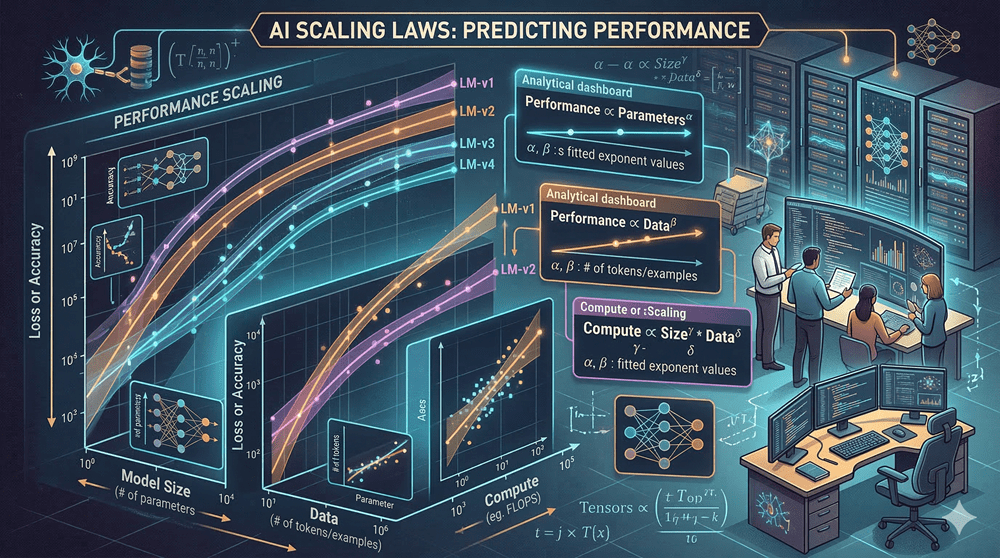

These laws suggest that as we increase three things (data, compute, and model size), performance improves in a remarkably predictable way. The strange part is that this pattern shows up across many different kinds of neural networks.

Yet one question keeps bothering me. Why does this relationship exist at all?

The Boundary AI Models Cannot Cross

When training large neural networks, the error rate drops quickly at first. As training continues, improvement slows and eventually levels off. A larger model can push the error lower, but only by spending more computational power.

When researchers plotted these results on log-log axes, something fascinating appeared. Different experiments formed a family of curves that approached a boundary. No model seemed able to cross this line. It became known as the compute-efficient frontier.

In simple terms, it marks the lowest error a model can achieve for a given amount of computation.
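One way to picture the frontier is as the lower envelope of the individual training curves. The sketch below is a toy illustration, not a fit to any real experiment: it assumes a loss of the form `E + A / N**ALPHA + B / D**BETA` (a commonly used parametric shape), the rule of thumb that compute is roughly `6 * N * D`, and constants picked purely for illustration.

```python
import numpy as np

# Illustrative constants (assumptions, not measured values).
# N = parameter count, D = training tokens.
E, A, B = 1.7, 400.0, 410.0
ALPHA, BETA = 0.34, 0.28

def loss(n_params, compute):
    """Toy loss of a model with n_params parameters after spending `compute` FLOPs."""
    tokens = compute / (6.0 * n_params)   # rule of thumb: compute ~ 6 * N * D
    return E + A / n_params ** ALPHA + B / tokens ** BETA

model_sizes = np.logspace(6, 11, num=6)    # 1e6 .. 1e11 parameters
budgets = np.logspace(15, 24, num=10)      # 1e15 .. 1e24 FLOPs

# One curve per model size: rows are model sizes, columns are compute budgets.
curves = loss(model_sizes[:, None], budgets[None, :])

# The frontier is the lowest loss that any of the model sizes reaches at each budget.
frontier = curves.min(axis=0)
best_size = model_sizes[curves.argmin(axis=0)]

for c, l, n in zip(budgets, frontier, best_size):
    print(f"compute={c:.0e}  best loss={l:.3f}  best model size={n:.0e}")
```

In this toy setup, every individual curve sits on or above the frontier, and the model size that touches it grows as the compute budget grows.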

Even more intriguing, this pattern appears consistently whether we scale compute, dataset size, or model parameters. As long as the architecture is reasonably well designed, the same scaling behavior emerges.

That consistency raises a profound question. Are we observing a temporary trend in deep learning, or something closer to a fundamental law of intelligent systems?

How Scaling Transformed AI

In 2020, researchers demonstrated these trends clearly in large language models. They showed that a model's error falls as a power law in compute, dataset size, and parameter count. On a log-log plot, a power law becomes a straight line. The slope of that line tells us how quickly performance improves as we scale.
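To see why a power law turns into a straight line, here is a minimal sketch. The constants `A` and `ALPHA` are hypothetical, chosen only for illustration; the point is that fitting a line to the logs of the data recovers the exponent as the slope.

```python
import numpy as np

# Hypothetical constants, chosen only for illustration.
A = 10.0        # scale factor
ALPHA = 0.05    # scaling exponent

compute = np.logspace(0, 8, num=9)    # budgets spanning 8 orders of magnitude
loss = A * compute ** (-ALPHA)        # power law: loss = A * compute^(-ALPHA)

# Taking logs turns the power law into a line:
#   log(loss) = log(A) - ALPHA * log(compute)
slope, intercept = np.polyfit(np.log10(compute), np.log10(loss), deg=1)

print(f"fitted slope: {slope:.3f}")
print(f"recovered A:  {10 ** intercept:.2f}")
```

The fitted slope is the negative of the exponent. A steeper line means each extra order of magnitude of compute buys a bigger drop in loss.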

Later that same year, a massive experiment tested the prediction. GPT-3, a language model with 175 billion parameters, was trained using enormous computing resources. Its performance landed almost exactly where the scaling law predicted.

What mattered most was not just the improvement itself, but how predictable it was.

This realization changed strategy across the AI industry. Instead of searching endlessly for new architectures, many teams began focusing on scaling existing ones.

Why Perfect AI May Be Impossible

At first glance, it might seem that scaling indefinitely could drive error rates all the way to zero. But reality is more complicated.

Language models learn by predicting the next word in a sequence. In many cases, there is no single correct answer. Natural language contains uncertainty. Multiple words may all be valid continuations of the same sentence.

This uncertainty is called the entropy of language. Because of it, even a perfect model cannot achieve zero error. The best it can do is assign the right probability to each realistic possibility, and its error bottoms out at the entropy of the text rather than at zero.

In other words, the limitation is not just in the model. It exists in the data itself.
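A small worked example makes this floor concrete. The next-word distribution below is made up for illustration, and the two "models" are just hand-written probability tables. The error is measured as cross-entropy in bits, which can never drop below the entropy of the true distribution.

```python
import math

def entropy(p):
    """Shannon entropy in bits of a distribution given as {word: probability}."""
    return -sum(q * math.log2(q) for q in p.values() if q > 0)

def cross_entropy(p, model):
    """Average bits a model pays per word when the true distribution is p."""
    return -sum(q * math.log2(model[w]) for w, q in p.items() if q > 0)

# Hypothetical true distribution over the word following "I would like a cup of".
true_dist = {"coffee": 0.5, "tea": 0.25, "water": 0.125, "milk": 0.125}

# A perfect model matches the true distribution exactly.
perfect_model = dict(true_dist)
# An imperfect model that bets too heavily on a single word.
overconfident_model = {"coffee": 0.85, "tea": 0.05, "water": 0.05, "milk": 0.05}

floor = entropy(true_dist)
print(f"entropy of the data:       {floor:.3f} bits")
print(f"loss of perfect model:     {cross_entropy(true_dist, perfect_model):.3f} bits")
print(f"loss of overconfident one: {cross_entropy(true_dist, overconfident_model):.3f} bits")
```

With these made-up numbers the entropy is 1.75 bits. The perfect model lands exactly on that floor, while the overconfident one pays about 2.28 bits. No amount of scaling gets below 1.75 here, because that remaining uncertainty belongs to the data.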

The Geometry Hidden in Data

Another clue comes from something called the manifold hypothesis. It proposes that real-world data does not fill high-dimensional space evenly. Instead, meaningful data lies on lower-dimensional structures embedded within it.

Imagine handwritten digits represented as points in a space with hundreds of dimensions, one for each pixel. Most points in that space would look like noise. But the actual digits cluster along hidden surfaces that represent valid patterns.

Deep learning models appear to be learning the shape of these hidden surfaces.

As we provide more data and larger models, they resolve these structures with increasing precision. This may explain why performance improves according to simple mathematical scaling.
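The idea of data occupying only a sliver of its surrounding space can be shown with a deliberately simple toy. The sketch below places points on a circle and embeds them in 100 dimensions with a random linear map. This is an assumption made to keep the example short: real data surfaces are curved in ways a linear check like this would not fully capture.

```python
import numpy as np

rng = np.random.default_rng(0)

AMBIENT_DIM = 100   # dimensions each data point is stored in
N_POINTS = 500

# Points on a circle: a single underlying degree of freedom (the angle).
angles = rng.uniform(0.0, 2.0 * np.pi, size=N_POINTS)
circle = np.stack([np.cos(angles), np.sin(angles)], axis=1)   # shape (500, 2)

# Embed the circle in 100 dimensions with a random linear map.
embedding = rng.normal(size=(2, AMBIENT_DIM))
data = circle @ embedding                                     # shape (500, 100)

# Singular values measure how much the data varies along each direction.
centered = data - data.mean(axis=0)
singular_values = np.linalg.svd(centered, compute_uv=False)
used_directions = int(np.sum(singular_values > 1e-8 * singular_values[0]))

print(f"ambient dimensions:       {AMBIENT_DIM}")
print(f"directions with variance: {used_directions}")
```

Every point is stored as 100 numbers, yet the data varies along only 2 directions, and a single angle is enough to describe any point. A random point drawn from the full 100-dimensional space would almost never land on this structure.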

A Clue Toward a Theory of Intelligence

Scaling laws have guided astonishing progress in just a few years. From early language models to systems with hundreds of billions of parameters or more, the predictions have remained surprisingly accurate.

But they still do not explain everything. Certain abilities, such as reasoning or problem solving, sometimes appear suddenly as models grow larger. These behaviors do not always follow smooth curves.

So the mystery remains.

Scaling laws may be the first glimpse of deeper principles governing intelligent systems. If that is true, we might be witnessing the early steps toward a unified theory of artificial intelligence.