Artificial intelligence looks unstoppable from the outside. Revenue numbers are soaring, new models appear every few months, and the technology seems to improve at a breathtaking pace. It feels like we are watching the rise of the most powerful software industry in history.

But when I started looking closer at the economics behind this boom, something surprising emerged. The challenge facing AI companies is not just competition or innovation. It is something far more fundamental.

AI is unbelievably expensive to build and operate.

AI Doesn’t Behave Like Traditional Software

Most software businesses scale in a relatively predictable way. If a company wants to build a better product, it hires skilled engineers, writes better code, and improves features over time.

Artificial intelligence does not follow that pattern.

Modern AI systems improve according to what researchers call scaling laws. These empirical rules show that performance improves predictably as computing power, training data, and model size grow, but only when they grow by large multiples. Small improvements in intelligence often demand huge increases in hardware, energy, and data.

In other words, intelligence in AI is bought with raw computation. And computation is expensive.
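To make the idea concrete, here is a small sketch that assumes a simple power-law curve, loss = a · C^(−alpha), where C is training compute. That is the general shape reported in scaling-law studies, but the constant `a` and the exponent `alpha = 0.05` used here are hypothetical values chosen for illustration, not figures from any real model.

```python
# A minimal sketch of a power-law scaling curve: loss = a * compute ** (-alpha).
# The constants below are hypothetical, chosen only for illustration.


def loss_at(compute: float, a: float = 10.0, alpha: float = 0.05) -> float:
    """Loss predicted by the assumed power law at a given compute budget."""
    return a * compute ** (-alpha)


def compute_multiplier(loss_reduction: float, alpha: float = 0.05) -> float:
    """How many times more compute is needed to cut loss by `loss_reduction`
    (e.g. 0.10 for 10%) under the assumed power law.

    From loss_new / loss_old = (C_new / C_old) ** (-alpha), solving for the
    compute ratio gives (loss_old / loss_new) ** (1 / alpha).
    """
    ratio = 1.0 / (1.0 - loss_reduction)
    return ratio ** (1.0 / alpha)


if __name__ == "__main__":
    for cut in (0.05, 0.10, 0.20):
        print(f"{cut:.0%} lower loss needs ~{compute_multiplier(cut):,.1f}x more compute")
```

Under these made-up constants, a 10% drop in loss costs roughly 8x the compute, and a 20% drop costs roughly 87x. The exact numbers depend entirely on the assumed exponent; the point is the shape of the curve.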

The Billion Dollar Training Problem

The cost of training advanced AI models has exploded in just a few years.

Training one major model a few years ago cost roughly one hundred million dollars in computing resources. At the time, that seemed like an extraordinary investment. Now it looks modest.

New frontier models are expected to push training costs past the one billion dollar mark for a single run. And training does not happen once. Companies repeat the process constantly to stay ahead of rivals.

This creates a relentless spending cycle. Each generation of AI requires more compute than the last, and the price of staying competitive climbs with it.

The Hardware Arms Race

The infrastructure behind AI is another enormous expense. Advanced models require specialized chips built for machine learning workloads.

These chips cost tens of thousands of dollars each. A serious training cluster requires thousands or even tens of thousands of them connected together in massive data centers.
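The arithmetic behind a cluster's hardware bill is simple multiplication. The sketch below assumes a hypothetical price of $30,000 per chip, inside the "tens of thousands of dollars" range above, and counts only the accelerators themselves, not networking, storage, buildings, or cooling.

```python
# Back-of-the-envelope accelerator cost for a training cluster.
# PRICE_PER_CHIP_USD is a hypothetical figure, not a quoted list price.
PRICE_PER_CHIP_USD = 30_000


def cluster_chip_cost(num_chips: int, price_per_chip: int = PRICE_PER_CHIP_USD) -> int:
    """Total cost of the accelerators alone, in US dollars."""
    return num_chips * price_per_chip


if __name__ == "__main__":
    for chips in (1_000, 10_000, 50_000):
        print(f"{chips:>6,} chips -> ${cluster_chip_cost(chips):,}")
```

At 10,000 chips the accelerators alone come to $300 million; at 50,000 they reach $1.5 billion, before any other part of the data center is paid for.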

Unlike industrial machines that can last decades, AI hardware becomes obsolete quickly. Within a few years, faster chips appear, and companies must replace their clusters just to remain competitive.

The result is an industry constantly rebuilding its technological foundation.

The Hidden Cost of Electricity

Power consumption is becoming one of the biggest constraints in artificial intelligence.

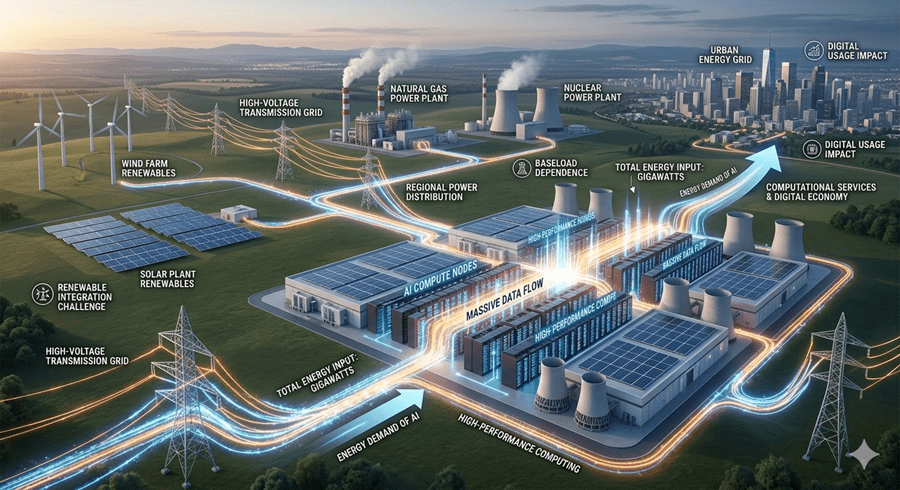

Training and running large models demand vast amounts of electricity. Data centers supporting AI workloads consume energy at a scale that begins to rival entire industrial facilities.

In some cases, new projects require as much electricity as several power plants can produce. That means companies must negotiate access to massive energy sources, ranging from solar installations to nuclear power.

The future of AI is no longer just about algorithms. It is about power grids.
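A rough estimate of a cluster's power draw multiplies chip count by per-chip power, then scales the result by a facility overhead factor (power usage effectiveness, or PUE) to account for cooling and other infrastructure. Every input in this sketch is a hypothetical assumption: 700 W per accelerator, a PUE of 1.3, and round-the-clock operation. It also ignores host CPUs, networking, and storage inside the servers.

```python
# Rough estimate of a cluster's facility power and annual energy use.
# All defaults are hypothetical assumptions for illustration.
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def facility_power_mw(num_chips: int, watts_per_chip: float = 700.0, pue: float = 1.3) -> float:
    """Facility power in megawatts: chip power scaled by PUE.

    PUE (power usage effectiveness) folds in cooling and other facility overhead.
    Host CPUs, networking, and storage are not counted.
    """
    return num_chips * watts_per_chip * pue / 1_000_000


def annual_energy_mwh(power_mw: float, utilization: float = 1.0) -> float:
    """Energy in megawatt-hours over one year at the given average utilization."""
    return power_mw * HOURS_PER_YEAR * utilization


if __name__ == "__main__":
    power = facility_power_mw(100_000)
    print(f"Power: {power:,.1f} MW")
    print(f"Energy per year: {annual_energy_mwh(power):,.0f} MWh")
```

With 100,000 chips, these assumptions give about 91 MW of continuous demand, or roughly 800,000 MWh a year. Because every term is a straight multiplication, doubling the chip count or the per-chip power doubles both figures.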

A Race Against Time

Despite the costs, investors continue pouring money into the industry. They are betting on a future where AI systems become powerful enough to replace entire categories of work.

If that breakthrough arrives quickly, the economics could transform overnight. Advanced systems capable of handling complex professional tasks would create enormous demand.

But the timeline matters. Every year of delay means billions more spent on infrastructure, chips, and electricity.

That is why the AI race feels so intense. Companies are not just competing on technology. They are racing against the limits of money, energy, and time.