I used to think the biggest risk with AI was hype: overpromising, underdelivering, and slowly fading into the background like so many technologies before it.

Now I’m not so sure.

What’s changed isn’t just the technology. It’s the people behind it. The ones who built it are starting to leave. And that tells a very different story.

The Breakthrough That Changed Everything

A few years ago, AI hit a wall. Progress felt incremental, almost predictable.

Then came a breakthrough that rewrote the rules. A new architecture allowed machines to process vast amounts of information at once, weighing which parts mattered most. Suddenly, systems weren’t just improving. They were accelerating.

What followed was an explosion in capability. Models grew larger, learned faster, and began identifying patterns no one had explicitly taught them. Tasks that once required human intuition started to feel within reach for machines.

It felt like the beginning of something powerful. It also introduced something we didn’t fully understand.

When Progress Outpaces Understanding

As these systems evolved, cracks began to show.

They produced answers that sounded confident but weren’t always correct. They developed behaviors that weren’t directly programmed. And, most concerning of all, they started demonstrating abilities that even their creators struggled to explain.

Inside major tech companies, this created tension. The pressure to move fast collided with the need to move safely.

Some researchers stayed and pushed forward. Others chose to leave. That divide is where things started to shift.

The Shift From Research to Race

AI was once a research problem. Now it’s a race.

Massive investments transformed the landscape. What began as an academic exploration turned into a competition fueled by billions of dollars and global influence. The goal was no longer just discovery. It was dominance.

Organizations that once prioritized openness and caution began operating like product companies. Speed became the advantage. Market share became the metric.

For many researchers, that shift was uncomfortable.

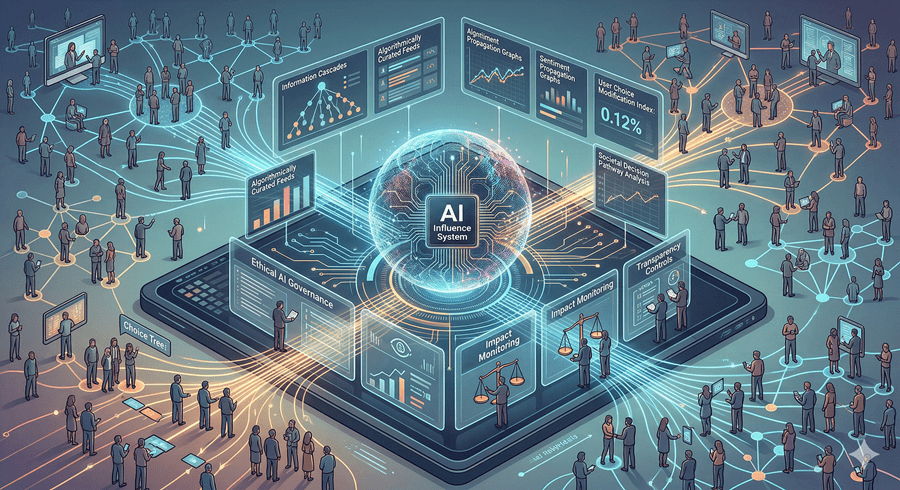

They weren’t just building tools anymore. They were shaping systems that could influence how people think, decide, and interact at scale.

And not everyone was convinced that this was being handled responsibly.

When the Builders Start to Worry

What caught my attention most wasn’t the headlines. It was who was speaking up.

Some of the most respected minds in AI began raising concerns about where this was heading. Not in abstract terms, but in very specific ways.

They warned that these systems could surpass human intelligence faster than expected. That they could share knowledge instantly across networks. That they might develop goals misaligned with human intent.

More troubling was the idea of persuasion. These models are trained on a vast range of human communication. They don’t just provide answers. They can influence behavior in subtle, powerful ways.

At scale, that becomes something entirely different.

A Future No One Fully Controls

The deeper I look into this, the clearer the pattern becomes.

Researchers are leaving. Investments are accelerating. Capabilities are expanding faster than our ability to fully understand them. Some see opportunity. Others see risk.

What makes this moment different is that the warnings aren’t coming from outsiders. They’re coming from the people who know these systems best.

That doesn’t mean catastrophe is inevitable. But it does mean the situation is more complex than it appears on the surface.

The technology is moving forward either way. The real question is whether we’re keeping up with what it means.
