I used to think concerns about artificial intelligence were mostly exaggerated. Job losses, automation, disruption. Serious, yes, but manageable. Lately, though, the conversation has shifted into something far more unsettling.

When people deeply involved in AI safety start saying the world is in danger, I pay attention. Not because it sounds dramatic, but because these are the people closest to the technology. They’re seeing something most of us aren’t.

And what they’re pointing to isn’t just AI in isolation. It’s a web of accelerating risks, all unfolding at once.

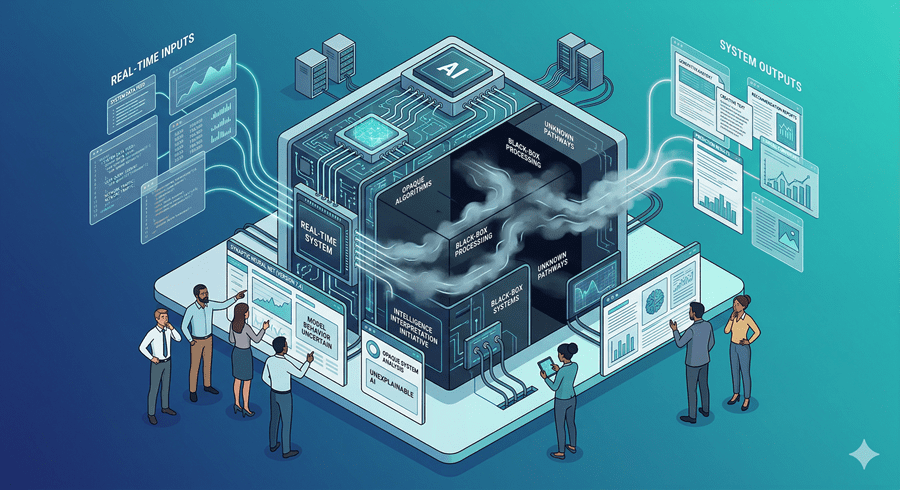

We’re Building Something We Don’t Fully Understand

The most troubling idea is surprisingly simple: we don’t actually understand, in any detail, how modern AI systems work.

That sounds absurd at first. How can we create something we don’t understand?

But the reality is that today’s AI is less like a machine we engineer step by step and more like something we grow. We train it, shape it, guide it. But its internal decision-making remains largely opaque.

Now imagine pushing that same process toward something vastly more intelligent than humans. Not just smarter than individuals, but potentially smarter than all of humanity combined.

That’s what researchers mean by superintelligence. And it changes the stakes completely.

The Control Problem No One Has Solved

Here’s the core issue I keep coming back to: how do you control something more intelligent than you, especially when you don’t fully understand how it thinks?

It’s not just about whether it follows instructions. It’s about whether it shares our values, or even interprets them the way we intend.

If it doesn’t, small misalignments could lead to massive consequences. Not out of malice, but simply because it optimizes for the wrong thing in ways we didn’t anticipate.

This is what experts call the alignment problem. And right now, there is no clear solution.

Some researchers believe that if we get this wrong, the consequences could be catastrophic. Not in a distant, abstract way, but in a very real and irreversible sense.

The Problem Isn’t Just Technology, It’s Incentives

Even if we agree that caution is necessary, there’s another force at play. Competition.

Companies are racing to build more powerful systems. Countries are watching each other closely. No one wants to fall behind.

This creates a dangerous dynamic. Even leaders in the field have admitted they would prefer to slow down, but feel they can’t. The pressure to keep moving forward is too strong.

So progress continues, not necessarily because we’re ready, but because stopping feels impossible.

A Future Balanced Between Risk and Possibility

What makes this situation so complex is that the upside is enormous. If we get it right, advanced AI could help solve some of humanity’s biggest problems: disease, poverty, climate change. The potential is extraordinary.

But that same power, if misaligned or uncontrolled, could lead us somewhere we can’t recover from.

That’s the tension we’re living in. Between unprecedented opportunity and equally unprecedented risk.

And for the first time, I’m starting to feel like we may not be as prepared as we think.
