I used to think progress in AI meant one thing: make models bigger. More parameters, more compute, more everything. That was the pattern. But a recent approach to training large mixture-of-experts models turned that assumption on its head.

Instead of scaling endlessly, the breakthrough came from cutting things away. And somehow, that made the system faster, smarter, and more efficient.

Rethinking Bigger Is Better

At first glance, a trillion-parameter model sounds like the peak of scale. But what caught my attention was not its size. It was what happened during training.

The model actually started even larger. Then, as it learned, it began shedding parts of itself. Roughly a third of its parameters were removed before it even finished training.

What surprised me most was the result. Training became nearly 50% more efficient. Performance did not drop. In some cases, it even improved.

This challenges the idea that every parameter matters. Clearly, some parts of these massive systems are doing very little.

How Mixture of Experts Changes Everything

The architecture behind this is called a mixture of experts. Instead of one giant network handling every task, the system is split into many smaller specialists.

When a piece of input comes in, only a few of these experts are activated. It is like assigning a problem to the most relevant people instead of asking everyone to contribute.
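To make the routing idea concrete, here is a minimal Python sketch of top-k expert selection. It is an illustration only: the function names, the softmax router, and the choice of k=2 are my assumptions, not details of the system described here.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k=2):
    """Pick the k highest-scoring experts for one token.

    Returns (expert_index, weight) pairs, with the weights renormalized
    so they sum to 1 over the chosen experts. Hypothetical helper.
    """
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

# One token, four experts: only the two best-matching experts are activated.
scores = [0.1, 2.0, -1.0, 1.5]
chosen = route_top_k(scores, k=2)
```

Here the token goes to experts 1 and 3, and the other two experts do no work for it at all.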

This design allows models to scale massively without using all their power at once. But it introduces a hidden issue. Not all experts are equally useful.

Over time, a small group ends up doing most of the work, while others barely contribute.

Cutting the Dead Weight in Real Time

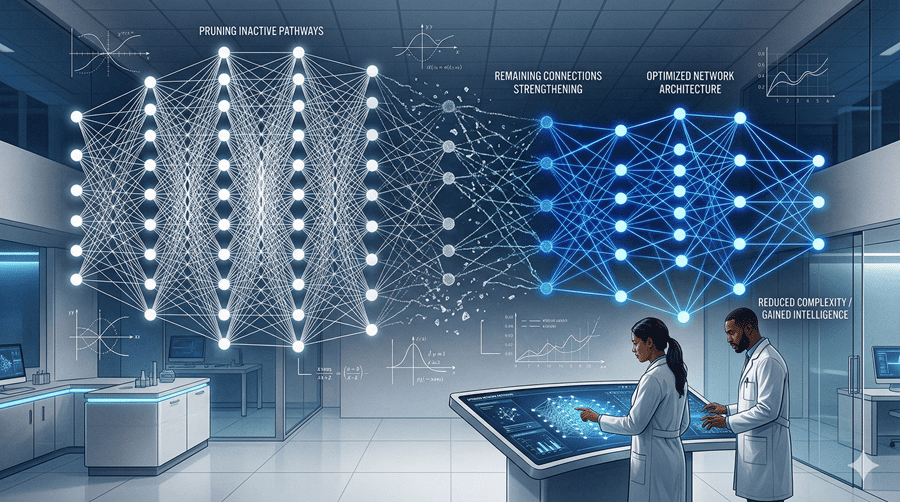

Here is where things get interesting. Instead of waiting until training is done, the system removes weak experts during training itself.

It tracks how often each expert is used. If an expert consistently handles very little work, it becomes a candidate for removal. Once certain conditions are met, it is simply cut out.
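The tracking logic can be sketched in a few lines of Python. I do not know the exact removal conditions used, so the ones below are assumptions: an expert is pruned when its share of routed tokens stays under `min_share` for `patience` consecutive windows. The class and parameter names are hypothetical.

```python
from collections import Counter

class ExpertUsageTracker:
    """Tracks per-expert usage and prunes experts that stay underused."""

    def __init__(self, num_experts, min_share=0.01, patience=3):
        self.active = set(range(num_experts))
        self.min_share = min_share    # assumed threshold on token share
        self.patience = patience      # assumed number of consecutive low windows
        self.low_streak = Counter()   # consecutive low-usage windows per expert
        self.window = Counter()       # tokens routed in the current window

    def record(self, expert_ids):
        """Count the tokens routed to each still-active expert."""
        for e in expert_ids:
            if e in self.active:
                self.window[e] += 1

    def end_window(self):
        """Close the current window and return the experts pruned in it."""
        total = sum(self.window.values())
        pruned = []
        if total == 0:
            return pruned
        for e in sorted(self.active):
            if self.window[e] / total < self.min_share:
                self.low_streak[e] += 1
            else:
                self.low_streak[e] = 0  # usage recovered, reset the streak
            if self.low_streak[e] >= self.patience:
                pruned.append(e)
        self.active -= set(pruned)
        self.window.clear()
        return pruned

# Experts 2 and 3 receive under 5% of tokens in two windows in a row.
tracker = ExpertUsageTracker(num_experts=4, min_share=0.05, patience=2)
history = []
for _ in range(2):
    tracker.record([0] * 50 + [1] * 45 + [2] * 4 + [3] * 1)
    history.append(tracker.end_window())
```

Requiring several low windows in a row, rather than one, is a design choice in this sketch: it avoids cutting an expert that was only briefly quiet.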

This process keeps the model focused. The remaining experts become more specialized and effective.

What I find fascinating is that this is not just pruning for efficiency. It is shaping the intelligence of the system as it learns.

Balancing the System Behind the Scenes

There was another problem to solve. These models run across many GPUs, and uneven workloads can slow everything down.

Some experts receive too many tasks, overloading certain machines, while others sit idle.

To fix this, the system constantly redistributes experts across hardware. High-demand experts are placed on separate GPUs, and lighter ones are grouped together so that each device carries a similar load.

This balancing act significantly boosts performance. Efficiency gains come not just from removing unused parts, but also from better coordination of what remains.
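One simple way to picture this placement is a greedy heuristic: sort experts by load and always hand the next one to the least-loaded GPU. This is my own stand-in for illustration, not the actual scheduler, and the expert names and load numbers are made up.

```python
import heapq

def place_experts(expert_load, num_gpus):
    """Greedy placement: heaviest expert first, onto the least-loaded GPU.

    expert_load maps an expert name to its recent token count.
    Returns a dict mapping GPU index to the list of experts placed on it.
    """
    heap = [(0.0, gpu) for gpu in range(num_gpus)]  # (current load, gpu index)
    heapq.heapify(heap)
    placement = {gpu: [] for gpu in range(num_gpus)}
    by_load = sorted(expert_load.items(), key=lambda kv: kv[1], reverse=True)
    for expert, load in by_load:
        total, gpu = heapq.heappop(heap)  # least-loaded GPU so far
        placement[gpu].append(expert)
        heapq.heappush(heap, (total + load, gpu))
    return placement

# Hypothetical loads: two hot experts and four light ones, on two GPUs.
loads = {"e0": 40, "e1": 35, "e2": 10, "e3": 8, "e4": 5, "e5": 2}
placement = place_experts(loads, num_gpus=2)
gpu_totals = {g: sum(loads[e] for e in names) for g, names in placement.items()}
```

In this example the two hot experts end up on different GPUs, and the light ones fill in around them until both devices carry the same total load.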

Teaching AI to Think Less, Not More

One final insight stood out to me. More reasoning is not always better.

AI systems often overthink, producing long and unnecessary chains of thought. To counter this, the training process rewards concise answers.

If the model solves a problem with fewer steps, it gets a higher reward. If it overcomplicates things, it is penalized.
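A reward like this can be sketched as a correctness score minus a length penalty. The exact formula is not something I have seen published, so treat the shape below, the per-step penalty, and the cap as assumptions.

```python
def concise_reward(is_correct, num_steps, penalty_per_step=0.05, max_penalty=0.5):
    """Reward correct answers, with a deduction that grows with reasoning length.

    Wrong answers get 0.0 regardless of length, so brevity never beats
    correctness. The penalty is capped so a long correct answer still
    scores above a wrong one. All constants are illustrative.
    """
    if not is_correct:
        return 0.0
    penalty = min(max_penalty, penalty_per_step * num_steps)
    return 1.0 - penalty

short_right = concise_reward(True, num_steps=3)   # few steps, small penalty
long_right = concise_reward(True, num_steps=20)   # many steps, capped penalty
short_wrong = concise_reward(False, num_steps=1)  # brevity alone earns nothing
```

With these settings, a short correct solution outscores a long correct one, and both outscore any wrong answer.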

The result is a system that knows when to think deeply and when to keep it simple. Accuracy improves, while responses become shorter and more efficient.

This feels like a shift in philosophy. Instead of building larger minds, we are learning how to build sharper ones.
