The most important part of Google’s latest AI rollout is not any single model upgrade. It is the shift in interface design.

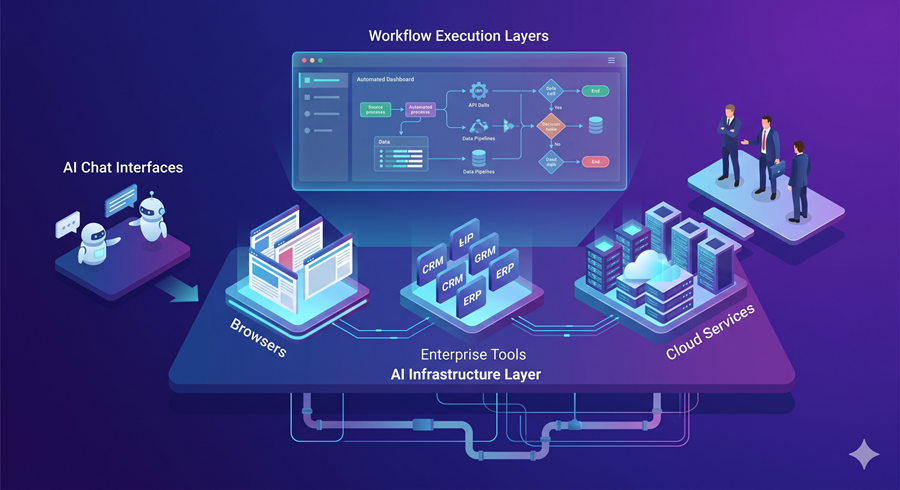

Across Chrome, Gemini Enterprise, NotebookLM, DeepMind, and Google Research, the pattern is the same: AI is being turned from a prompt-response tool into a structured execution layer. That is the real story. Google is no longer just improving assistants. It is assembling the infrastructure for AI workflows.

Chrome Skills Turns Prompts Into Systems

The most underrated release may be Skills in Chrome. On the surface, it looks simple: save a prompt and reuse it across pages. In practice, it changes what the browser is for.

This turns the browser into a lightweight agent environment. Reusable prompts become workflows. Running one prompt across several tabs becomes a form of retrieval. Prompt libraries become native browser utilities. What used to require dedicated prompt-management tooling or orchestration frameworks is now becoming part of the default interface.

That shift matters because it moves workflow automation out of developer tooling and into everyday user behavior.
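To make the idea of a saved prompt acting as a workflow concrete, here is a minimal sketch in Python. It is not Chrome's implementation or API. The `Skill` class, the `{page}` placeholder, the `run_on_tabs` helper, and the `model` callable are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Skill:
    """A saved, reusable prompt (hypothetical structure, not Chrome's)."""
    name: str
    template: str  # prompt text containing a {page} placeholder

    def render(self, page_text: str) -> str:
        # Fill the saved prompt with the content of one page.
        return self.template.format(page=page_text)


def run_on_tabs(
    skill: Skill,
    tabs: Dict[str, str],
    model: Callable[[str], str],
) -> Dict[str, str]:
    """Apply one saved skill to every open tab.

    `tabs` maps a URL to that page's text; `model` is any function that
    takes a prompt string and returns a response string (an assumption
    standing in for a real model call).
    """
    return {url: model(skill.render(text)) for url, text in tabs.items()}
```

Given a skill such as `Skill("summarize", "Summarize this page: {page}")`, a single call to `run_on_tabs` returns one response per URL. That is the sense in which a stored prompt starts to behave like a small workflow rather than a one-off query.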

Gemini Enterprise Is Starting to Look Like an Operating Layer

The new agent tab in Gemini Enterprise makes the direction even clearer. This is no longer just an assistant interface. It is beginning to resemble an execution workspace.

Goals, connected apps, files, task state, and human review controls are all signals that Google is building toward a more structured agent environment. Once AI can coordinate tools, track state, and request approval before acting, it stops behaving like chat and starts behaving like software.

That is the more important transition: AI is becoming less like a conversational layer and more like an operating layer.
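The pieces named above (goals, connected apps, task state, and human review) can be sketched as a small state machine. This is an illustrative sketch only, not Gemini Enterprise's design. `TaskState`, `Task`, `run_task`, the `tools` mapping, and the `approve` callback are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict, Optional


class TaskState(Enum):
    PENDING = "pending"
    AWAITING_APPROVAL = "awaiting_approval"
    DONE = "done"
    REJECTED = "rejected"


@dataclass
class Task:
    goal: str                       # what the agent is asked to achieve
    tool: str                       # key of a connected app (hypothetical)
    state: TaskState = TaskState.PENDING
    result: Optional[str] = None


def run_task(
    task: Task,
    tools: Dict[str, Callable[[str], str]],
    approve: Callable[[Task], bool],
) -> Task:
    """Run one task, pausing for human review before acting."""
    task.state = TaskState.AWAITING_APPROVAL
    if not approve(task):
        # The reviewer declined, so no tool is ever called.
        task.state = TaskState.REJECTED
        return task
    task.result = tools[task.tool](task.goal)
    task.state = TaskState.DONE
    return task
```

The point of the sketch is that the approval check sits between intent and action. The tool is never invoked unless `approve` returns `True`, and the task records which state it ended in, which is what makes the behavior inspectable in a way a chat transcript is not.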

NotebookLM and Robotics Point to the Same Strategy

NotebookLM is moving in the same direction. Canvas and connectors push it beyond summarization and into structured knowledge work. Instead of simply reading information, users can start turning source material into interactive outputs, visual systems, and reusable research environments.

On the robotics side, the same architectural pattern appears again. Gemini Robotics ER separates reasoning from execution. One model plans, interprets, and evaluates. Another acts. That division matters because it reflects the same systems logic emerging across Google’s software stack: reasoning becomes modular, execution becomes controllable, and AI becomes easier to supervise.

This is not just a robotics improvement. It is the same design philosophy applied to physical systems.
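The split between a model that plans and evaluates and a component that acts can be illustrated with a short sketch. This is not how Gemini Robotics ER is implemented. The `plan` function is a rule-based stand-in for a reasoning model, and `Step`, `Executor`, `evaluate`, and `run` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Step:
    action: str
    target: str


def plan(goal: str) -> List[Step]:
    """Reasoning side: turn a goal like 'cup to shelf' into steps.

    A trivial rule stands in for a real reasoning model (assumption).
    """
    obj, dest = goal.split(" to ")
    return [Step("pick", obj), Step("place", dest)]


class Executor:
    """Execution side: carries out steps and knows nothing about goals."""

    def __init__(self) -> None:
        self.log: List[str] = []

    def act(self, step: Step) -> bool:
        self.log.append(f"{step.action}:{step.target}")
        return True  # a real executor would report success or failure


def evaluate(steps: List[Step], outcomes: List[bool]) -> bool:
    """Reasoning side again: judge whether the plan was carried out."""
    return len(outcomes) == len(steps) and all(outcomes)


def run(goal: str, executor: Executor) -> bool:
    steps = plan(goal)
    outcomes = [executor.act(step) for step in steps]
    return evaluate(steps, outcomes)
```

Because the executor only ever sees individual steps, it can be swapped, logged, or constrained without touching the planning logic. That is the kind of modularity and supervision the separation is meant to buy.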

Google Is Building AI as Infrastructure

Vantage makes the broader strategy even more explicit. Google is not only building systems that generate output. It is building systems that evaluate behavior, guide interaction, and measure judgment.

That is a different category of AI product. It suggests Google is thinking beyond generation and toward orchestration, supervision, and assessment.

Taken together, these releases point to the same conclusion: Google is not simply making AI smarter. It is making AI operational.

The real shift is not better chat. It is the slow construction of AI as infrastructure.