For years, the race toward AGI has had a strange problem at its core.

Everyone claims to be building it. No one agrees on how to measure it.

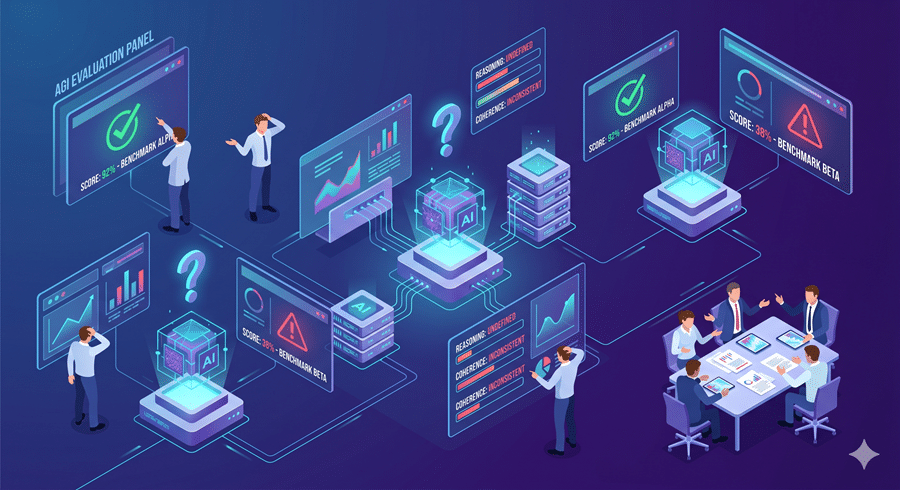

That has made the conversation around AGI unusually vague for something so consequential. Timelines are speculative, benchmarks are inconsistent, and every major lab seems to define the destination differently. Google’s latest framework is an attempt to fix that.

The Real Problem Was Never Just Building AGI

The biggest issue in AI has not been progress. It has been measurement.

Current benchmarks are narrow, saturate quickly, and are often vulnerable to contamination. A model may score well, but it is difficult to tell whether it reasoned, memorized, or simply benefited from familiarity with the benchmark. That makes progress hard to interpret.

Google’s approach is more grounded. Instead of treating intelligence as one score, it breaks cognition into measurable components and evaluates systems across each of them.

Not one leaderboard. A full cognitive profile.

Intelligence Is Being Broken Into Parts

The framework splits intelligence into ten faculties, including perception, memory, learning, reasoning, attention, planning, and social understanding.

That matters because intelligence is not singular. It is composite.

A system may perform exceptionally in reasoning while remaining weak in memory or self-monitoring. Another may generate fluent output while lacking planning or adaptive learning.

This is a more useful way to evaluate capability because it reveals where systems are genuinely strong and where they only appear competent. That distinction is becoming increasingly important.

Why This Is More Useful Than Benchmark Theater

Most AI benchmarks reward narrow optimization. Models improve by learning the shape of the test, not necessarily the skill the test was meant to measure. That makes many current evaluations less useful than they appear.

This framework tries to solve that by comparing AI directly against human baselines across the same tasks, under the same conditions.

That supplies something current AI evaluation often lacks: context.

Not just whether a model performs well, but how it performs relative to actual human cognition. That is a much more meaningful standard.

The Most Important Shift Is Conceptual

What makes this significant is not just the test design. It is the shift in framing.

Google is treating AGI less like a product milestone and more like a scientific measurement problem. That changes the conversation.

It moves AGI away from branding and toward falsifiability. Away from demos and toward cognitive evidence. Away from claims and toward profiles.

That makes the discussion harder to manipulate.

Why This Matters Now

AI is becoming more capable, but also harder to interpret.

It can appear brilliant in one domain and fail absurdly in another. Without better measurement, capability gets mistaken for generality. That is the real risk.

Before AGI becomes a deployment problem, it has to be a measurement problem, and one solved well enough that we can trust what we are actually seeing.

This framework does not settle the AGI debate, but it does make it harder to hide behind vague definitions.
