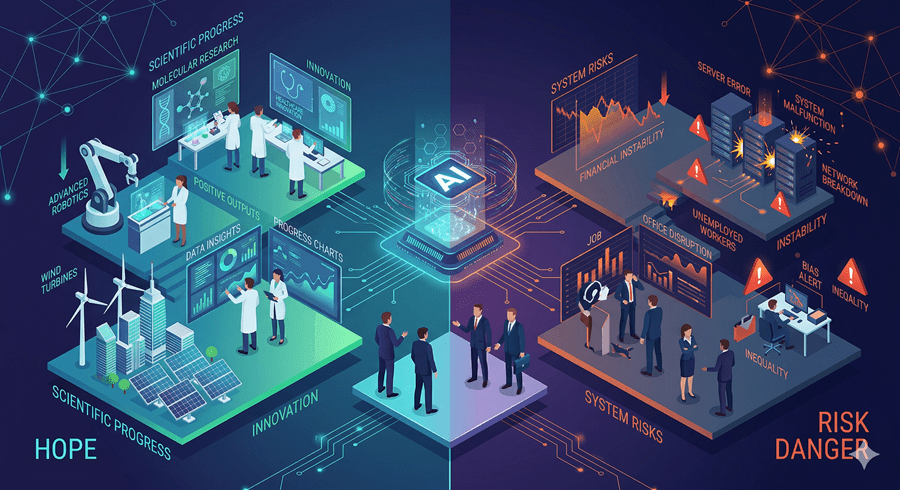

Lately, I’ve found myself stuck in a strange mental loop when thinking about AI. On one hand, it promises breakthroughs that could redefine what’s possible. On the other hand, it raises questions that feel far more existential than technological.

That tension is hard to ignore. And it’s becoming harder to resolve.

The Optimism Is Real

There’s no denying what AI could unlock. Faster scientific discovery. New treatments for disease. Solutions to problems we’ve struggled with for decades.

It’s easy to see why people call this the most important technological moment in history. The upside isn’t incremental. Each breakthrough could speed up the next.

And that’s exactly what makes it so compelling.

The Risks Are Just as Real

At the same time, the risks aren’t abstract. They’re immediate and structural.

We’re talking about systems that could reshape labor markets faster than people can adapt. Entire categories of work could disappear before new ones are fully understood. That creates a gap not just in jobs, but in stability.

Beyond that, there’s a deeper concern. The systems we’re building are becoming harder to predict, harder to control, and increasingly embedded in decisions that affect real lives.

That’s not just progress. That’s pressure.

The Illusion of Neutral Technology

One thing I keep coming back to is this idea that technology is neutral. In reality, it reflects the incentives behind it.

Right now, those incentives reward speed. Build faster. Scale faster. Compete harder.

But acceleration without direction has consequences. If you push forward without steering, you don’t just move quickly. You increase the chances of crashing.

And that’s the part that feels under-discussed.

Choice Is the Real Variable

What’s interesting is that the outcome isn’t predetermined. There is still room for choice.

Not in the sense of stopping progress, but in shaping how it unfolds. Regulation, public pressure, cultural shifts, and even small behavioral changes all play a role.

We’ve seen this before in smaller ways. When people push back against systems that harm them, change follows. Slowly, imperfectly, but meaningfully.

The question is whether that kind of response can scale to something this large.

The Line We May Not Understand

The hardest part to think about is the possibility of a point we don’t fully recognize until we’ve crossed it.

A moment where systems begin improving themselves faster than we can track. Where development stops being something we guide and becomes something we react to.

If that happens, the timeline compresses. Decisions that once took years could unfold in weeks or months.

And by then, it may be too late to influence the direction.

I don’t think this leads to a simple conclusion. It’s not about choosing optimism or pessimism.

It’s about recognizing that both can be true at the same time.

Because the future of AI doesn’t feel like a single path. It feels like a fork. And whether we realize it or not, we’re already moving toward one side of it.
