The AI landscape is shifting in two very different but equally important ways. On one side, I see companies tightening control over their core technologies. On the other, I see systems beginning to improve themselves with minimal human input. Both directions point to a future where AI is not just powerful, but far more independent.

Why Ownership Suddenly Matters More

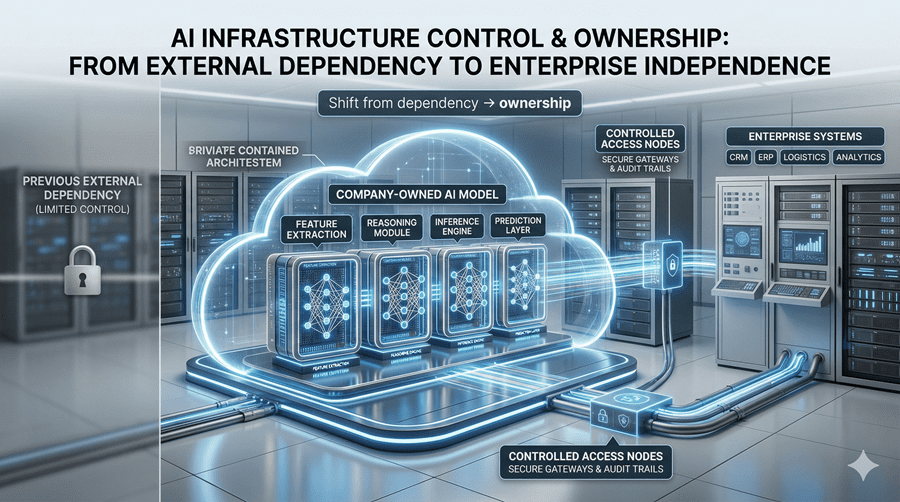

For a long time, even major players relied on external partners for key capabilities. That model worked, but it came with limits. When you depend on someone else’s system, you also depend on their pace, priorities, and constraints.

Now, I am noticing a clear move toward building in-house models. The goal is not just better performance, but control. Owning the model means faster updates, tighter product integration, and more flexibility in shaping how the technology evolves. It also changes the economics, allowing companies to optimize costs instead of inheriting them.

This shift feels less like a feature upgrade and more like a strategic reset.

The Quiet Breakthrough in Image Generation

What stands out most in the latest developments is not just visual quality, but usability. Image generation is finally becoming practical for real-world work.

One key improvement is how well text appears inside generated images. This has been a persistent weakness for years. Clean typography in posters, menus, or presentations used to break easily. Now, it is becoming reliable enough to use in everyday workflows.

At the same time, realism has improved significantly. Lighting, textures, and human features are starting to feel natural rather than artificial. That reduces the need for post-editing, which has always been a hidden cost in creative workflows.

Still, limitations remain. Early versions often come with restrictions like limited formats, slower generation speeds, and missing features. It is progress, but not perfection.

From Generating Content to Controlling Workflows

Beyond individual outputs, the bigger evolution is happening in workflows. I am seeing tools that no longer just generate images or clips, but guide the entire creative process from start to finish.

Instead of piecing things together across multiple platforms, everything now happens in one place. You define characters, set environments, generate scenes, control motion, and even finalize visual tone without leaving the workspace.

This shift matters because creation is not just about assets. It is about continuity, control, and consistency across the entire process.

The Rise of Self-Evolving Systems

While one side focuses on ownership, the other is pushing something more radical: systems that actively improve themselves.

These models do not just execute tasks. They analyze their own performance, identify weaknesses, and refine the way they operate. I find this especially powerful in engineering workflows, where complexity is high and iteration is constant.

In some cases, these systems can debug production issues, trace root causes, and suggest fixes in minutes. That goes beyond assistance. It starts to resemble decision-making.

Even more interesting is the feedback loop. The system builds its own evaluation sets, tests changes, and decides what works. Over time, it becomes better not just at tasks, but at learning how to improve.
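To make that loop concrete, here is a minimal toy sketch of the pattern: build an evaluation set, propose a change, test it, and keep it only if it scores better. Everything in it is an assumption made for illustration, including the function names (`build_eval_set`, `score`, `improve`), the single `threshold` parameter standing in for "how the system operates", and the hidden 0.6 rule used to label the data. Real self-improving systems are far more involved and may work quite differently.

```python
import random


def build_eval_set(rng, size=50):
    """Hypothetical eval set: (input, expected) pairs labeled by a toy rule."""
    return [(x, x >= 0.6) for x in (rng.random() for _ in range(size))]


def score(threshold, eval_set):
    """Fraction of eval cases a simple threshold classifier gets right."""
    correct = sum((x >= threshold) == expected for x, expected in eval_set)
    return correct / len(eval_set)


def improve(threshold, eval_set, rng, rounds=200, step=0.05):
    """Propose small random changes and keep only those that score strictly better."""
    best = score(threshold, eval_set)
    for _ in range(rounds):
        candidate = threshold + rng.uniform(-step, step)
        candidate_score = score(candidate, eval_set)
        if candidate_score > best:
            threshold, best = candidate, candidate_score
    return threshold, best


rng = random.Random(0)  # fixed seed so runs are repeatable
eval_set = build_eval_set(rng)

start = 0.2  # deliberately poor starting behavior
start_score = score(start, eval_set)
tuned, tuned_score = improve(start, eval_set, rng)

print(f"before: threshold={start:.2f} score={start_score:.2f}")
print(f"after:  threshold={tuned:.2f} score={tuned_score:.2f}")
```

Because a change is accepted only when it beats the current score, the result can never be worse than the starting point on this evaluation set. The same property is also the main risk of the pattern: a system that grades itself improves only against the tests it wrote for itself.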

Where This Is All Heading

What I see emerging is a split path that eventually converges. On one side, tighter control over AI infrastructure. On the other, increasing autonomy within the systems themselves.

Together, they point toward a future where AI is both deeply integrated and increasingly self-directed. That combination could redefine how work gets done across industries.
