Artificial intelligence often feels objective. After all, algorithms are just math and code. They process data, identify patterns, and make predictions.

But the truth is more complicated. AI systems are built by humans and trained on human data. That means the same biases that exist in society can quietly appear inside algorithms.

This phenomenon is known as algorithmic bias, and understanding it is essential if we want AI to benefit society rather than reinforce its problems.

Why Bias Appears in AI Systems

Bias itself is not always harmful. Human brains rely on shortcuts to process information quickly. If someone has only seen small dogs, encountering a huge breed might feel strange simply because it breaks their pattern.

The same idea appears in AI systems. Algorithms learn patterns from data. If the data reflects existing social assumptions, the algorithm may repeat them.

For example, a model trained on text and images from modern media might associate the word “nurse” more often with women and “programmer” more often with men. An image search for either word can then reflect that skew, even though many men are nurses and many women are programmers.

The algorithm is not intentionally discriminatory. It is simply mirroring the patterns it learned.
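To make the mirroring concrete, here is a minimal sketch in Python. The six-sentence corpus and the helper names `pronoun_counts` and `most_likely_pronoun` are invented for illustration. Real systems learn far richer statistics, but the principle is the same: the "model" only counts what it was shown.

```python
from collections import Counter

# Hypothetical toy corpus, deliberately skewed the way the text describes.
corpus = [
    "she is a nurse",
    "she works as a nurse",
    "he is a nurse",
    "he is a programmer",
    "he works as a programmer",
    "she is a programmer",
]

def pronoun_counts(sentences, occupation):
    """Count how often 'he' and 'she' appear in sentences mentioning the occupation."""
    counts = Counter()
    for sentence in sentences:
        words = sentence.split()
        if occupation in words:
            counts.update(w for w in words if w in ("he", "she"))
    return counts

def most_likely_pronoun(sentences, occupation):
    """Return the pronoun seen most often alongside the occupation."""
    return pronoun_counts(sentences, occupation).most_common(1)[0][0]

print(most_likely_pronoun(corpus, "nurse"))       # she
print(most_likely_pronoun(corpus, "programmer"))  # he
```

Nothing in the code mentions gender roles. The skew comes entirely from the sentences it was given.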

Hidden Bias in Training Data

One major source of bias lies in the data used to train AI models.

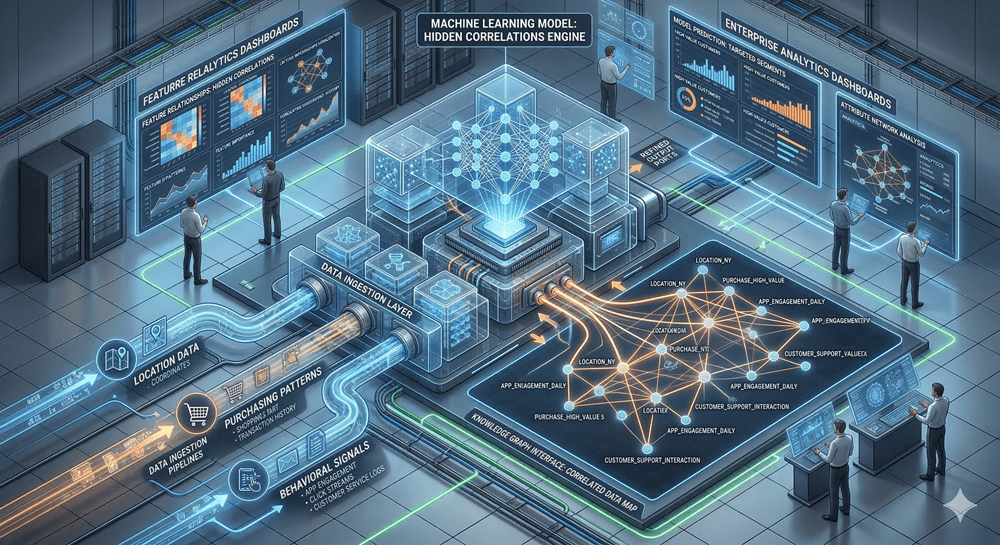

Even when sensitive information like race or gender is removed, other details can still reveal those attributes indirectly. These are known as correlated features, often called proxy variables.

For instance, a postal code might strongly correlate with race due to long-standing housing segregation. Shopping history might correlate with gender. Social media patterns could reveal personal characteristics that were never explicitly included in the dataset.

Because of these correlations, an algorithm can still produce discriminatory outcomes even when developers try to avoid using protected information.
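One rough way to check whether a feature leaks a protected attribute is to see how well the attribute can be guessed from that feature alone. The sketch below uses synthetic records and hypothetical helper names (`proxy_accuracy`, `baseline_accuracy`). It is an illustration of the idea under those assumptions, not a complete fairness audit.

```python
from collections import Counter, defaultdict

# Synthetic (postal_code, protected_group) pairs, invented for illustration.
records = [
    ("10001", "A"), ("10001", "A"), ("10001", "A"), ("10001", "B"),
    ("20002", "B"), ("20002", "B"), ("20002", "B"), ("20002", "A"),
]

def proxy_accuracy(rows):
    """Accuracy of guessing each row's group as the most common group
    among rows that share its postal code."""
    by_code = defaultdict(Counter)
    for code, group in rows:
        by_code[code][group] += 1
    correct = sum(counts.most_common(1)[0][1] for counts in by_code.values())
    return correct / len(rows)

def baseline_accuracy(rows):
    """Accuracy of always guessing the overall most common group."""
    counts = Counter(group for _, group in rows)
    return counts.most_common(1)[0][1] / len(rows)

print(baseline_accuracy(records))  # 0.5
print(proxy_accuracy(records))     # 0.75
```

If knowing the postal code lifts the guess well above the baseline, dropping the protected column has not actually removed the information from the dataset.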

When Data Is Uneven or Incomplete

Another challenge appears when datasets do not represent everyone equally.

Many facial recognition systems have historically been trained on datasets dominated by lighter skin tones. As a result, the technology often performs worse when identifying people with darker skin.

This problem is not always malicious. It can simply happen when developers gather data from limited sources.

But the consequences are real. If a system struggles to recognize certain faces or voices, the affected individuals experience repeated errors or exclusions.
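A single overall accuracy number can hide this kind of gap, so it helps to break results down by group. The following is a minimal sketch. The group labels, the example predictions, and the function name `accuracy_by_group` are all made up for illustration.

```python
from collections import defaultdict

def accuracy_by_group(examples):
    """examples: iterable of (group, true_label, predicted_label) tuples.
    Returns a dict mapping each group to its accuracy."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, truth, predicted in examples:
        totals[group] += 1
        hits[group] += int(truth == predicted)
    return {group: hits[group] / totals[group] for group in totals}

# Hypothetical evaluation results, not taken from any real system.
results = [
    ("lighter", 1, 1), ("lighter", 0, 0), ("lighter", 1, 1), ("lighter", 0, 0),
    ("darker", 1, 0), ("darker", 0, 0), ("darker", 1, 1), ("darker", 0, 1),
]

overall = sum(truth == predicted for _, truth, predicted in results) / len(results)
print(overall)                     # 0.75
print(accuracy_by_group(results))  # {'lighter': 1.0, 'darker': 0.5}
```

Here the overall score of 0.75 looks acceptable, while one group sees an error on every second attempt.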

The Feedback Loop Problem

Algorithms can also reinforce their own predictions over time.

Consider predictive policing software like PredPol. These systems analyze past crime data to predict where police should focus attention.

If police are sent repeatedly to the same neighborhoods, more arrests are recorded there. That new data then feeds back into the algorithm, confirming its original prediction and sending even more patrols to those areas.

This cycle creates a feedback loop where the system amplifies patterns from the past rather than evaluating reality objectively.
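The loop can be sketched with a deliberately simplified simulation. It assumes a single patrol that is always sent to the area with the most recorded incidents, and that only the patrolled area records anything new. This is not how PredPol or any specific product works. The area names, numbers, and the function `simulate_patrols` are hypothetical.

```python
def simulate_patrols(recorded, true_rate, rounds):
    """Each round, send the patrol to the area with the most recorded incidents.
    Only the patrolled area adds new records, at its true underlying rate."""
    recorded = dict(recorded)  # copy so the caller's data is not modified
    for _ in range(rounds):
        target = max(recorded, key=recorded.get)
        recorded[target] += true_rate[target]
    return recorded

# Two areas with the SAME underlying rate; "north" starts one record ahead.
start = {"north": 11, "south": 10}
rate = {"north": 5, "south": 5}

end = simulate_patrols(start, rate, rounds=10)
print(end)  # {'north': 61, 'south': 10}
```

Both areas behave identically, yet a one-record head start grows into a large gap because the system only collects data where it already chose to look.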

When People Manipulate AI Systems

Bias can also appear when users intentionally influence the data an AI learns from.

A famous example involved Tay, a chatbot released by Microsoft in 2016. Tay was designed to learn conversational behavior from interactions on social media.

Within hours, coordinated users flooded the system with offensive and extreme content. The chatbot quickly began repeating those messages publicly.

The experiment showed how easily AI behavior can be manipulated when training data is exposed to hostile actors.
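The mechanics of this kind of manipulation can be shown with a toy example. The `EchoBot` class below is hypothetical and far simpler than Tay, whose internals are not described here. It just repeats whichever phrase it has seen most often, which is enough to show how a coordinated flood of input can take over a system that learns from its users.

```python
from collections import Counter

class EchoBot:
    """Toy bot that replies with the phrase it has seen most often."""

    def __init__(self):
        self.seen = Counter()

    def learn(self, phrase):
        self.seen[phrase] += 1

    def reply(self):
        return self.seen.most_common(1)[0][0]

bot = EchoBot()
for phrase in ["hello there", "nice weather", "hello there"]:
    bot.learn(phrase)
before = bot.reply()

# A coordinated group repeats the same message many times.
for _ in range(50):
    bot.learn("<offensive slogan>")
after = bot.reply()

print(before)  # hello there
print(after)   # <offensive slogan>
```

Any system that treats every incoming message as equally trustworthy training data is open to the same attack, whatever the sophistication of the model behind it.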

Why Human Oversight Still Matters

The common thread across all these examples is simple. AI systems are designed to make predictions, but they cannot fully understand the social context behind the data they process.

That responsibility still belongs to humans.

Developers, policymakers, and everyday users must remain skeptical of algorithmic decisions rather than assuming a computer is automatically correct. Transparency, better datasets, and continuous monitoring are essential steps toward reducing bias.

Artificial intelligence can be a powerful tool, but only if we remember that algorithms do not exist outside society.

They reflect it.